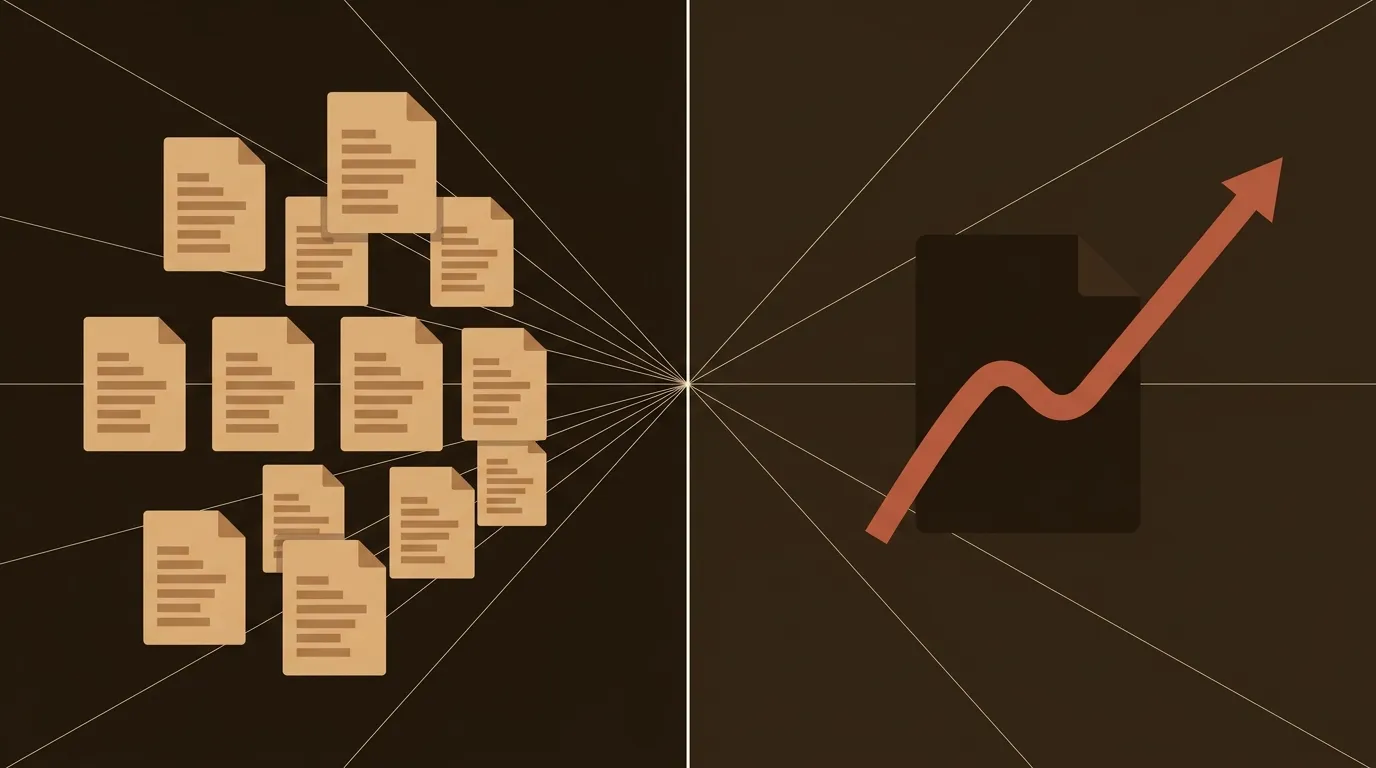

Ninety-one percent of content that ranks in the top three positions cites five or more external statistics. That number, on its own, isn't surprising. What's surprising is how few B2B content teams have noticed the problem that follows from it: when every team uses AI to draft content, and every AI pulls from the same public data sources, the citations across competing articles start to look identical.

We've been watching this play out for over a year. The same HubSpot stat. The same Gartner forecast. The same McKinsey pull quote. Across ten competing blog posts targeting the same keyword, you'll find six of them citing the exact same three studies. Search engines notice. And increasingly, LLMs cluster near-duplicate URLs together and choose one page to represent the entire set. If your sourcing matches your competitors' sourcing, your content becomes interchangeable. Invisible.

The emerging constraint isn't publishing volume. It's data differentiation.

The Statistical Monoculture Problem

AI writing tools are exceptionally good at finding and citing widely available data. That's also their biggest weakness. They train on the same corpora, surface the same studies, and produce drafts that cite the same statistics your competitor's AI just cited.

Research from ALM Corp found that even when AI-generated content isn't word-for-word identical, it can appear duplicate-like to search engines because of similar patterns, structures, and repetitive sourcing. The result: reduced visibility. Systems that summarize answers, whether Google's AI Overviews or ChatGPT's citations, have little reason to pull from pages that say the same thing as ten others.

The data on this is stark. A Semrush study found that content classified as purely AI-generated appeared in the top spot just 9% of the time, while human-written content held that position 80% of the time. But the recommendation from the same study isn't "stop using AI." It's this: use AI to move faster through research, outlining, and drafting, then invest the time saved into incorporating expert insights and proprietary data.

That's the playbook we're going to break down.

Why Your Competitors' Content Looks Like Yours

Pull up any B2B topic, say "account-based marketing ROI" or "customer retention strategies." Now open the top ten organic results. You'll notice a pattern.

Three or four of them cite the same Bain & Company stat about retention being 5-25x cheaper than acquisition. Half of them reference the same Forrester or Gartner report. The introductions follow the same structure. The conclusions say the same thing in different words.

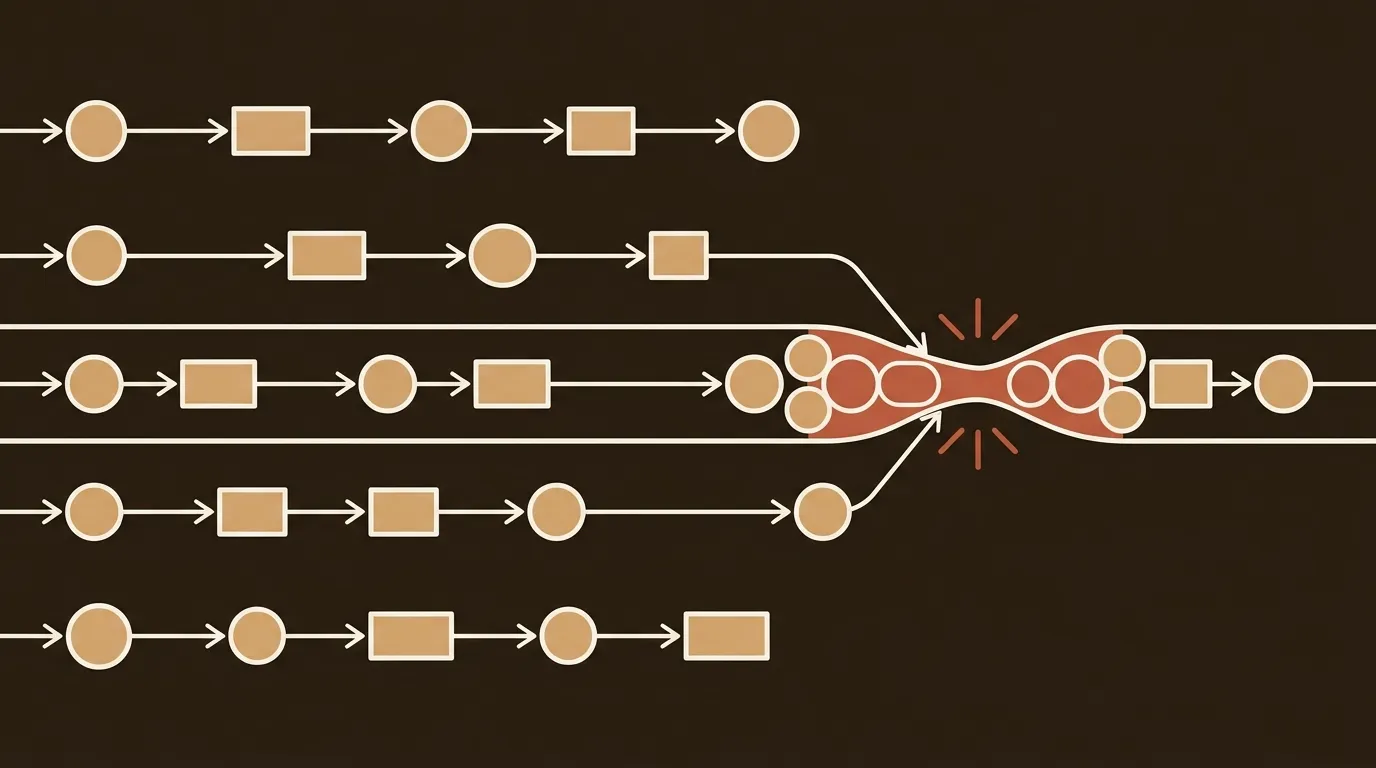

This isn't a coincidence. It's the predictable outcome of a content production model where AI does the sourcing and humans do the editing. AI tools pull from what's publicly available and frequently cited. Frequently cited data gets cited more, creating a feedback loop.

The result is what we call statistical monoculture: a market condition where competing content becomes indistinguishable at the evidence layer. And search engines are getting better at detecting it. When content is duplicated at the data level, AI systems struggle to interpret ranking signals, reducing the likelihood that any one version gets selected.

A Sourcing Architecture That Actually Works

So how do you break out of statistical monoculture without hiring a research team or doubling your production timeline? You build a sourcing architecture: a repeatable system for injecting non-replicable evidence into AI-assisted workflows.

We've tested four pillars that work for small teams (one to three people) without adding more than 45 minutes per article.

Pillar 1: Micro-Surveys With Your Existing Audience

You don't need a 2,000-respondent study to produce original data. A five-question survey sent to your email list, your LinkedIn followers, or your Slack community can generate a stat nobody else has.

Here's what that looks like in practice. Say you're writing about content marketing budgets. Instead of citing the same Content Marketing Institute figure everyone else uses, you send a three-question Typeform to 200 newsletter subscribers asking about their 2025 spend. You get 47 responses. That's enough to write "47% of the marketing managers we surveyed increased their content budget by at least 15% this year." No competitor has that number. No AI can generate it.

The time cost: 15 minutes to build the survey, 5 minutes to distribute it. Batch-send surveys monthly and you build a stockpile of proprietary data points for future articles.

Pillar 2: First-Party Customer Data (Anonymized)

Your product usage data, support tickets, and sales calls all contain evidence that's unique to your business. A SaaS company might say "We analyzed 1,200 customer onboarding sessions and found that teams completing setup in under 30 minutes retained at 2.3x the rate of those who took longer." That's a stat built from data no competitor can access.

The key constraint is anonymization and aggregation. You don't need to share individual customer details. Aggregate trends are enough. And they're wildly effective for building authority signals. CGT Marketing notes that by 2026, effective B2B content marketing focuses on building a digital footprint that commands authority, and proprietary data is the fastest path there.
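To make the aggregation step concrete, here is a minimal Python sketch of how per-customer records might be rolled up into cohort-level retention rates before anything is published. The function name, the cohort labels, the 30-minute cutoff borrowed from the onboarding example, and the minimum cohort size of 20 are all assumptions for illustration, and the sample records are made up.

```python
from collections import defaultdict

# Hypothetical threshold: suppress any cohort smaller than this so that
# no published figure can be traced back to a handful of customers.
MIN_COHORT_SIZE = 20


def retention_by_cohort(sessions, min_size=MIN_COHORT_SIZE):
    """Aggregate per-customer records into cohort-level retention rates.

    `sessions` is an iterable of (setup_minutes, retained) pairs, one per
    customer. Only cohort-level counts and rates are returned.
    """
    counts = defaultdict(lambda: [0, 0])  # cohort -> [retained, total]
    for setup_minutes, retained in sessions:
        cohort = "under_30_min" if setup_minutes < 30 else "30_min_or_more"
        counts[cohort][0] += int(retained)
        counts[cohort][1] += 1

    report = {}
    for cohort, (kept, total) in counts.items():
        if total < min_size:
            continue  # too few customers to publish safely
        report[cohort] = {"customers": total, "retention_rate": kept / total}
    return report


# Made-up illustration data, not real customer records.
sample = [(12, True)] * 18 + [(25, False)] * 6 + [(45, True)] * 9 + [(60, False)] * 15
report = retention_by_cohort(sample)
fast = report["under_30_min"]["retention_rate"]
slow = report["30_min_or_more"]["retention_rate"]
print(f"Fast-setup teams retained at {fast / slow:.1f}x the rate of slower teams")
```

Only the cohort counts and rates leave the function, so the sentence you publish never depends on any single customer's record. The right minimum cohort size depends on your own privacy requirements.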

Pillar 3: Partnership Research

This one's underused. Co-authoring a small study with a complementary (non-competing) company gives you shared distribution, shared credibility, and data neither of you could produce alone. An email marketing platform partnering with a CRM vendor to survey their combined user bases ends up with a larger dataset, and one that no third company can reproduce.

The time investment is higher up front (coordinating with a partner, aligning on methodology), but the output compounds. One partnership study can feed six to eight articles across both organizations.

Pillar 4: Structured Expert Quotes

This is the fastest win and the most overlooked. A 10-minute interview with an internal subject-matter expert, a customer, or an industry peer produces quotes that no AI can fabricate and no competitor can replicate.

The word "structured" matters here. Don't just ask "What do you think about X?" Ask specific, data-oriented questions: "What percentage of your pipeline came from organic content last quarter?" or "How many hours per week does your team spend on content production?" The answers become citable evidence.

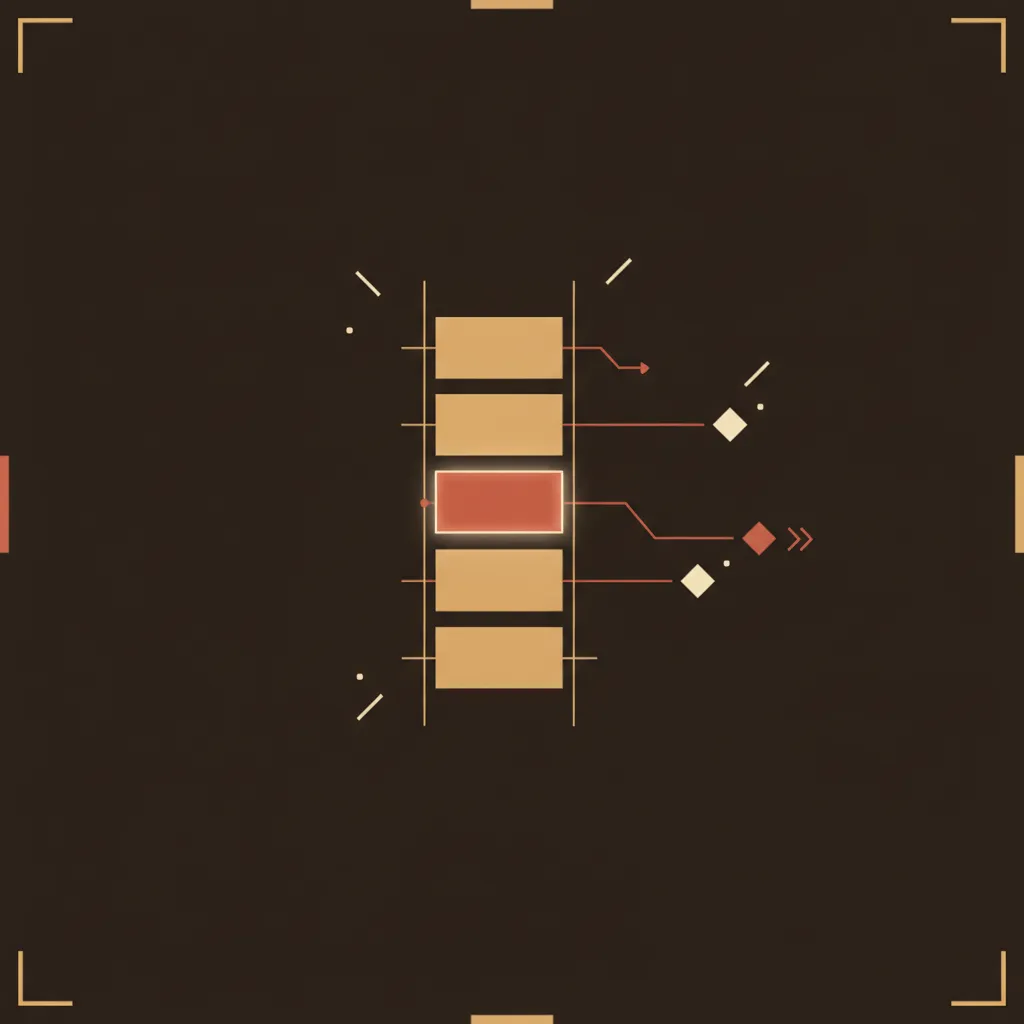

The 45-Minute Enhancement Workflow

We tested this with our own editorial process and landed on a time allocation that works consistently.

Minutes 1-10: Proprietary data scan. Before touching the draft, check your data stockpile. Do you have a survey result, a customer metric, or an expert quote that's relevant to this topic? If yes, flag where it fits. If no, fire off a quick survey or book a 10-minute expert call for next week's batch.

Minutes 11-30: Integration and rewrite. This is the bulk of the work. Replace generic stats in the AI draft with proprietary data points. Swap "according to a recent study" with "according to our survey of 83 SaaS marketing managers." Add context the AI can't: what surprised you about the data, where it contradicts conventional wisdom, what it doesn't tell you.

Minutes 31-40: Citation audit. Open the top five ranking articles for your target keyword. List every stat they cite. If your draft shares more than two sources with any of them, that's a red flag. Find a replacement. Presence AI's research shows that updating content with genuinely new statistics (not just changing "2025" to "2026") produced a 71% citation lift, versus only 12% for cosmetic timestamp changes.
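The overlap check in the citation audit is simple enough to script once you've listed the sources by hand. The sketch below is one possible way to do it in Python. The function names and source labels are hypothetical placeholders, and matching is only a case-insensitive string comparison, so the same study written two different ways will not be caught.

```python
MAX_SHARED = 2  # threshold from the audit step: more than two is a red flag


def normalize(source):
    """Lowercase and collapse whitespace so trivially different labels match."""
    return " ".join(source.lower().split())


def audit_citations(draft_sources, competitors, max_shared=MAX_SHARED):
    """Map each competitor to the sources it shares with the draft,
    keeping only competitors that exceed the overlap threshold."""
    draft = {normalize(s) for s in draft_sources}
    flagged = {}
    for name, sources in competitors.items():
        overlap = draft & {normalize(s) for s in sources}
        if len(overlap) > max_shared:
            flagged[name] = sorted(overlap)
    return flagged


# Placeholder labels for illustration only.
draft = [
    "Bain retention cost study",
    "Gartner forecast",
    "HubSpot  State of Marketing",
    "Our 2025 survey (n=83)",
]
competitors = {
    "competitor-a": [
        "bain retention cost study",
        "Gartner Forecast",
        "HubSpot State of Marketing",
    ],
    "competitor-b": ["Gartner forecast", "McKinsey pull quote"],
}

flagged = audit_citations(draft, competitors)
for name, shared in flagged.items():
    print(f"{name}: {len(shared)} shared sources -> {shared}")
```

Here only competitor-a is flagged, because it shares three sources with the draft. Competitor-b shares one and stays under the threshold.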

Minutes 41-45: Authority signals. Add a named author with credentials. Include methodology notes if you're citing original research ("We surveyed 83 SaaS marketing managers via Typeform in March 2025"). Add a visible update date.

That's it. Forty-five minutes layered onto an AI-assisted draft.

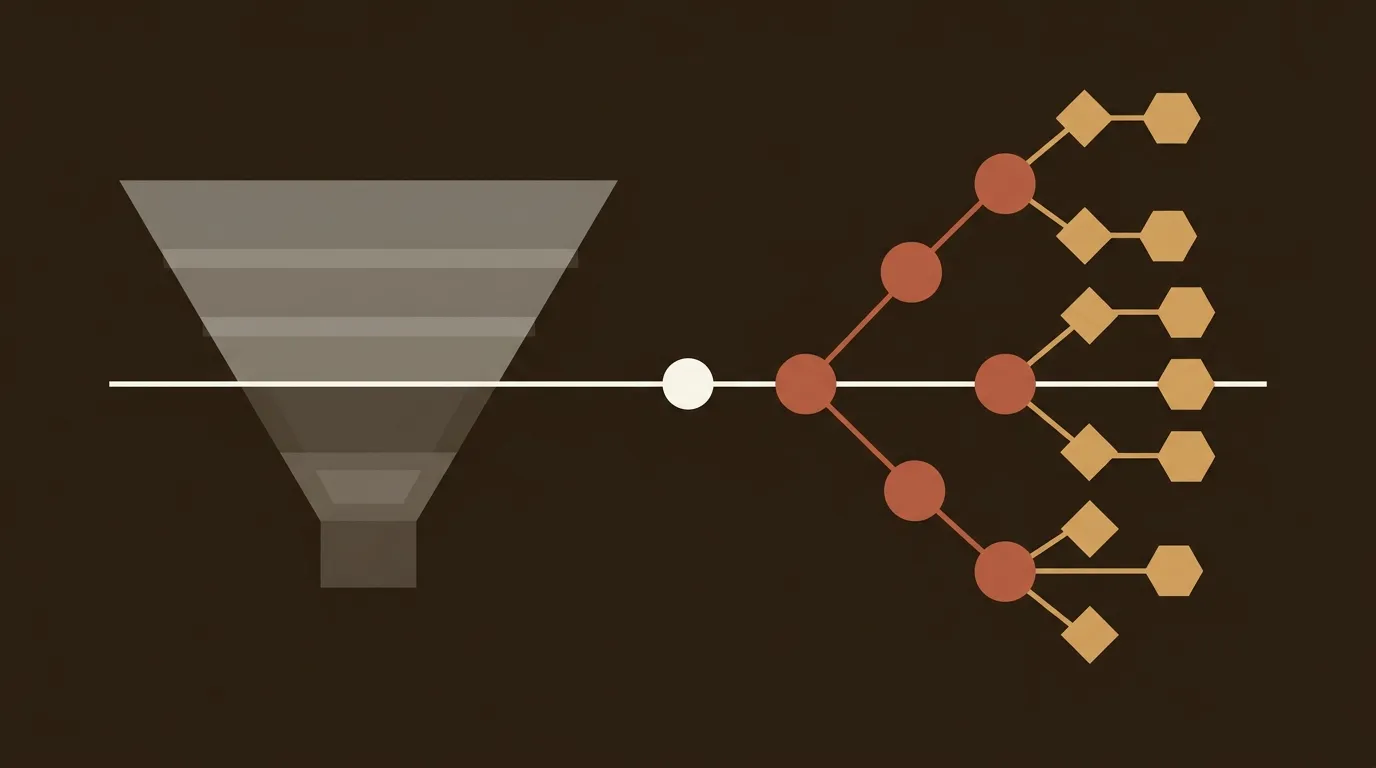

The Data Uniqueness Index: Score Before You Publish

We built a simple scoring checklist for our own editorial workflow. It's not perfect, and we're still refining it. But it catches the most common differentiation failures before they go live.

Evidence Diversity (0-30 points)

- Contains original survey data or first-party research: +10

- Includes customer case studies or direct customer quotes: +8

- References partnership or co-authored research: +7

- Contains structured expert quotes with specific data: +5

Source Originality (0-30 points)

- Includes proprietary statistics not found in top 10 competing articles: +15

- Uses a mix of academic, industry, and primary sources: +10

- Cites studies published within the last 12 months: +5

Structural Data Density (0-20 points)

- Contains at least one data table or comparison matrix: +10

- Includes structured lists with quantified examples: +10

Editorial Authority (0-20 points)

- Named author with visible credentials or bio: +5

- Clear methodology or source explanation for original data: +8

- Visible publication date and update history: +7

Score 80 or above and you have genuinely differentiated content. Between 60 and 79, you're competitive but not defensible. Below 60, you've produced commodity content that search engines and LLMs will treat as interchangeable.
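For teams that want to track scores in a spreadsheet export or a pre-publish script, the checklist translates directly into a small lookup table. This is a sketch in Python, not our production tooling. The point values and thresholds come from the checklist above, while the key names, function names, and verdict labels are hypothetical.

```python
# Point values copied from the checklist above; the key names are
# hypothetical labels chosen for this sketch.
CHECKLIST = {
    "evidence_diversity": {
        "original_survey_or_first_party_research": 10,
        "customer_case_studies_or_quotes": 8,
        "partnership_research": 7,
        "structured_expert_quotes": 5,
    },
    "source_originality": {
        "proprietary_stats_not_in_top_10": 15,
        "mixed_source_types": 10,
        "studies_within_12_months": 5,
    },
    "structural_data_density": {
        "data_table_or_matrix": 10,
        "quantified_structured_lists": 10,
    },
    "editorial_authority": {
        "named_author_with_credentials": 5,
        "methodology_explained": 8,
        "publication_and_update_dates": 7,
    },
}

ALL_CRITERIA = {name for section in CHECKLIST.values() for name in section}


def score_article(criteria_met):
    """Return points earned per section for the criteria an article meets."""
    met = set(criteria_met)
    unknown = met - ALL_CRITERIA
    if unknown:
        raise ValueError(f"Unknown criteria: {sorted(unknown)}")
    return {
        section: sum(pts for name, pts in items.items() if name in met)
        for section, items in CHECKLIST.items()
    }


def verdict(total):
    """Apply the 80 / 60 thresholds from the index."""
    if total >= 80:
        return "differentiated"
    if total >= 60:
        return "competitive but not defensible"
    return "commodity"


breakdown = score_article({
    "original_survey_or_first_party_research",
    "structured_expert_quotes",
    "proprietary_stats_not_in_top_10",
    "studies_within_12_months",
    "data_table_or_matrix",
    "named_author_with_credentials",
    "methodology_explained",
    "publication_and_update_dates",
})
total = sum(breakdown.values())
print(breakdown, total, verdict(total))
```

The example article earns 65 points: it has strong authority signals and a proprietary stat, but no customer quotes, partnership research, or mix of source types. The per-section breakdown shows where to add evidence to cross 80.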

We score every article before publishing. The ones that score below 60 go back for proprietary data injection. It takes discipline, but it's cheaper than watching your organic traffic flatline.

Where This Gets Genuinely Messy

We're not going to pretend this is clean or easy. Three tensions remain unresolved.

First, micro-surveys have small sample sizes. Forty-seven respondents isn't statistically rigorous, and an honest content team should say so. We include sample sizes in our citations and let readers judge. Some readers will dismiss n=47; others will value it over a recycled n=5,000 stat they've seen in twelve other articles. There's no clean answer here.
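One way to put a number on that uncertainty is the standard margin-of-error formula for a proportion at 95% confidence, z * sqrt(p(1 - p) / n) with z = 1.96. The Python sketch below applies it to the 47% figure from the 47-respondent example. It assumes a simple random sample, which a newsletter survey is not, so treat the result as a rough lower bound on the uncertainty.

```python
import math


def margin_of_error(p, n, z=1.96):
    """Normal-approximation margin of error for a proportion p observed in
    a sample of size n. z=1.96 corresponds to 95% confidence."""
    return z * math.sqrt(p * (1 - p) / n)


# The micro-survey example: 47% of 47 respondents.
small = margin_of_error(0.47, 47)
# The same proportion in a hypothetical n=5,000 industry study.
large = margin_of_error(0.47, 5000)

print(f"n=47:   47% plus or minus {small * 100:.1f} points")
print(f"n=5000: 47% plus or minus {large * 100:.1f} points")
```

For n=47 the margin comes out at roughly 14 percentage points, against about 1.4 points for n=5,000. Publishing that range next to the headline number is a compact way to let readers judge.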

Second, first-party data requires cross-team cooperation. Getting product usage data from engineering, or win/loss data from sales, involves internal politics that no workflow template can solve. Some organizations make this easy. Others make it nearly impossible. If your company falls in the second category, lean harder on expert quotes and micro-surveys while you work on the internal access problem.

Third, the 45-minute number is an average. Some articles need 20 minutes (you already have great proprietary data on the topic). Others need 90 (you need to run a new survey and wait for results). Batching your data collection (monthly surveys, quarterly rounds of expert interviews) helps smooth this out.

What Happens When You Get the Sourcing Right

Presence AI's citation research found that content with data tables achieved an average 67% citation rate. Comparison pages with three or more tables earned 25.7% more citations. These aren't vanity metrics; citation rate directly predicts visibility in AI-powered search results, which is where a growing share of B2B discovery happens.

And the Semrush study makes the ROI argument clearly: AI-assisted content with human enhancement (original research, expert insights, proprietary data) outperforms both pure AI content and pure human content in rankings. The hybrid wins. But the "human enhancement" part has to actually be enhancing, not just light copyediting.

The teams that will own organic visibility in 2026 aren't the ones publishing 200 posts a month. They're the ones publishing 30 posts a month where every single one contains evidence their competitors can't copy-paste.

That's the math. And unlike most content advice, it's something you can actually measure.

References

- CGT Marketing LLC, "B2B Content Marketing Services: The 2026 Strategy for Specialized Industries" -- https://cgtmarketingllc.com/b2b-content-marketing-services-the-2026-strategy-for-specialized-industries/

- ALM Corp, "AI-Generated Content and Google Search: What a 16-Month Experiment Shows About Rankings, Indexing, and Long-Term SEO" -- https://almcorp.com/blog/ai-generated-content-google-search-rankings/

- ALM Corp, "How Duplicate Content Destroys Your AI Search Visibility: The Definitive Guide" -- https://almcorp.com/blog/duplicate-content-ai-search-visibility-guide/

- Presence AI, "AI Citation Rates Research: What Content Gets Cited Most" -- https://presenceai.app/blog/ai-search-citation-rates-research-which-content-gets-cited

- Semrush, "Does AI content rank well in search? [Survey + Data study]" -- https://www.semrush.com/blog/does-ai-content-rank-in-search-data-study/