Most marketing teams we talk to have the same story: they added an AI writing tool, watched their draft output triple, and then spent the next quarter wondering why their published post count barely moved. The bottleneck didn't disappear. It migrated.

According to Sight AI's research on scaling content production, 58% of marketing professionals spend their time on reviews, approvals, and revisions rather than creating. That stat isn't about lazy teams. It's about a structural mismatch between how fast AI generates drafts and how slowly humans can validate, optimize, and publish them.

We've been tracking this pattern for over a year now. And the math tells a story that most "AI saves you 10x" pitches conveniently leave out.

The Generation Illusion

Here's what happened across the industry in 2024: AI tool adoption among marketers hit roughly 85%, and teams started producing content at rates they'd never imagined. But producing drafts isn't the same as publishing posts. And publishing posts isn't the same as publishing posts that rank.

The gap between "draft generated" and "post live" is where editorial labor actually lives. It's not glamorous. Nobody writes LinkedIn posts about the two hours they spent fact-checking an AI's hallucinated statistic or reconciling keyword targets with a draft that ignored half the brief.

Proofed's analysis of in-house editing costs puts it bluntly: some organizations initially thought they could replace a team of writers with one or two editors plus AI, saving salary overhead. What they found instead was that editing AI drafts can sometimes take nearly as long as writing from scratch when quality is low. One editor described spending hours fixing AI-generated articles that were "almost unreadable" in places.

So if an editor spends 3 hours rewriting an AI piece that took 5 minutes to generate, and a skilled writer would have produced a publishable version in 4 hours, your actual time savings are... 55 minutes. Minus the cost of the AI subscription.
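The arithmetic behind that figure, as a minimal sketch. The durations are the ones in the scenario above; the variable names are ours, purely for illustration.

```python
# Scenario from the paragraph above, all values in minutes.
AI_GENERATION_MIN = 5              # time for the AI to produce the draft
EDITOR_REWRITE_MIN = 3 * 60        # editor rewriting the AI draft
WRITER_FROM_SCRATCH_MIN = 4 * 60   # skilled writer producing a publishable version

# Total time on the AI-assisted path: generation plus the rewrite.
ai_assisted_total = AI_GENERATION_MIN + EDITOR_REWRITE_MIN

# Savings relative to writing from scratch, in minutes and as a percentage.
savings_min = WRITER_FROM_SCRATCH_MIN - ai_assisted_total
savings_pct = savings_min / WRITER_FROM_SCRATCH_MIN * 100

print(f"AI-assisted path: {ai_assisted_total} min")
print(f"Time saved: {savings_min} min ({savings_pct:.0f}% of the from-scratch time)")
```

In other words, the headline "5 minutes to generate" shrinks to a saving of a bit under a quarter of the from-scratch time once the rewrite is counted.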

Where the Hours Actually Go

We've broken down the editorial workflow into its component parts and tracked where AI-augmented teams report spending the most unaccounted time. The pattern is consistent across the companies we've spoken with, and it matches the data in Pilot Press's cost analysis.

Prompt iteration. The re-prompt loop, where you generate content, realize it's off-brand, adjust the prompt, regenerate, and tweak again, eats 15 to 30 minutes per cycle. Multiply that across 20 posts a month and you've burned an entire workday just on prompt management. Nobody tracks this time. It doesn't show up in any cost-per-article calculation.
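A quick sketch of that multiplication. It assumes exactly one re-prompt cycle per post, which is our simplification; the function and constant names are hypothetical.

```python
MIN_PER_CYCLE = (15, 30)   # low and high estimate from the text, in minutes
POSTS_PER_MONTH = 20
CYCLES_PER_POST = 1        # assumption: a single re-prompt cycle per post


def monthly_prompt_hours(minutes_per_cycle, posts, cycles_per_post=1):
    """Hours per month spent in the re-prompt loop."""
    return minutes_per_cycle * posts * cycles_per_post / 60


low_hours, high_hours = (
    monthly_prompt_hours(m, POSTS_PER_MONTH, CYCLES_PER_POST) for m in MIN_PER_CYCLE
)
print(f"Prompt iteration: {low_hours:.0f} to {high_hours:.0f} hours per month")
```

Under that assumption the range is 5 to 10 hours a month. Posts that need two or three cycles scale the number linearly.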

Research validation. AI confidently cites studies that don't exist, misattributes quotes, and confuses correlation with causation. AIContentfy's comparison of AI and traditional writing notes that AI-generated content without human review routinely misses factual precision, with search engines increasingly penalizing content that lacks verified sourcing. Someone has to check every claim. That someone is usually the same editor who's already behind on reviews.

Brand voice alignment. Getting an AI draft to sound like your brand takes work. Pilot Press estimates 2 to 4 hours per article for brand consistency, with revision and approval cycles at a $60/hour manager rate adding $120+ per piece. That's a hidden cost that transforms the economics of "cheap" AI content pretty fast.

SEO reconciliation. The draft comes out. It hits the word count. But it targeted the wrong intent, stuffed keywords unnaturally, or missed internal linking opportunities entirely. Someone has to go back through and fix it. If you have 100 AI-written articles ready to go, as Sight AI points out, do you have the resources to properly review and optimize each one?

CMS handoffs and publishing logistics. Formatting for WordPress. Adding meta descriptions. Compressing images. Scheduling social promotion. These tasks existed before AI, but they've become proportionally larger as draft production outpaces everything downstream.

The Coordination Tax Nobody Budgets For

This is where it gets structurally interesting.

Coordination overhead doesn't scale linearly. It follows the formula n(n-1)/2, where n is the number of people involved. Double your team from 4 to 8 people and your coordination complexity jumps from 6 connections to 28. That's not a metaphor; it's graph theory, and it applies to every Slack thread about who's reviewing what.
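For anyone who wants to check the numbers, here is a small sketch of the pairwise-connection count. The function name is ours; the cross-check simply enumerates every pair of people.

```python
from itertools import combinations


def coordination_links(n: int) -> int:
    """Number of distinct pairs among n people: n(n-1)/2."""
    return n * (n - 1) // 2


for team_size in (4, 8, 16):
    # Cross-check the formula by listing every pair explicitly.
    enumerated = len(list(combinations(range(team_size), 2)))
    assert enumerated == coordination_links(team_size)
    print(f"{team_size} people -> {coordination_links(team_size)} connections")
```

Doubling the team from 4 to 8 more than quadruples the connections, and doubling again to 16 takes it to 120.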

Sight AI's research found that coordination consumes 30 to 40% of total content team capacity, with 73% of content teams reporting inconsistency as their primary scaling challenge. A content manager can spend 20 hours per week just keeping everyone aligned on priorities and deadlines.

Think about that. Half a person's full-time job, gone to coordination. Not writing. Not editing. Not strategizing. Just making sure the right draft reaches the right reviewer at the right time with the right context attached.

And here's how AI adoption makes this worse: when you 3x your draft output without changing your editorial infrastructure, you haven't saved time. You've created a queue. Subject matter experts who review technical accuracy, legal teams who approve certain content types, executives who want sign-off on high-profile pieces: each becomes a bottleneck where content sits waiting.

We've seen teams where the average time from "AI draft complete" to "post published" exceeds two weeks. Not because anyone is slow. Because everyone is overloaded with review requests that didn't exist six months ago.
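To see why the queue forms, here is a toy simulation. Every number in it is hypothetical: we assume a review capacity of 10 posts a week and compare 10 drafts a week against 30, with reviewers working first in, first out.

```python
def simulate_backlog(drafts_per_week, reviews_per_week, weeks):
    """Return the review backlog at the end of each week (FIFO, nothing dropped)."""
    backlog = 0
    history = []
    for _ in range(weeks):
        backlog += drafts_per_week                   # new drafts arrive
        backlog -= min(backlog, reviews_per_week)    # reviewers clear what they can
        history.append(backlog)
    return history


REVIEW_CAPACITY = 10   # hypothetical: posts the review chain can clear per week
WEEKS = 4

before_ai = simulate_backlog(10, REVIEW_CAPACITY, WEEKS)
after_ai = simulate_backlog(30, REVIEW_CAPACITY, WEEKS)

# A draft joining the back of the queue waits roughly backlog / capacity weeks.
wait_weeks = after_ai[-1] / REVIEW_CAPACITY

print("Backlog before 3x:", before_ai)
print("Backlog after 3x: ", after_ai)
print(f"Approximate wait for a new draft after {WEEKS} weeks: {wait_weeks:.0f} weeks")
```

With these made-up inputs the backlog grows by 20 posts every week, so a two-week delay shows up after the very first week and keeps getting longer. Real teams throttle drafting or drop posts long before that, but the direction is the same.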

The Real Cost-Per-Article Math

Let's do the calculation that AI writing tool vendors don't put in their marketing.

A typical AI-assisted blog post for a B2B company, assuming you care about quality and SEO:

- AI tool subscription cost per post: ~$1-3 (varies by plan)

- Prompt iteration time: 20 min ($20 at $60/hr loaded cost)

- Research validation: 30 min ($30)

- Brand voice editing: 45 min ($45)

- SEO reconciliation: 20 min ($20)

- CMS formatting and publishing: 15 min ($15)

- Coordination overhead (reviews, approvals, Slack): 20 min ($20)

Total per post: roughly $150 in human labor (150 minutes at $60/hr), plus $1 to $3 for the AI subscription.

Compare that to a mid-tier freelance writer who delivers a publish-ready, researched, SEO-optimized post: $200 to $400, with no coordination overhead on your team beyond the initial brief and a final review.

The AI-assisted post is cheaper. But not by the 10x margin the pitch deck promised. And the hidden labor falls on your team, which means it competes with every other priority they have. Alibaba's analysis of AI subscriptions vs. freelance editors flags this exact tradeoff: companies often overlook training and onboarding expenses as they integrate AI writing tools, which impacts user engagement and productivity.
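Summing the line items in the list above, as a minimal sketch. The minutes, the $60/hr rate, and the freelance range come from this section; the dictionary keys and variable names are ours.

```python
HOURLY_RATE = 60  # loaded cost in dollars per hour, from the list above

LABOR_MINUTES = {
    "prompt_iteration": 20,
    "research_validation": 30,
    "brand_voice_editing": 45,
    "seo_reconciliation": 20,
    "cms_formatting_and_publishing": 15,
    "coordination_overhead": 20,
}
AI_SUBSCRIPTION_PER_POST = (1, 3)   # dollars, low and high
FREELANCE_PER_POST = (200, 400)     # dollars, low and high

labor_minutes = sum(LABOR_MINUTES.values())
labor_cost = labor_minutes / 60 * HOURLY_RATE
total_low = labor_cost + AI_SUBSCRIPTION_PER_POST[0]
total_high = labor_cost + AI_SUBSCRIPTION_PER_POST[1]

# How many times more the freelance post costs, at the narrowest and widest gap.
ratio_low = FREELANCE_PER_POST[0] / total_high
ratio_high = FREELANCE_PER_POST[1] / total_low

print(f"Human labor: {labor_minutes} min = ${labor_cost:.0f}")
print(f"AI-assisted total: ${total_low:.0f} to ${total_high:.0f}")
print(f"Freelance costs {ratio_low:.1f}x to {ratio_high:.1f}x as much")
```

On these figures the freelance post costs about 1.3x to 2.6x as much as the AI-assisted one. That is a real saving, and nowhere near 10x.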

Why Solving Generation Alone Stalls Automation

The pattern we keep seeing is teams that invested heavily in the "generation" layer of content (a better LLM, a fancier writing assistant, more templates) and then plateaued.

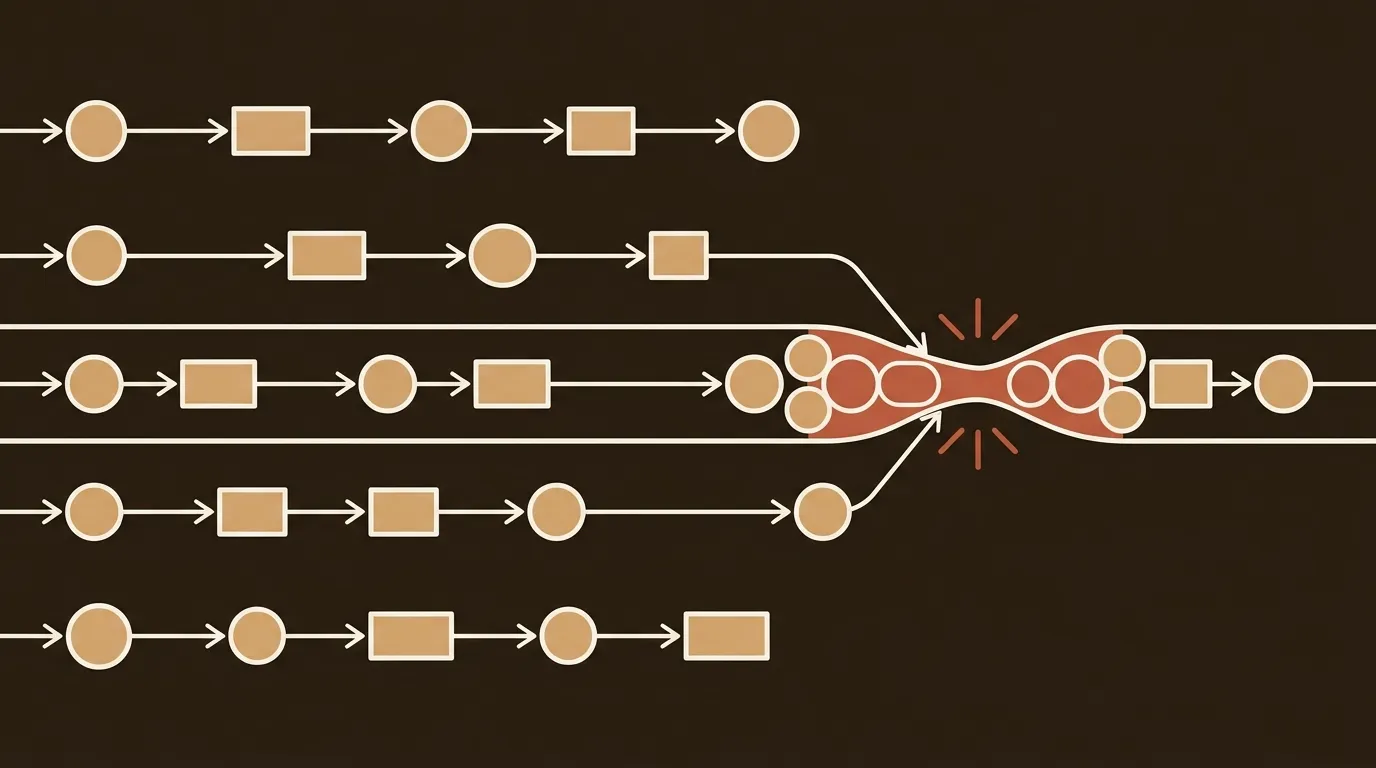

They plateaued because generation was never the bottleneck for most teams. The bottleneck was always the connective tissue: research flowing into briefs, briefs informing drafts, drafts getting validated against sources, validated drafts getting optimized for search, optimized posts getting formatted and scheduled.

Each handoff between these stages introduces delay, context loss, and coordination cost. A draft that arrives without its research sources attached means the editor has to go find them. A post optimized for SEO but not reviewed for brand voice means another round of edits. A finished post sitting in Google Docs instead of the CMS means someone has to manually move it over.

These are individually small frictions. Collectively, they're the reason AI-assisted teams publish 42% more content but struggle to prove pipeline impact. Volume went up. Quality assurance became inconsistent. Attribution got murky because nobody could track which posts went through proper review and which got rushed out.

What Actually Works: Workflow Architecture First

The teams capturing real productivity gains from AI aren't the ones with the fanciest writing tools. They're the ones who mapped their editorial workflow end-to-end before adding AI anywhere.

That means being explicit about every handoff: who does research, where does it go, who writes the brief, what triggers a draft, who reviews for accuracy vs. brand voice vs. SEO, and what "ready to publish" actually means.

Agentic workflows, where AI handles repeatable process steps while humans provide judgment at defined checkpoints, show the most promise here. Instead of re-briefing an assistant each time and stitching outputs together manually, teams define a workflow that coordinates steps, carries shared context, and delivers a consistent result. Every step runs against the same brief, brand rules, and quality standards, which reduces handoffs and cuts coordination overhead.
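To make the idea concrete, here is a deliberately small sketch of a workflow that carries shared context through each step and pauses at a human checkpoint. It is not any particular tool's API: `PostContext`, `Step`, `run_workflow`, and the placeholder steps are all hypothetical names we made up for illustration, and the "AI" steps are stubs.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PostContext:
    """Shared context every step reads and writes (fields are illustrative)."""
    brief: str
    brand_rules: List[str]
    draft: str = ""
    log: List[str] = field(default_factory=list)


@dataclass
class Step:
    name: str
    run: Callable[[PostContext], None]
    needs_human_approval: bool = False


def run_workflow(ctx, steps, approve):
    """Run steps in order; stop at the first checkpoint a human rejects."""
    for step in steps:
        step.run(ctx)
        ctx.log.append(f"ran:{step.name}")
        if step.needs_human_approval and not approve(step.name, ctx):
            ctx.log.append(f"rejected:{step.name}")
            return False
    return True


# Placeholder steps standing in for real generation and brand checks.
def write_draft(ctx):
    ctx.draft = f"Draft for: {ctx.brief}"


def apply_brand_rules(ctx):
    ctx.draft += f" [checked against {len(ctx.brand_rules)} brand rules]"


steps = [
    Step("draft", write_draft),
    Step("brand_check", apply_brand_rules, needs_human_approval=True),
]
ctx = PostContext(brief="AI editing costs", brand_rules=["no jargon", "second person"])

# The approve callback is where a human reviewer would plug in; here it always says yes.
published = run_workflow(ctx, steps, approve=lambda step_name, context: True)
print(published, ctx.log)
```

The design choice worth noticing is that the brief and brand rules live in one object that every step sees, so nothing has to be re-explained at a handoff, and the human only gets pulled in at steps explicitly marked as checkpoints.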

Sight AI's data suggests this approach can transform economics dramatically: 87.5% output increases with 7.5% cost increases when properly implemented. But "properly implemented" is doing a lot of heavy lifting in that sentence. It requires upfront investment in workflow design that most teams skip because they're eager to start generating.
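It helps to translate those two percentages into cost per post. The sketch below only rearranges the figures quoted above; the variable names are ours.

```python
OUTPUT_INCREASE = 0.875   # 87.5% more output, as quoted above
COST_INCREASE = 0.075     # 7.5% more cost, as quoted above

# Cost per post relative to the starting point (1.0 means unchanged).
relative_cost_per_post = (1 + COST_INCREASE) / (1 + OUTPUT_INCREASE)
reduction_pct = (1 - relative_cost_per_post) * 100

print(f"Cost per post falls to {relative_cost_per_post:.2f}x of the baseline")
print(f"That is a reduction of about {reduction_pct:.0f}%")
```

So the claim amounts to cost per post dropping by a little over 40%, assuming both percentages are measured against the same baseline.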

A Tangent Worth Including

There's a term gaining traction for what happens when teams skip the workflow step: "workslop." Content that appears polished but lacks real substance, effectively offloading cognitive labor onto whoever has to review it next. We think the term is a bit harsh, but the phenomenon is real. And it's a direct consequence of optimizing for generation speed without building the infrastructure to catch quality issues before they compound.

The Audit You Should Actually Run

If you manage a content operation with any AI tooling, here's the exercise we'd recommend: track every minute spent on a single blog post from the moment someone decides to write it until it goes live. Not the AI generation time. Everything else.

Break it into categories: briefing, prompt work, research, fact-checking, editing for voice, SEO optimization, formatting, approvals, publishing. Add the coordination time: Slack messages, status update meetings, "hey did you review that draft" pings.

Most teams who do this honestly find that generation accounts for less than 15% of total time-to-publish. The other 85% is the orchestration layer. And that's exactly the layer most AI writing tools ignore completely.
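If you want a starting point for the tally, here is a minimal sketch. The time log is entirely made up to show the mechanics; swap in your own minutes. The category names follow the list above, and `audit_shares` is a hypothetical helper.

```python
# Hypothetical time log for one post, in minutes. Replace with your own tracking.
TIME_LOG_MIN = {
    "generation": 10,
    "briefing": 20,
    "prompt_work": 20,
    "research": 30,
    "fact_checking": 30,
    "editing_for_voice": 45,
    "seo_optimization": 20,
    "formatting": 15,
    "approvals": 20,
    "publishing": 10,
    "coordination": 20,
}


def audit_shares(log):
    """Return each category's share of total time, as a percentage."""
    total = sum(log.values())
    return {category: minutes / total * 100 for category, minutes in log.items()}


total_minutes = sum(TIME_LOG_MIN.values())
shares = audit_shares(TIME_LOG_MIN)

print(f"Total time-to-publish: {total_minutes} min")
for category, pct in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"{category:<20} {pct:5.1f}%")
```

In this invented log, generation is about 4% of the total. Your numbers will differ, which is the point of running the audit.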

The question worth sitting with: are you investing in making the 15% faster, or the 85% better?

References

- Proofed, "The Hidden Cost of AI Content" - https://proofed.com/knowledge-hub/the-hidden-costs-of-in-house-editing/

- Pilot Press, "Hidden costs: freelance writers vs AI content solutions" - https://pilotpress.ai/blog/hidden-costs-freelance-writers-vs-ai-content-solutions

- AIContentfy, "AI Writing vs Traditional Writing: Pros and Cons" - https://aicontentfy.com/en/blog/ai-writing-vs-traditional-writing-pros-and-cons

- Alibaba, "Is Buying An AI-writing Assistant Subscription Smarter Than Hiring A Freelance Editor For Blog Content" - https://www.alibaba.com/product-insights/is-buying-an-ai-writing-assistant-subscription-smarter-than-hiring-a-freelance-editor-for-blog-content.html

- Sight AI, "Scaling Content Production Challenges: Fix Your Engine" - https://www.trysight.ai/blog/scaling-content-production-challenges