A marketing manager making $75,000 a year costs about $36 an hour. If that person spends 45 minutes reviewing every blog post before it goes live, the quality gate alone runs $27 per article. Publish 30 posts a month and you're looking at $810 just for someone to read drafts and say "yes" or "no."

That $810 doesn't show up in most content budgets. It should.

The AI-versus-human writing debate has consumed B2B content marketing for three years now. But the more expensive question, the one that actually determines whether your content investment compounds or decays, is simpler: do you have a quality gate, what does it cost, and is it catching the right problems?

We benchmarked four QA models against their real economics. The numbers tell a story that surprised us.

Why Quality Gates Matter More Than Writing Quality

Most B2B teams obsess over who (or what) writes the first draft. That's the wrong variable to optimize. A mediocre draft that passes through a well-designed quality gate will outperform a brilliant draft that gets published without review.

The reason is compounding failure modes. A post with a factual error doesn't just embarrass your brand once; it erodes domain authority, accelerates content decay, and can tank rankings for adjacent pages through internal linking. According to Beast.bi, AI-generated content still requires human review, and editing an AI-assisted draft typically takes 20-40% of the time it would take to write the piece from scratch. Skipping review doesn't save that time. It shifts the cost downstream.

And here's what the data shows about ungated content: 77% of organizations report inconsistent content, meaning more than three-quarters of companies are publishing material that doesn't meet their own standards. Governance processes aren't a luxury. They're the difference between a content program that builds equity and one that generates noise.

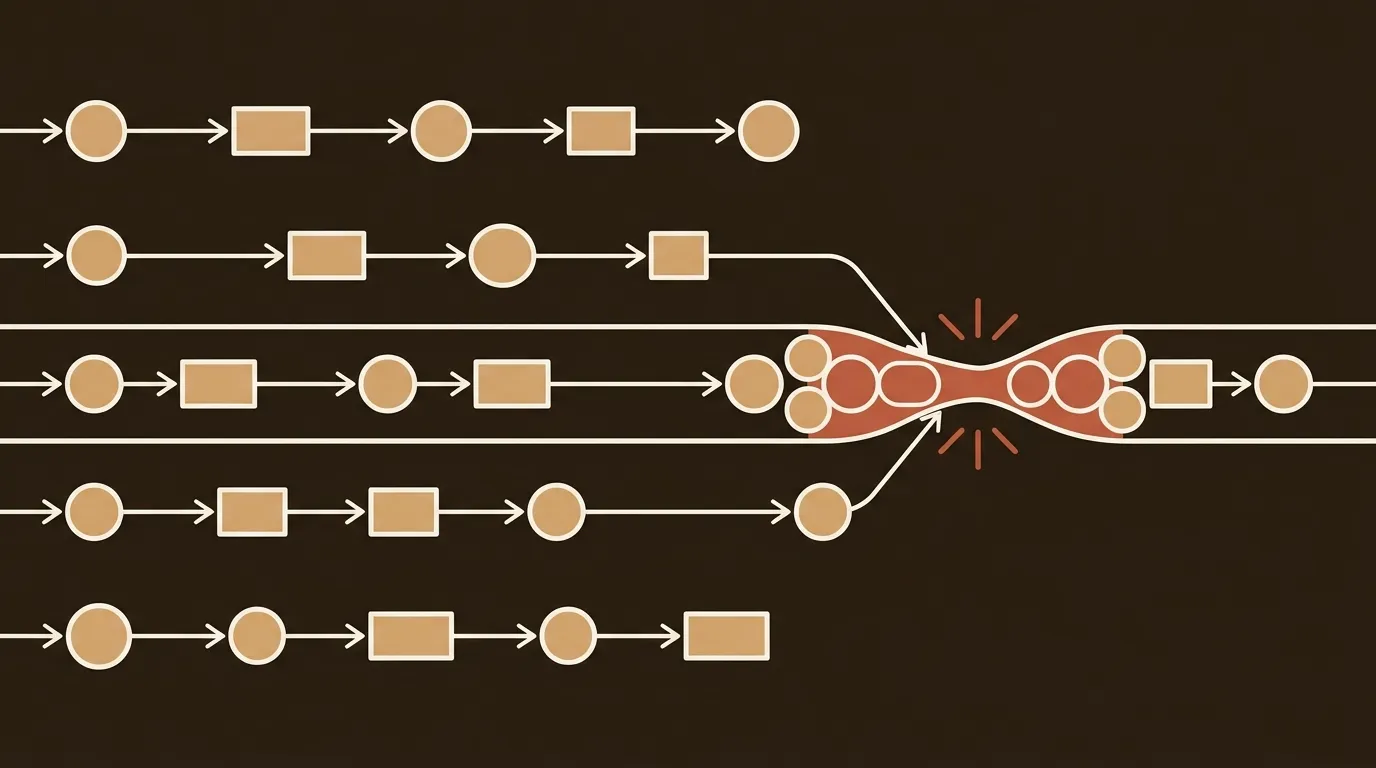

The Four Models, Priced Out

We've seen B2B content teams run quality assurance in four distinct ways. Each has a different cost structure, a different failure mode, and a different ceiling on output volume.

Model 1: Pure Human Review

The traditional approach. A strategist, subject matter expert, or editor reads every draft before publication. They check for accuracy, brand voice, logical flow, and SEO alignment.

Cost per quality gate: $18 to $36 per article.

That range assumes a marketing manager or senior editor at $36/hour spending 30 to 60 minutes per piece. For a 30-article monthly cadence, you're looking at $540 to $1,080 in labor costs for QA alone, on top of whatever the drafts cost to write.
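
The arithmetic behind that range is easy to check. Here is a minimal Python sketch, assuming a 2,080-hour work year (52 weeks at 40 hours) to turn the $75,000 salary into an hourly rate. The function names are ours, for illustration only.

```python
def hourly_rate(annual_salary: float, hours_per_year: int = 2080) -> float:
    """Convert a salary to an hourly rate. 2,080 hours/year is an assumption."""
    return annual_salary / hours_per_year


def review_cost(rate: float, minutes_per_article: float, articles: int = 1) -> float:
    """Labor cost of reviewing `articles` drafts at `minutes_per_article` each."""
    return rate * (minutes_per_article / 60) * articles


# Round to the whole-dollar $36/hour figure used throughout this article.
rate = round(hourly_rate(75_000))

for minutes in (30, 60):
    per_article = review_cost(rate, minutes)
    monthly = review_cost(rate, minutes, articles=30)
    print(f"{minutes} min: ${per_article:.2f} per article, ${monthly:,.2f} per month")
```

Swap in your own reviewer's salary and review time; the per-article figure scales linearly with both.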

The upside is real. Human reviewers catch nuance that automated systems miss: a claim that's technically accurate but misleading in context, a tone shift that doesn't match your brand, an analogy that falls flat for your specific audience. But this model breaks at scale. Past 20-25 articles per month, review quality degrades because the reviewer is fatigued. We've seen it repeatedly.

Model 2: AI-Scored Drafts

Automated QA systems define quality through rubrics, rules, or trained models. They ingest content, run it through an analysis engine, and return structured scores, flags, and recommendations. No human touches the draft unless it fails a threshold.

Cost per quality gate: $2 to $10 per article.

Platform costs range from $60 to $299/month. Amortized across the 30-article cadence used throughout this piece, that works out to roughly $2 to $10 per article; at 20 posts a month it is closer to $3 to $15. Processing time is seconds to minutes.

The trade-off is calibration. AI scoring systems need significant upfront tuning. Out of the box, most tools score for generic "quality" signals like readability grade, keyword density, and structural completeness. These correlate loosely with ranking performance but miss domain-specific issues entirely. A post about regulatory compliance in fintech has different quality requirements than a post about project management best practices. If you don't customize the rubric, you get consistent mediocrity instead of inconsistent quality. Consistent mediocrity is arguably worse because nobody flags it.

Model 3: Hybrid Gate with Editor Sign-Off

This is where most teams land after trying Models 1 and 2 in isolation. AI pre-screens every draft against defined criteria. Content that passes gets a light human review (5-10 minutes). Content that fails gets regenerated or flagged for deeper editing.

Cost per quality gate: $5 to $15 per article.

The math combines platform costs ($100-$400/month) with reduced human labor. According to TrySight's pricing analysis, higher-priced tools with more sophisticated AI often deliver better per-article economics because they produce drafts requiring less revision. A $499/month platform generating publication-ready content can cost less per article than a $99/month tool whose output needs extensive rework.

The key design decision in this model is where human judgment intervenes. A practical QA process includes three checkpoints: a subject matter expert reviews technical accuracy, an editor checks brand voice and readability, and a final reviewer confirms SEO requirements including internal linking and schema markup. Not every article needs all three. But your highest-stakes content (product pages, thought leadership, anything touching compliance) should get the full treatment.
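
One way to make that routing decision explicit is to write it down as a rule. The sketch below is illustrative only: the content-type labels, the 0-100 score, and the threshold of 80 are all assumptions, not values from any particular tool.

```python
from dataclasses import dataclass
from typing import List

# Content types that get all three checkpoints (hypothetical labels).
HIGH_STAKES = {"product_page", "thought_leadership", "compliance"}


@dataclass
class Draft:
    title: str
    content_type: str
    ai_score: float  # 0-100 score from whatever scoring tool you use


def route(draft: Draft, pass_threshold: float = 80.0) -> List[str]:
    """Return the review steps a draft should go through."""
    if draft.ai_score < pass_threshold:
        # Failed the AI pre-screen: regenerate or send for deeper editing.
        return ["regenerate_or_deep_edit"]
    if draft.content_type in HIGH_STAKES:
        # Highest-stakes content gets the full three-checkpoint treatment.
        return ["sme_accuracy_review", "editor_voice_review", "seo_final_review"]
    # Passed the pre-screen and low stakes: a 5-10 minute editor check.
    return ["light_editor_review"]


print(route(Draft("What is churn?", "glossary", ai_score=91)))
print(route(Draft("Q3 industry outlook", "thought_leadership", ai_score=88)))
```

Writing the rule as code forces the team to agree on which content counts as high-stakes before a deadline forces the question.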

Model 4: Fully Automated Publishing

No human in the loop. Content is generated, scored, and published programmatically. The system connects directly to your CMS and pushes content live without manual transfers.

Cost per quality gate: $3 to $8 per article.

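Platform costs run $100 to $600/month, and there's no labor component at all. The per-article range above depends on volume: a $100/month plan lands near $3 per article at 30 posts, while a $600/month plan needs roughly 75 posts a month to get down to $8.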

This model scares people, and honestly, it should scare some of them. Most AI tools use "soft scoring" that flags potential issues but still lets questionable content through. Hard-stop quality gates, ones that block substandard content from reaching your site entirely, are different. They refuse to publish a piece that fails defined criteria and either regenerate it or route it to a human. The distinction matters enormously. Soft scoring with auto-publish is a liability. Hard stops with auto-regeneration can actually produce more consistent output than a tired human reviewer at 4pm on a Friday.
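
The difference between the two gate types is easiest to see side by side. This is a minimal sketch, not any vendor's implementation: `generate` and `score` are hypothetical callables standing in for your drafting and scoring tools, and the three-attempt cap is an assumption.

```python
from typing import Callable, Tuple


def soft_gate(score: float, threshold: float) -> Tuple[bool, bool]:
    """Soft scoring: always publishes, only records whether the draft was flagged."""
    flagged = score < threshold
    return True, flagged


def hard_stop_gate(
    generate: Callable[[], str],
    score: Callable[[str], float],
    threshold: float,
    max_attempts: int = 3,
) -> Tuple[str, str]:
    """Hard stop: publish only a passing draft; otherwise regenerate, then escalate."""
    draft = ""
    for _ in range(max_attempts):
        draft = generate()
        if score(draft) >= threshold:
            return "publish", draft
    # Every attempt failed the bar: block publication and hand off to a person.
    return "route_to_human", draft


# Toy stand-ins: drafts come from a fixed list, and the "score" is just word count.
drafts = iter(["too short", "still thin", "a draft with enough substance to pass the bar"])
decision, text = hard_stop_gate(lambda: next(drafts), lambda d: len(d.split()), threshold=5)
print(decision)
```

Note that `soft_gate` returns `True` for publication no matter what the score is. That is the liability: the flag exists, but nothing acts on it.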

The Hidden Line Item Nobody Budgets For

Here's the number that gets left off spreadsheets: the cost of not having a quality gate.

Content decay is real and measurable. A post that ranks on page one for six months and then drops to page three didn't just lose traffic. It lost the compounding value of every internal link pointing to it, every backlink it earned, and every lead it was generating. Recovering a decayed post costs $150-$300 in editorial time (re-research, rewrite, re-optimize, republish). If 20% of your content library decays annually without intervention, and you have 200 posts, that's 40 posts needing recovery at $200 each. $8,000 a year in remediation.

A quality gate that costs $10 per article and prevents even a portion of that decay pays for itself. The math isn't complicated.
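
To see how large that portion has to be, here is a rough Python sketch using the figures above. The 30-articles-per-month volume is borrowed from the cadence used elsewhere in this piece, and treating every decayed post as something a gate could have prevented is a simplifying assumption.

```python
def annual_remediation_cost(library_size: int, decay_rate: float,
                            cost_per_recovery: float) -> float:
    """Yearly cost of recovering the share of the library that decays."""
    return library_size * decay_rate * cost_per_recovery


def annual_gate_cost(cost_per_gate: float, articles_per_month: int) -> float:
    """Yearly cost of running every new article through the quality gate."""
    return cost_per_gate * articles_per_month * 12


remediation = annual_remediation_cost(library_size=200, decay_rate=0.20,
                                      cost_per_recovery=200)
gate = annual_gate_cost(cost_per_gate=10, articles_per_month=30)

# Share of decay the gate must prevent before it pays for itself.
break_even_share = gate / remediation

print(f"Remediation: ${remediation:,.0f}/year")
print(f"Gate:        ${gate:,.0f}/year")
print(f"Break-even:  prevent {break_even_share:.0%} of decay")
```

Under these inputs the gate costs $3,600 a year against $8,000 in remediation, so it breaks even once it prevents about 45% of that decay. A larger library or a higher recovery cost lowers that bar.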

So track it. Monitor time-to-index in Google Search Console. Watch ranking velocity for new posts versus older ones. If newer content indexes slower, you may have crawl budget issues, but you may also have a quality problem that's teaching Google your domain publishes unreliable content.

Where Human Judgment Actually Pays for Itself

Not everywhere. That's the honest answer.

For high-volume informational content (glossary pages, "what is X" posts, comparison tables), fully automated or AI-scored gates perform well enough. The content is formulaic by nature. The quality bar is "accurate and complete," and AI systems are genuinely good at verifying both.

Human judgment earns its premium in three specific scenarios.

Original research and data interpretation. AI can summarize data. It cannot determine whether a dataset supports the conclusion you're drawing or whether the methodology is sound. If your content strategy includes original research (and it should, since original data generates backlinks at 2-3x the rate of derivative content), a human must verify the analysis.

Brand-critical thought leadership. Your CEO's quarterly industry perspective piece is not the place to save $25 on a review cycle. The reputational cost of a factual error or a tone-deaf take exceeds the QA cost by orders of magnitude.

Regulated industry content. Financial services, healthcare, legal. If a wrong claim creates liability, the quality gate needs human expertise. Full stop.

For everything else, a well-calibrated AI gate with hard stops reaches roughly 90% of human accuracy at 10-20% of the cost. That's not a claim we make lightly. We've tested it across thousands of articles.

Building Your Cost-Per-Quality-Gate Number

Here's the formula. It's simple enough to run in a spreadsheet.

Monthly QA cost = (Tool/platform subscription) + (Hourly labor rate × review hours per article × articles reviewed by humans)

Cost per quality gate = Monthly QA cost ÷ Total articles published

Example for a hybrid model publishing 30 articles per month: Platform at $299/month. Editor reviews 10 high-priority articles at $36/hour for 15 minutes each ($90 labor). Total monthly QA: $389. Cost per quality gate: $12.97 per article.
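
If you'd rather script it than build a spreadsheet, the two formulas translate directly. This is a minimal sketch; the function and parameter names are ours.

```python
def monthly_qa_cost(subscription: float, hourly_rate: float,
                    review_hours_per_article: float,
                    human_reviewed_articles: int) -> float:
    """Tool subscription plus human review labor for the month."""
    labor = hourly_rate * review_hours_per_article * human_reviewed_articles
    return subscription + labor


def cost_per_quality_gate(monthly_cost: float, articles_published: int) -> float:
    """Spread the monthly QA cost across every article published."""
    return monthly_cost / articles_published


# The hybrid example: $299 platform, 10 articles reviewed for 15 minutes at $36/hour.
monthly = monthly_qa_cost(subscription=299, hourly_rate=36,
                          review_hours_per_article=0.25,
                          human_reviewed_articles=10)
per_gate = cost_per_quality_gate(monthly, articles_published=30)

print(f"Monthly QA cost: ${monthly:.2f}")
print(f"Cost per quality gate: ${per_gate:.2f}")
```

Setting `subscription=0` and `human_reviewed_articles` to your full volume reproduces the pure human review model; setting the labor inputs to zero gives the fully automated one.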

Compare that to your cost per article. If your total content inputs run $2,449 monthly for 30 articles, that's about $82 per article. Adding $13 for a quality gate represents a 16% increase in cost per article. But if that gate prevents even two posts per month from decaying prematurely (saving $400 in remediation), it's net positive within the first month.
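
The break-even check can be sketched the same way. The $200 remediation cost per post is the midpoint figure used earlier in this article; the function names are illustrative.

```python
import math


def gate_share_of_article_cost(gate_cost: float, article_cost: float) -> float:
    """Quality gate cost as a fraction of the existing cost per article."""
    return gate_cost / article_cost


def monthly_net_benefit(posts_saved: int, remediation_cost_per_post: float,
                        monthly_qa_cost: float) -> float:
    """Remediation avoided minus what the gate costs to run for the month."""
    return posts_saved * remediation_cost_per_post - monthly_qa_cost


def break_even_posts(monthly_qa_cost: float, remediation_cost_per_post: float) -> int:
    """Smallest whole number of saved posts that covers the monthly QA cost."""
    return math.ceil(monthly_qa_cost / remediation_cost_per_post)


share = gate_share_of_article_cost(gate_cost=13, article_cost=82)
net = monthly_net_benefit(posts_saved=2, remediation_cost_per_post=200,
                          monthly_qa_cost=389)

print(f"Gate adds {share:.0%} to cost per article")
print(f"Net benefit with 2 posts saved: ${net:.0f}")
print(f"Posts to save per month to break even: {break_even_posts(389, 200)}")
```

With these inputs the margin is thin: two saved posts clear the $389 monthly QA cost by $11, and one saved post does not cover it.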

Plug these numbers into your actual budget. Your team size, hourly rates, and content volume will shift the ratios. But the framework holds.

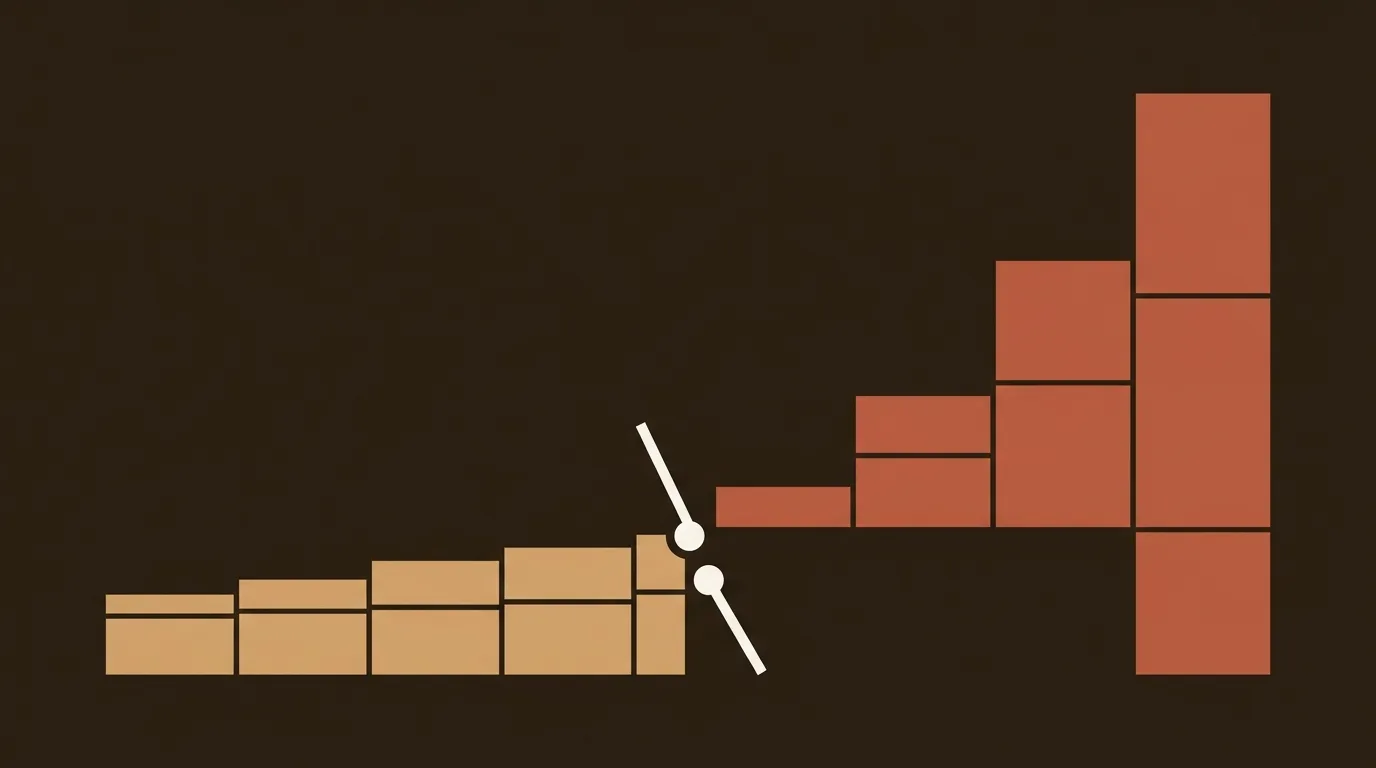

What We Expect to Change by Late 2026

AI scoring accuracy is improving quarterly. The gap between Models 2 and 3 (pure AI scoring versus hybrid) is narrowing. We expect that by Q4 2026, AI-only quality gates will match hybrid model accuracy for most non-regulated B2B content categories.

That doesn't mean human review disappears. It means human review migrates upward in the value chain, from "did this draft hit our quality bar" to "is this the right topic with the right angle for this audience at this moment." Strategic judgment, not copy editing. The quality gate question will evolve, but it won't go away. Content teams that start measuring their cost-per-quality-gate now will have the data to make that transition cleanly. Teams that don't will keep paying for quality problems they can't see until they show up in their traffic dashboards.

References

- 8 Top AI-Powered Automated Quality Assurance in 2026 - Crescendo.ai

- Why AI Content Automation Needs Human Oversight in 2026 - Beast.bi

- How to Humanise AI Content for B2B Marketing in 2026 - WhiteHat SEO

- Automated Publishing Software Pricing Guide 2026 - TrySight.ai

- AI Content Generation At Scale: Complete 2026 Guide - TrySight.ai