Ninety-one percent of B2B organizations run content marketing programs. Eighty-five percent have adopted AI tools. Yet only about one in three rate their content efforts as highly effective. That gap hasn't budged in two years. Not despite the tooling revolution, but alongside it.

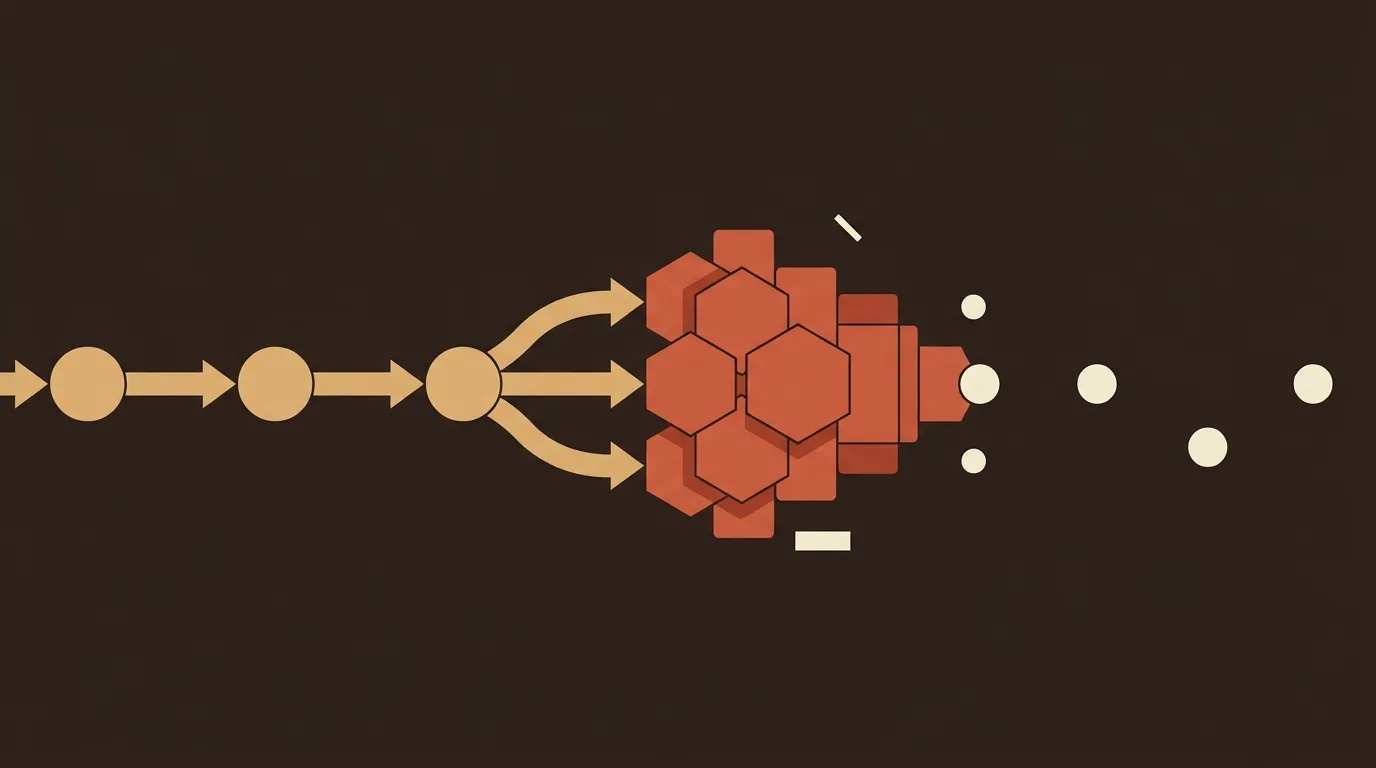

We've spent a lot of time trying to understand why. The obvious answers, writing quality and publishing volume, don't hold up under scrutiny. AI-generated drafts are decent enough to ship for most use cases, and volume has never been cheaper to produce. The real answer is less glamorous: most B2B teams bolted AI generation onto manual editorial infrastructure that was already breaking. Speed increased. The bottlenecks didn't disappear. They just compressed into a shorter timeline.

This piece maps where the 67% of underperforming programs actually lose their returns.

The adoption curve flattened, but effectiveness didn't follow

The raw numbers paint a picture of near-universal adoption with wildly uneven results. According to G2's 2025 AI in B2B Marketing report, 54% of B2B marketing teams take an ad hoc approach to AI, experimenting without applying it broadly. Only 19% have integrated AI into daily workflows. And 54% of marketers say they feel overwhelmed by the prospect of doing so.

That last number deserves attention. Overwhelm isn't a skill gap. It's a signal that the integration surface area is too large for the way these teams are structured. A two-person marketing team doesn't need a better ChatGPT prompt. They need their entire content pipeline to work differently.

The distinction matters because it reframes the problem. This isn't "AI isn't good enough." It's "we adopted the fastest part of the pipeline and left everything else untouched."

Where 67% of programs bleed out

We've identified five structural failure points that consistently separate the top third from everyone else. They're not exotic. They're mundane. That's why they persist.

Topic validation is guesswork at most companies

Most B2B content calendars get built in a brainstorming session, a shared doc, or a Slack thread. Someone has a hunch. Someone else saw a competitor publish on a topic. A sales rep mentions a customer question. The editorial calendar fills up with topics that feel reasonable but have no validation layer.

AI can help here, but not in the way most teams use it. Content gap analysis powered by AI can scan forums, support tickets, and search patterns to find information gaps with demonstrated demand but inadequate supply. That's a fundamentally different input than "what should we write about this month?" Yet most teams skip this step entirely, jumping straight from idea to draft.

The downstream cost is invisible but real. You publish a well-written article on a topic nobody is searching for. It gets 40 organic sessions a month. The next article does the same. After six months, you have 24 posts and a traffic graph that looks like a flatline. The content wasn't bad. The topic selection was never validated.
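A validation layer does not have to be elaborate. The sketch below filters candidate topics by demand and supply before anything reaches the calendar. Everything in it is an assumption made for illustration: the `TopicSignal` fields, the weighting of support mentions, the thresholds, and the sample topics are hypothetical and would need tuning against your own data.

```python
from dataclasses import dataclass


@dataclass
class TopicSignal:
    topic: str
    monthly_searches: int        # demand: search volume from your keyword tool
    support_mentions: int        # demand: tickets or forum threads asking about it
    strong_competing_pages: int  # supply: pages that already answer it well


def is_validated(t: TopicSignal, min_demand: int = 100, max_supply: int = 3) -> bool:
    """Keep topics with demonstrated demand and inadequate supply."""
    # Hypothetical weighting: a support mention counts as 10 searches.
    demand = t.monthly_searches + 10 * t.support_mentions
    return demand >= min_demand and t.strong_competing_pages <= max_supply


# Made-up candidates, for illustration only.
candidates = [
    TopicSignal("soc 2 checklist for startups", 900, 12, 2),
    TopicSignal("our company values", 10, 0, 0),
    TopicSignal("what is crm", 5000, 3, 40),
]

validated = [t.topic for t in candidates if is_validated(t)]
print(validated)
```

The point is not the specific formula but that a topic has to pass some explicit check before it becomes a draft.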

SEO integration happens after the fact (or not at all)

Here's a pattern we see constantly: a team uses AI to draft an article, then hands it to someone for "SEO optimization." That person adds a few keywords, adjusts a meta description, and calls it done.

This gets the order of operations backwards. SEO should inform the brief before a single word is written. Target keyword, search intent, competing content analysis, internal linking opportunities, and content structure should all be inputs to the writing process, not afterthoughts.
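One way to enforce that order is to treat the brief as a required input and refuse to draft until it is complete. This is a minimal sketch, not a prescribed schema: the `ContentBrief` class, its field names, and the `ready_to_draft` gate are hypothetical and simply mirror the inputs listed above.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    target_keyword: str = ""
    search_intent: str = ""  # e.g. "informational" or "commercial"
    competing_urls: list[str] = field(default_factory=list)
    internal_links: list[str] = field(default_factory=list)
    outline: list[str] = field(default_factory=list)

    def missing_inputs(self) -> list[str]:
        """Names of the fields that are still empty."""
        return [name for name, value in vars(self).items() if not value]


def ready_to_draft(brief: ContentBrief) -> bool:
    """Drafting starts only when every SEO input is filled in."""
    return not brief.missing_inputs()


brief = ContentBrief(target_keyword="content gap analysis",
                     search_intent="informational")
print(ready_to_draft(brief), brief.missing_inputs())
```

With a gate like this, "SEO optimization" stops being a handoff after the draft and becomes a precondition for generating one.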

The gap is getting wider, too. As AI-powered search engines increasingly determine how audiences discover content, tracking whether your content appears in LLM-generated answers is becoming as important as traditional SERP rankings. This measurement category didn't exist two years ago. Most analytics tools don't cover it natively. And most content teams aren't even thinking about it.

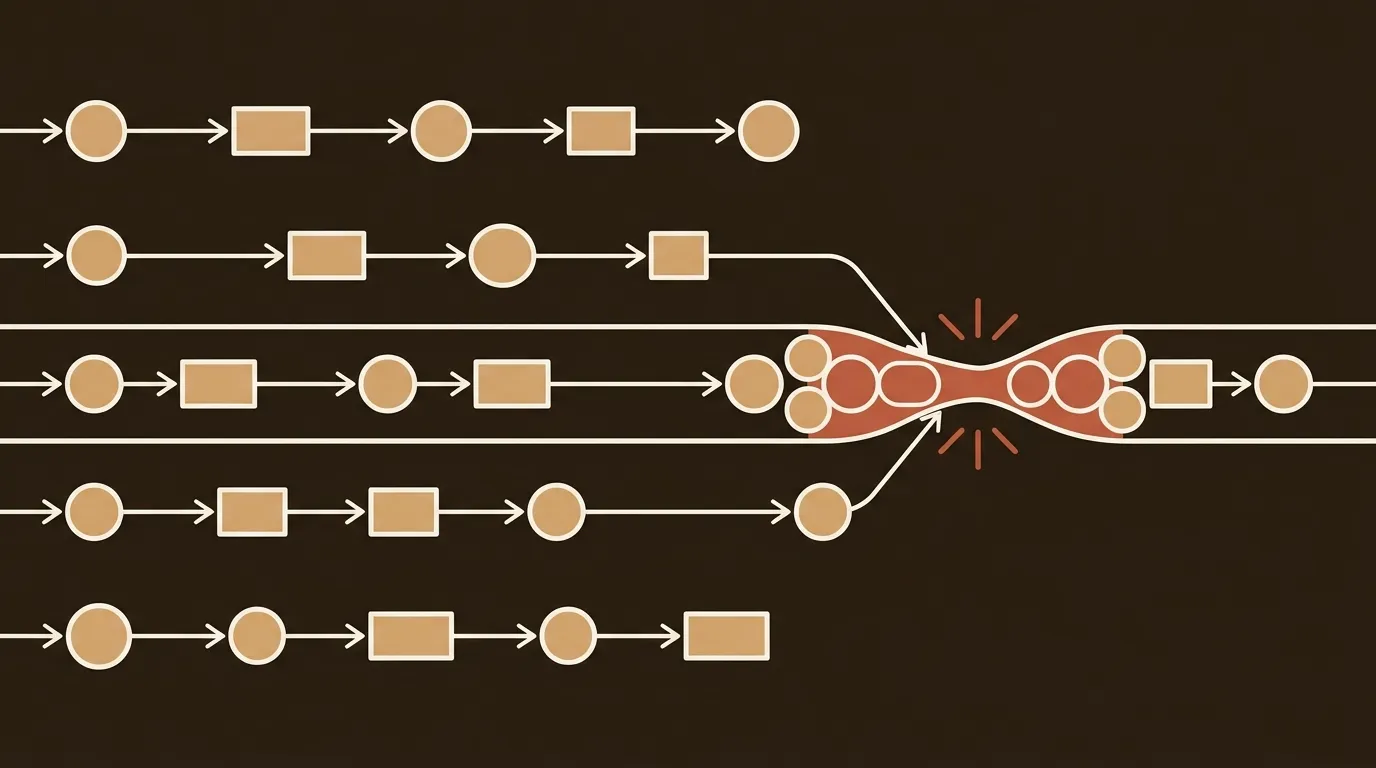

The re-prompt loop eats more time than anyone admits

The average blog post takes 3.8 hours to write. Teams using structured AI workflows report producing publication-ready articles in 9.5 minutes. The operative word is structured. The majority of teams, working without that structure, experience something very different.

They generate a draft. It's off-brand. They adjust the prompt. The tone is better but the structure is wrong. They regenerate. Now it's too long. They edit manually. Forty-five minutes later, they have something usable that still needs a human pass for accuracy.

This is the re-prompt loop, and it's the single biggest time sink in AI-assisted content creation. It happens because there's no structured brief feeding the generation step. No brand voice parameters. No content evaluation between generation and publishing. The AI writes in a vacuum, and the human fills the gap with manual iteration.

One B2B SaaS company found its lead quality dropped 23% after six months of unstructured AI content creation. Prospects couldn't reconcile professional whitepapers with casual blog posts, all generated by different prompts with no unified guidelines. The volume was there. The coherence wasn't.

Performance attribution is a black hole

Here's a number that should bother every marketing leader: 51% of teams cannot track ROI or see the true business impact of their AI investments. Only 19% track AI-specific KPIs.

Traditional content metrics measure what happens after publication. Pageviews, time on page, conversions. But they don't capture what it cost to produce, how efficiently it was created, or whether quality is consistent across the pipeline. Content velocity and cost per content unit are foundational AI metrics, and teams tracking these before and after AI adoption can demonstrate concrete productivity gains within 90 days.
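Both metrics are simple ratios, which is part of why it is surprising so few teams track them. The sketch below shows one possible definition of each. The `Period` structure and function names are hypothetical, and the input numbers are illustrative placeholders, not measured data.

```python
from dataclasses import dataclass


@dataclass
class Period:
    label: str
    weeks: int
    pieces_published: int
    production_hours: float
    tool_spend: float  # dollars: subscriptions, agency fees, and so on


def content_velocity(p: Period) -> float:
    """Pieces published per week."""
    return p.pieces_published / p.weeks


def cost_per_unit(p: Period, hourly_rate: float) -> float:
    """Labor plus tooling cost for one published piece."""
    return (p.production_hours * hourly_rate + p.tool_spend) / p.pieces_published


# Illustrative inputs only.
before = Period("before AI", weeks=4, pieces_published=8,
                production_hours=48.0, tool_spend=0.0)
after = Period("after AI", weeks=4, pieces_published=10,
               production_hours=30.0, tool_spend=29.0)

for p in (before, after):
    print(f"{p.label}: {content_velocity(p):.1f} pieces/week, "
          f"${cost_per_unit(p, hourly_rate=75.0):.2f} per piece")
```

Recording these two numbers for the period before adoption is what makes a later before-and-after comparison possible at all.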

Without this data, content programs can't make rational investment decisions. You don't know if your $3,000/month agency is outperforming your $29/month AI tool. You don't know if publishing three times a week is more effective than once. You don't know if your best-performing content cluster took 10 hours or 100 hours to build. You're flying blind, and the budget conversation next quarter will reflect it.

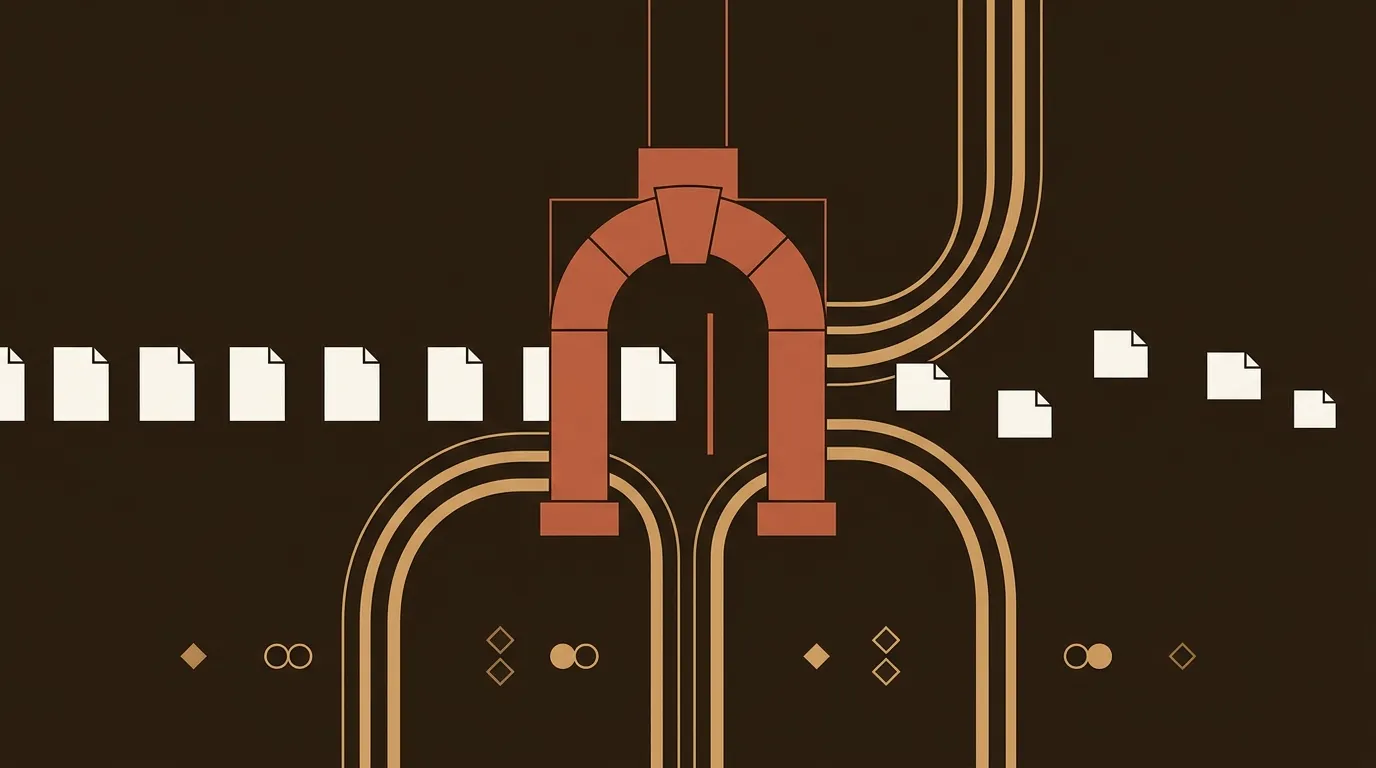

Publishing logistics are the silent tax

Small B2B content teams spend 40% or more of their week on coordination tasks. Pinging writers for drafts. Manually posting to LinkedIn. Copy-pasting leads into CRM fields. Building the same weekly performance report from scratch.

Content promoted across three or more channels generates 287% more leads than single-channel content. But most teams publish and then distribute manually, hours or days later. Or they forget entirely. The post goes live on the blog at 2 PM on Tuesday. The LinkedIn share happens Thursday. The email mention goes out the following week. By then, the algorithm has moved on and the window for initial engagement has closed.
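The fix is to derive every channel's send time from the publish time instead of leaving it to memory. A minimal sketch using only Python's standard library follows. The channel names and offsets are assumptions chosen for illustration, and the actual posting would go through whatever scheduling tools you already use.

```python
from datetime import datetime, timedelta

# Hypothetical offsets from the moment the blog post goes live.
CHANNEL_OFFSETS = {
    "blog": timedelta(0),
    "linkedin": timedelta(minutes=15),
    "email": timedelta(hours=1),
}


def distribution_plan(publish_at: datetime) -> list[tuple[str, datetime]]:
    """Return (channel, send time) pairs, earliest first."""
    plan = [(channel, publish_at + offset)
            for channel, offset in CHANNEL_OFFSETS.items()]
    return sorted(plan, key=lambda item: item[1])


# Tuesday, June 3, 2025 at 2 PM, matching the scenario above.
plan = distribution_plan(datetime(2025, 6, 3, 14, 0))
for channel, send_at in plan:
    print(f"{channel:<9}{send_at:%a %H:%M}")
```

Under these assumed offsets, all three channels go out within an hour of publication rather than across a week.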

This is not a creativity problem. It's a plumbing problem. And plumbing problems are boring enough that they never make it onto the priority list.

Why the top 33% pull ahead

The answer from Content Marketing Institute's 2026 research is surprisingly specific. More than half of effective teams point to content relevance and quality (65%) and team skills and capabilities (53%) as the drivers. Top performers distinguish themselves by having organizational guidelines for generative AI use and integration of AI into daily processes, not just access to better tools.

This tracks with what we've observed. The effective programs don't have bigger budgets or more staff. They've done the unglamorous work of redesigning how content moves through their organization. Brief creation, research, writing, evaluation, SEO, publishing, and distribution aren't separate steps handled by separate people with separate tools. They're one connected system.

The math nobody wants to do

Let's run a quick calculation. Say you're a 2-person marketing team publishing 8 blog posts per month.

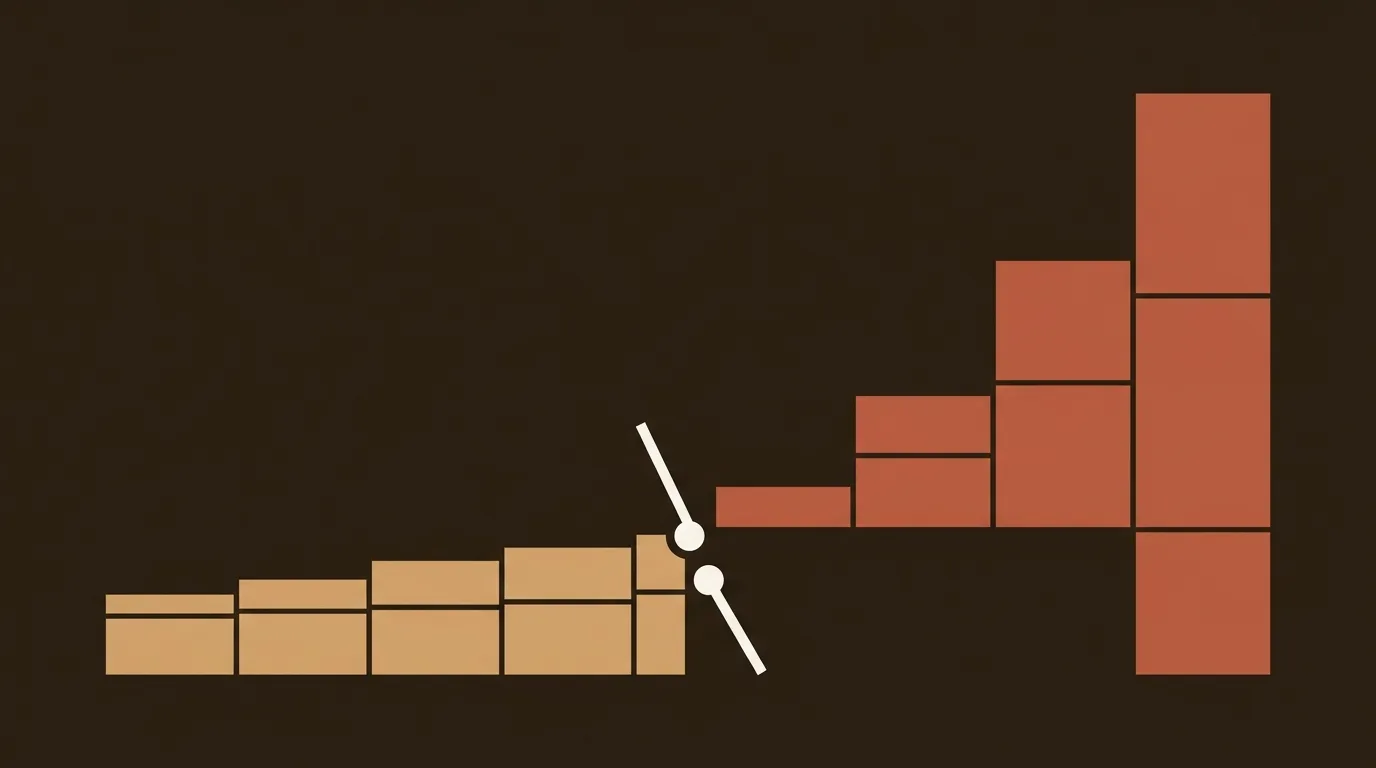

Manual approach: 3.8 hours per post for writing, plus 2 hours for research, topic validation, SEO, formatting, and publishing. That's 46.4 hours per month. At a blended cost of $75/hour, you're spending $3,480/month on blog content.

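AI-grafted-onto-manual approach: 1.5 hours per post (AI draft + manual editing) plus the same 2 hours for everything else. That's 28 hours per month. Cost: $2,100/month. You cut the total bill by about 40%, and all of that came from the writing step, which got roughly 60% faster. But the 2 hours of non-writing work per post didn't move, and it now accounts for about 57% of the remaining time and cost.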
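The two scenarios above can be checked with a few lines of arithmetic. The sketch uses only the figures stated in this section (8 posts a month, 3.8 or 1.5 writing hours, 2 hours of other work, $75/hour). The `Workflow` class and its method names are illustrative.

```python
from dataclasses import dataclass

POSTS_PER_MONTH = 8
HOURLY_RATE = 75.0  # blended cost in dollars


@dataclass
class Workflow:
    writing_hours: float  # per post
    other_hours: float    # per post: research, validation, SEO, formatting, publishing

    def monthly_hours(self) -> float:
        return (self.writing_hours + self.other_hours) * POSTS_PER_MONTH

    def monthly_cost(self) -> float:
        return self.monthly_hours() * HOURLY_RATE

    def non_writing_share(self) -> float:
        """Fraction of per-post time spent on everything except writing."""
        return self.other_hours / (self.writing_hours + self.other_hours)


manual = Workflow(writing_hours=3.8, other_hours=2.0)
grafted = Workflow(writing_hours=1.5, other_hours=2.0)

total_saving = 1 - grafted.monthly_cost() / manual.monthly_cost()
writing_saving = 1 - grafted.writing_hours / manual.writing_hours

for name, wf in (("manual", manual), ("AI-grafted", grafted)):
    print(f"{name}: {wf.monthly_hours():.1f} h, ${wf.monthly_cost():,.0f}, "
          f"non-writing share {wf.non_writing_share():.0%}")
print(f"total saving {total_saving:.0%}, writing-step saving {writing_saving:.0%}")
```

Changing `other_hours` in the second scenario is the quickest way to see how much more there is to gain outside the writing step.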

Teams that restructure around workflow automation report 40-60% reduction in total content production cost and 50-70% faster turnaround. The savings come from automating the other 60%, not from making the writing step faster.

So the 67% of underperforming teams? They optimized the writing and left the rest, now close to 60% of the work, untouched. That's the gap.

The uncomfortable question ahead

About 95% of AI initiatives fail to deliver expected financial returns, largely because organizations apply outdated ROI frameworks that don't capture what AI-generated content actually does.

The dividing line forming right now isn't between teams with AI and teams without it. Everyone has AI. It's between teams that redesigned their workflow architecture around AI and teams that plugged AI into a workflow designed for humans. The first group will compound their advantage every quarter. The second group will keep wondering why their content metrics look the same as they did in 2023.

We don't think this gap closes on its own. It widens. And the teams that figure out the plumbing, not just the prose, are the ones who'll still be publishing profitably a year from now.

References

- Content Marketing Institute, "B2B Content and Marketing Trends: Insights for 2026" -- https://contentmarketinginstitute.com/b2b-research/b2b-content-marketing-trends-research

- G2, "AI in B2B Marketing: How Teams Are Using AI In 2025" -- https://learn.g2.com/ai-in-b2b-marketing

- 1827 Marketing, "AI in B2B Marketing: 2025 Statistics Every CMO Needs to Know" -- https://1827marketing.com/smart-thinking/ai-in-b2b-marketing-2025-statistics-every-cmo-needs-to-know/

- NAV43, "AI Content Creation Workflows: Scale Quality Content & Eliminate the Prompt Bottleneck" -- https://nav43.com/blog/ai-content-creation-workflows-scale-quality-content-eliminate-the-prompt-bottleneck/

- Xenia Consulting, "AI-Powered Content Workflows: B2B Marketer's Guide" -- https://xenia-consulting.com/ai-powered-content-workflows-b2b/