Most B2B content teams built their 2025 budgets around a simple assumption: AI generation drops the cost per article to somewhere between $15 and $50, and a light human edit adds maybe 20 minutes of labor on top. That math worked for about 18 months. It doesn't work anymore.

Google's March 2026 core update, rolled out alongside a separate spam update on March 24, specifically targets scaled content abuse. And the simultaneous expansion of AI Overviews has introduced a second, parallel set of editorial requirements that most teams haven't even begun to account for. The result is a paradox that's quietly wrecking content operation budgets: AI handles more of the writing, but earning visibility now demands more human time per article, not less.

We've been tracking the numbers. Here's what a realistic, fully-loaded cost model actually looks like in 2026.

The Two-Front Problem

The March 2026 update isn't operating in isolation. It followed a February 2026 core update that started targeting AI content quality and topical authority signals. Think of February as the warning shot and March as the enforcement action. Sites publishing hundreds or thousands of pages per month with minimal human review are the primary casualties.

But ranking in traditional SERPs is only half the battle now. AI Overviews have become a second distribution channel with its own editorial logic. Google is testing large citation blocks at the bottom of AI Overviews, and getting cited there follows different rules than ranking in position one.

Here's the kicker: ranking #1 no longer guarantees an AI citation. Only 38% of AI Overview citations come from top-10 pages, down from 76% a year ago. And roughly 90% of ChatGPT citations come from pages ranked position 21 or lower. So you need depth across sub-queries, not just one well-optimized page.

This means every article you publish now has two performance criteria, each with distinct editorial requirements. One set of standards for traditional ranking. Another for citation eligibility. The overlap is smaller than you'd think.

Why the 2024 Cost Model Broke

Let's do the math that most teams ran in late 2024 or early 2025. A typical "AI-assisted" article cost model looked something like this:

- AI generation (GPT-4 or Claude): $0.50-$3.00 in API costs
- Light copyedit: 15-20 minutes of human time
- SEO optimization pass: 10 minutes with a tool like Clearscope or SurferSEO
- Total human time: ~30 minutes
- Fully loaded cost at $50/hr blended rate: roughly $25-$30 per article
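For anyone who wants to rerun that arithmetic with their own rates, here is a minimal sketch. The function name `article_cost` is ours, purely for illustration; the inputs are the figures from the list above (30 minutes of human time, $0.50-$3.00 in API costs, $50/hr blended rate).

```python
# Assumed inputs: the figures from the 2024 list above.
BLENDED_RATE_PER_HOUR = 50.0


def article_cost(human_minutes: float, api_cost: float,
                 rate_per_hour: float = BLENDED_RATE_PER_HOUR) -> float:
    """Fully loaded cost: human labor at the blended rate plus API spend."""
    return human_minutes / 60 * rate_per_hour + api_cost


low = article_cost(human_minutes=30, api_cost=0.50)
high = article_cost(human_minutes=30, api_cost=3.00)
print(f"2024 model: ${low:.2f} to ${high:.2f} per article")
```

Swap in your own blended rate and the $25-$30 range moves with it, which is the point: the labor term dominates, and API spend is close to a rounding error.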

That model assumed the output was "good enough" after a quick once-over. And for a while, it was. Google hadn't yet drawn a hard line on scaled AI content, and AI Overviews were still in limited rollout.

Now look at what 2026 actually requires.

The 2026 Fully-Loaded Article: Line by Line

We've broken this into the stages that content operations teams actually need to run. These aren't aspirational. They're the minimum for content that ranks and gets cited.

Strategic briefing (before any AI touches it)

Quality starts before you ever prompt the AI. A proper brief defines target keyword, search intent, audience segment, and the specific angle that differentiates this piece from the 47 others already ranking. You also need to map the sub-queries that AI Overviews are pulling citations from, because your article needs to address those too.

Human time: 25-40 minutes per article.

This step didn't exist in most 2024 workflows. Teams were generating topics from keyword lists and feeding them straight into prompts. That approach now produces content that Google's systems flag as thin or duplicative.

AI generation

Still the cheapest step. API costs haven't changed much. The generation itself takes seconds.

Cost: $0.50-$5.00 depending on model, length, and iteration count.

Section-by-section fact-checking

This is the new non-negotiable. AI models generate text that sounds authoritative, but the details are often fabricated or outdated. An LLM will confidently cite a 2022 statistic as current. It will reference studies that don't exist. And these errors aren't obvious; they're embedded in otherwise fluent prose.

Every claim, statistic, and reference needs manual verification. Not a spot check. A full audit.

Human time: 30-45 minutes per 1,500-word article. Longer for data-heavy topics.

We've seen teams try to automate this step with a second AI pass. It catches some things. It misses others. The liability of publishing fabricated data (especially in B2B, where your readers are domain experts) makes this a genuinely hard problem to shortcut.

Structural editing for citation eligibility

This one is new and weird. 55% of AI Overview citations come from the top 30% of a page. That means your best answers need to appear early. But traditional engagement-optimized content buries the answer to build dwell time.

You don't have to pick one approach, but you do have to be intentional about both. Someone needs to restructure the article so that answer capsules, data tables, and definitive statements appear in positions where AI systems extract them.

Pages containing original data tables earn 4.1x more AI citations. That's not a rounding error. That's a structural editing requirement.

Human time: 20-30 minutes per article.
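A rough way to sanity-check answer placement during the structural edit is sketched below. This is our own illustration, not a tool Google or anyone else provides: the helper names are hypothetical, and measuring "top 30% of a page" by character offset in the plain-text draft is an assumption, since the statistic doesn't say how position is measured.

```python
from typing import Optional

# Assumption: page position is approximated by character offset in the draft.
EXTRACTION_ZONE = 0.30


def answer_position(article_text: str, answer_snippet: str) -> Optional[float]:
    """Return where the snippet starts as a fraction of the text (0.0 = very top)."""
    if not article_text:
        return None
    index = article_text.find(answer_snippet)
    if index == -1:
        return None  # snippet not present in the draft
    return index / len(article_text)


def in_extraction_zone(article_text: str, answer_snippet: str,
                       threshold: float = EXTRACTION_ZONE) -> bool:
    """True if the snippet starts within the first `threshold` share of the text."""
    position = answer_position(article_text, answer_snippet)
    return position is not None and position <= threshold


draft = (
    "Intro that sets up the question. "
    "Short answer: budget roughly two hours of human time per article. "
    + "Supporting detail and background. " * 10
)
print(in_extraction_zone(draft, "Short answer:"))
```

It won't tell you whether the answer is any good. It only flags drafts where the key statement has drifted below the fold, so the editor knows where to look first.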

E-E-A-T signal layer

E-E-A-T (experience, expertise, authoritativeness, trustworthiness) is non-negotiable in 2026. Small businesses and solo creators have to prove real expertise. For AI-generated content, this means adding author attribution with verifiable credentials, linking to primary sources, including original analysis or commentary that couldn't have come from an LLM alone, and ensuring the bylined author actually reviewed the piece.

This isn't just metadata. It requires a subject matter expert to read the draft and add the specificity that signals real experience. A generic AI article about "B2B lead generation" won't cut it. An article that references specific campaigns, names tools by version number, and includes original calculations will.

Human time: 15-25 minutes if your SME is available. Much longer if they're not.

Post-publication monitoring and iteration

The checklist doesn't end at publish. Citation-ready content is a living system. Within the first two weeks, you need to test your target query in ChatGPT, Perplexity, and Google AI Overviews. By week four, check Search Console for AI-related referral traffic. If you're not getting cited within 30 days, you update: add missing subtopics, strengthen the opening, add more data.

The teams that update systematically end up owning citations that everyone else leaves on the table.

Human time: 15-20 minutes per article per month, ongoing.
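If you track articles in a script or spreadsheet export, the checkpoints above can be turned into due dates. The sketch below is one way to do it. The function and key names are hypothetical, and it treats "first two weeks", "week four", and "30 days" as 14, 28, and 30 days after the publish date.

```python
from datetime import date, timedelta


def monitoring_schedule(publish_date: date) -> dict:
    """Map each post-publication checkpoint to its due date."""
    return {
        # Test the target query in ChatGPT, Perplexity, and AI Overviews.
        "ai_query_test_due": publish_date + timedelta(weeks=2),
        # Review Search Console data for the page.
        "search_console_check_due": publish_date + timedelta(weeks=4),
        # If still uncited, schedule an update cycle.
        "update_decision_due": publish_date + timedelta(days=30),
    }


schedule = monitoring_schedule(date(2026, 4, 1))
for task, due in schedule.items():
    print(f"{task}: {due.isoformat()}")
```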

The New Math

Add it up for a single article:

| Step | Human Time | Cost @ $50/hr |
|---|---|---|
| Strategic briefing | 30 min | $25 |
| AI generation | -- | $3 |
| Fact-checking | 40 min | $33 |
| Structural editing | 25 min | $21 |
| E-E-A-T review | 20 min | $17 |
| Post-pub monitoring (month 1) | 20 min | $17 |
| Total | ~135 min | ~$116 |

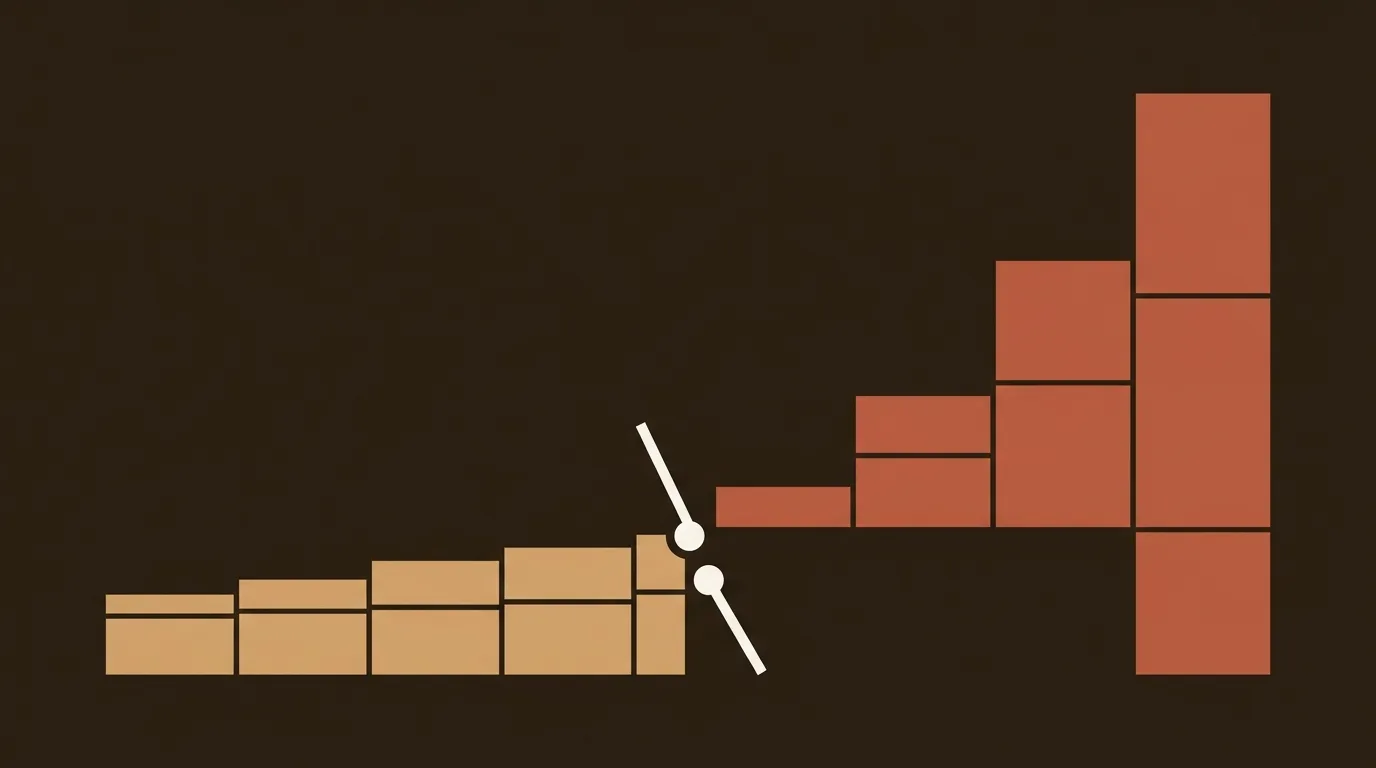

Compare that to the $25-$30 per article teams budgeted in 2024. The cost has roughly quadrupled, and the human time component went from 30 minutes to over two hours.
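Here is the same sum as a short script, in case you want to plug in your own stage times. The stage minutes and the $3 generation cost are the table's values; the variable names are ours.

```python
RATE_PER_HOUR = 50.0

# Human minutes per stage, as listed in the table above.
STAGE_MINUTES = {
    "Strategic briefing": 30,
    "Fact-checking": 40,
    "Structural editing": 25,
    "E-E-A-T review": 20,
    "Post-pub monitoring (month 1)": 20,
}
API_COST = 3.0  # the AI generation line item

total_minutes = sum(STAGE_MINUTES.values())
labor_cost = total_minutes / 60 * RATE_PER_HOUR
total_cost = labor_cost + API_COST

print(f"Human time: {total_minutes} min")
print(f"Total cost: ${total_cost:.2f}")
```

The unrounded total comes out about fifty cents under the table's ~$116, because the table rounds each row to the nearest dollar before summing.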

And this is the realistic estimate, not the pessimistic one. If your fact-checking surfaces actual errors (it will), add revision cycles. If your SME pushes back on the angle, add more. If the post-pub audit shows no citations after 30 days, add an update cycle.

The Volume Trap

Here's where it gets uncomfortable. Sites publishing 50-100 quality AI articles with human editing saw traffic increases of 30-80%. Sites publishing 1,000+ unedited AI articles saw traffic drops of 40-90%.

The difference was not whether they used AI. It was whether they built a quality control process around it.

So the team that planned to publish 200 articles a month at $30 each (total budget: $6,000) now needs to either cut volume to ~50 articles at $116 each (total: $5,800) or increase budget to $23,200 to maintain volume. Most teams will cut volume. That's the right call, but it requires rethinking the entire content calendar.
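The trade-off is easy to recompute for your own numbers. A minimal sketch, using the $30 and $116 per-article figures from above (the helper names are ours):

```python
import math


def articles_within_budget(budget: float, cost_per_article: float) -> int:
    """How many whole articles a fixed monthly budget covers."""
    return math.floor(budget / cost_per_article)


def budget_for_volume(articles: int, cost_per_article: float) -> float:
    """Monthly budget needed to hold a given article count."""
    return articles * cost_per_article


old_budget = budget_for_volume(200, 30)  # the 2024 plan: 200 articles at $30
print(f"Old budget: ${old_budget:,.0f}")
print(f"Articles that budget buys at $116: {articles_within_budget(old_budget, 116)}")
print(f"Budget to keep 200 articles at $116: ${budget_for_volume(200, 116):,.0f}")
```

The fixed-budget path lands at 51 articles, which is where the "~50" above comes from.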

Some teams will try to split the difference by applying full editorial rigor to "hero" posts and lighter review to "long-tail" content. That strategy has legs, but only if you accept that the long-tail pieces are ranking-eligible but probably not citation-eligible. You're playing two different games with two different cost structures.

What This Means for Staffing

A one-person marketing team was never going to produce 50 fully-reviewed articles a month. But in 2024, AI made it feel possible. The generation was fast, the editing felt manageable, and the volume felt like a competitive advantage.

In 2026, the bottleneck is human review capacity. A single marketer can realistically produce 15-20 fully reviewed articles per month if that's most of what they do. An agency managing multiple client blogs needs dedicated QA roles that didn't exist two years ago.

The irony isn't lost on us. AI was supposed to reduce headcount in content operations. Instead, it's created a new category of work (citation optimization, fact verification, structural editing for AI extraction) that requires skilled humans.

Where the Efficiency Gains Actually Live

This isn't all bad news. AI still saves real time in specific phases: first-draft generation, keyword clustering, outline creation, and meta description writing. Those gains are legitimate. A writer who used to spend four hours drafting a 2,000-word article now spends 40 minutes reviewing and improving an AI draft.

But the savings get recaptured (and then some) by the new editorial requirements. The net effect is that AI changes where humans spend time, not how much time they spend. Production shifts from creation to curation, verification, and optimization.

The highest-performing teams we've observed treat AI as a productivity multiplier in the drafting phase while investing more human hours in the quality phases that Google and AI systems now explicitly reward. That's the model that survives 2026. Whether it scales the way anyone hoped in 2024 is a question nobody has a clean answer to yet.
