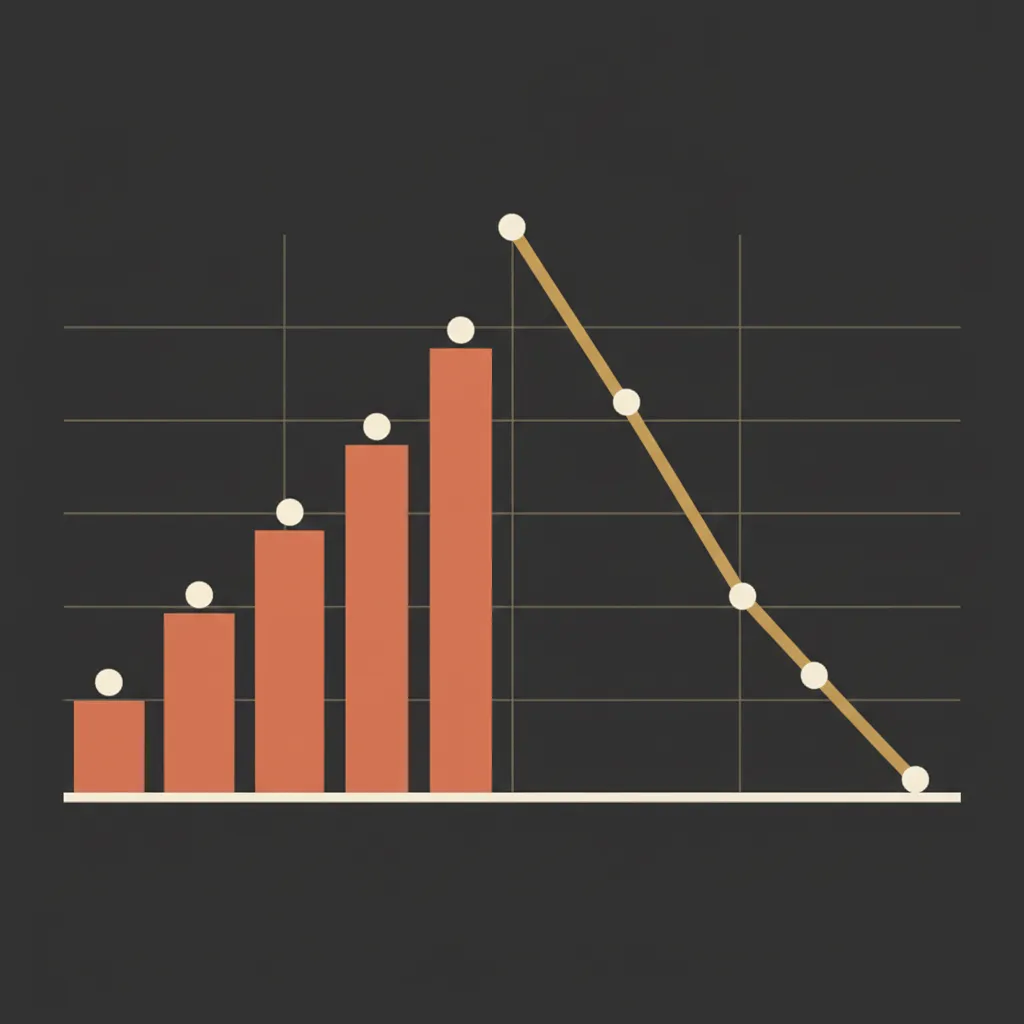

Ninety-five percent of generative AI pilots are failing to deliver measurable P&L impact, according to a recent MIT report covered by Fortune. That number has been making the rounds on LinkedIn like a doomsday headline. But the more interesting signal is buried underneath it: 79% of teams using generative AI report productivity gains in their day-to-day work.

Both things are true at the same time. And the contradiction tells us something specific about where the breakdown actually happens.

The Productivity Paradox Is a Measurement Problem

If individual contributors feel more productive but companies can't find the ROI on their P&L, the problem isn't the AI model. It's what happens between "the draft is done" and "this content is generating organic traffic six months later."

We've seen this pattern over and over in content marketing. A writer (or an AI) produces a blog post. It sits in a Google Doc for a week. Someone eventually copies it into the CMS, guesses at a meta description, skips internal linking because it's tedious, and hits publish. The post earns 47 pageviews in its first month and quietly dies.

The Marketing AI Institute's analysis of the MIT data makes an important distinction: the study defined "success" narrowly as deployment beyond pilot phase with measurable KPIs and ROI impact six months post-pilot. That's a high bar. And it's exactly the right one for content teams to adopt, because content that doesn't compound isn't content strategy. It's busywork.

Where the MIT Data Gets Misread

There's a tempting narrative that goes like this: "AI writing tools aren't good enough yet, so most pilots fail." That reading is wrong.

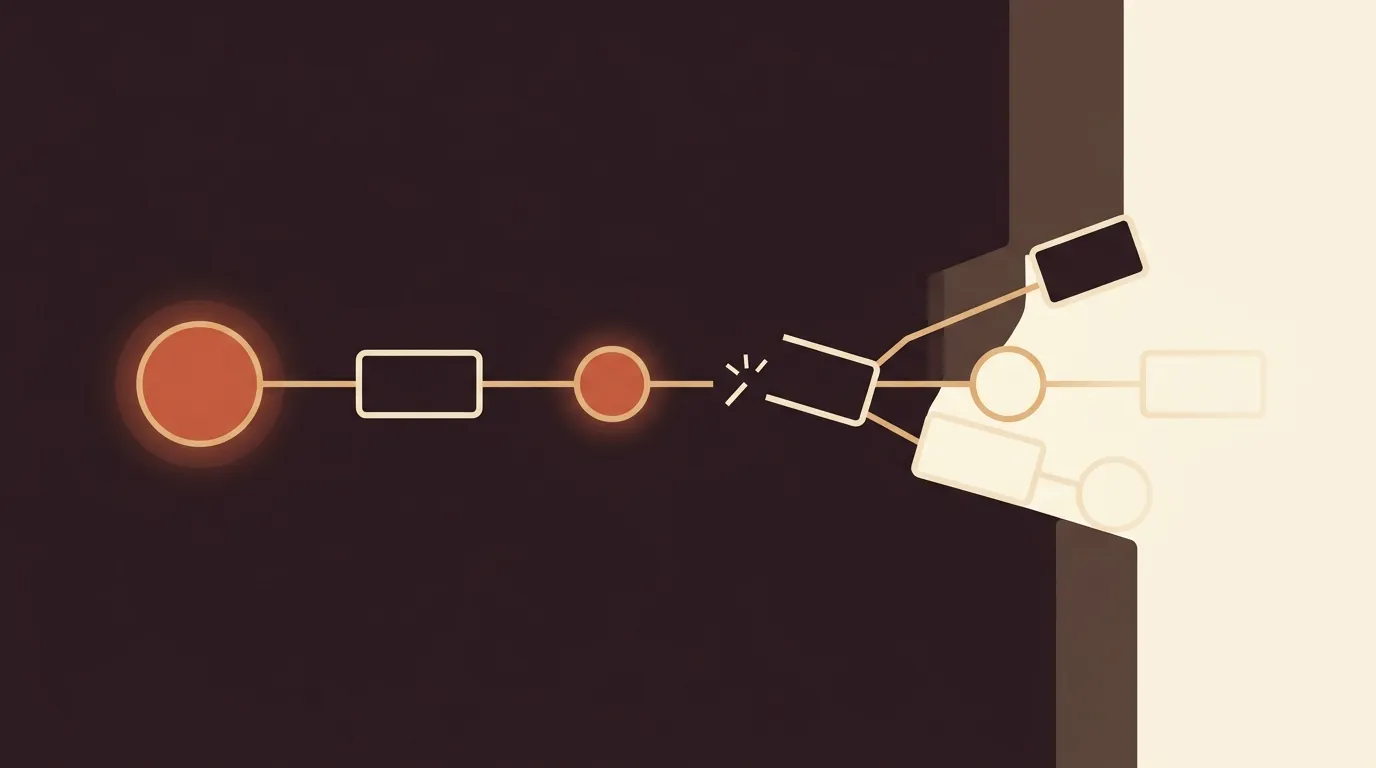

The MIT research, as Trullion's breakdown explains, found that generic tools like ChatGPT excel for individuals because of their flexibility but stall in enterprise settings because they don't learn from or adapt to existing workflows. The failure isn't generation quality. It's integration depth.

Internal builds achieved roughly a 33% success rate. Specialized vendor solutions hit about 67%. That gap is enormous, and it points to a specific architectural problem: most teams bolt a writing tool onto the front of their workflow and assume the rest will sort itself out.

It does not sort itself out.

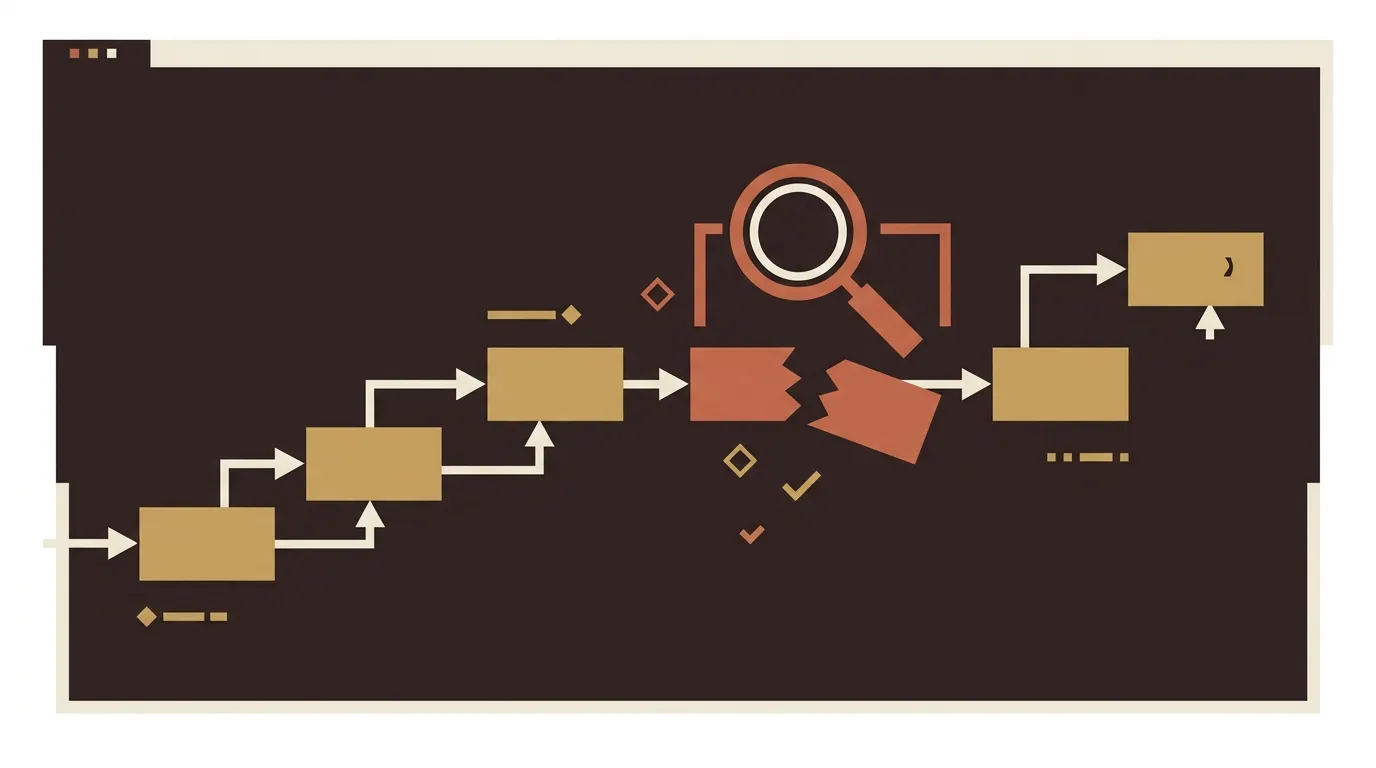

The six steps that happen after a draft exists (keyword validation, internal linking, quality scoring, metadata and publishing, performance attribution, and content decay re-optimization) are where most of the cost sits and where nearly all of the ROI variance lives. These steps are boring. They're also the difference between a blog that compounds and a blog that doesn't.

The Six Post-Generation Steps Nobody Instruments

Let's be specific about what falls apart after the writing is done.

1. Keyword Validation and Search Intent Alignment

A draft exists. But does it target a keyword cluster that has actual search volume? Does it match the intent behind those queries? Most AI writing tools produce content based on a prompt, not based on validated keyword research tied to your domain's authority gaps.
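Here is a minimal Python sketch of that check. It assumes the team already has keyword research exported with a monthly volume and an intent label for each keyword. The `KeywordData` record, the intent labels, and the 100-searches-per-month floor are hypothetical choices for illustration, not a standard.

```python
from dataclasses import dataclass


@dataclass
class KeywordData:
    """One row of (hypothetical) exported keyword research."""
    keyword: str
    monthly_volume: int
    intent: str  # assumed labels: "informational", "commercial", "transactional"


def validate_target(draft_keyword: str, draft_intent: str,
                    research: dict[str, KeywordData],
                    min_volume: int = 100) -> list[str]:
    """Return the problems found; an empty list means the draft's target passes."""
    data = research.get(draft_keyword.strip().lower())
    if data is None:
        return [f"'{draft_keyword}' is not in the validated keyword list"]
    problems = []
    if data.monthly_volume < min_volume:
        problems.append(f"volume {data.monthly_volume} is below {min_volume}")
    if data.intent != draft_intent:
        problems.append(
            f"draft intent '{draft_intent}' does not match search intent '{data.intent}'")
    return problems


# Example research export, keyed by lowercase keyword (illustrative numbers).
research = {
    "content ops checklist": KeywordData("content ops checklist", 320, "informational"),
    "ai content pipeline": KeywordData("ai content pipeline", 40, "commercial"),
}

print(validate_target("Content Ops Checklist", "informational", research))  # []
print(validate_target("AI Content Pipeline", "informational", research))
```

Returning a list of problems instead of a bare pass/fail lets an editor see why a draft was held back.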

According to Averi's 2026 State of Content Workflows report, AI-assisted content workflows can cut production time by 60 to 80%. But that statistic masks a dirty truth: more than half of all respondents still spend one or more workdays creating each piece of content. Speed gains in the drafting phase are being eaten alive by manual effort in the validation and optimization phases.

2. Internal Linking

This one is genuinely messy. SeoProAI's guide to internal linking tools lays out the challenge clearly: as your site grows, internal linking becomes disproportionately more tedious, because every new page is a potential link source and target for every existing page. You need to interlink an ever-growing number of pages, and most teams simply stop doing it.

The cost of neglecting internal linking stays invisible until you audit your site and find that, say, 40% of your high-value articles are orphaned, with zero internal links pointing to them. Those pages will struggle to rank, no matter how good the writing is, because almost no internal paths lead crawlers or readers to them.

Automated internal linking tools exist, and they work by analyzing topical relevance and distributing authority across pages. But they need to be part of the pipeline, not an afterthought someone runs manually every quarter.
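Finding orphans is the mechanical part of that pipeline. A minimal sketch, assuming you can export your site's internal links as (source, target) URL pairs from a crawler: count inbound links per page and report the pages with none. The function name and the choice to exempt the homepage as an entry point are illustrative assumptions.

```python
from collections import Counter


def find_orphans(pages: set[str], links: list[tuple[str, str]],
                 entry_points: frozenset[str] = frozenset({"/"})) -> set[str]:
    """Return pages with no inbound internal links.

    Self-links and links to URLs outside `pages` are ignored; entry points
    such as the homepage are excluded because visitors reach them directly.
    """
    inbound = Counter(target for source, target in links
                      if source != target and target in pages)
    return {page for page in pages
            if inbound[page] == 0 and page not in entry_points}


pages = {"/", "/pricing", "/blog/ai-pilots", "/blog/internal-linking"}
links = [
    ("/", "/pricing"),
    ("/", "/blog/ai-pilots"),
    ("/blog/ai-pilots", "/blog/ai-pilots"),  # self-link, does not count
]
print(find_orphans(pages, links))  # {'/blog/internal-linking'}
```

Run on every publish rather than once a quarter, a check like this turns orphaned pages from an audit surprise into a publish-time warning.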

3. Quality Scoring and Editorial Checks

The AI produces a draft. Who checks it? In most pilot programs, the answer is "whoever has time," which usually means nobody.

A proper quality gate includes grammar and readability scoring, brand voice assessment, factual verification, plagiarism detection, and AI detection checks. The Averi report describes a review phase where AI handles grammar and plagiarism while humans verify facts and refine tone. That's the right model. But most teams skip this step entirely because it requires tooling they haven't set up.

And here's the part that makes this problem worse: over-automation without editorial oversight, such as letting a linking tool run unchecked, can produce robotic anchor text, redundant links, or circular paths that actively frustrate readers. So you can't just automate and walk away. You need a scoring system that catches problems before they go live. Quality assurance is not a nice-to-have. It's the mechanism that prevents your content program from becoming a liability.
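One way to make the gate enforceable rather than optional is to treat a missing score as a failure, so skipping a check blocks publishing. The sketch below assumes each check (readability, brand voice, originality, human fact sign-off) reports a score normalized to 0..1; the check names and thresholds are hypothetical and would need tuning to your own tools.

```python
# Hypothetical check names and minimum scores; each score is assumed to be
# normalized to 0..1 by whatever tool produces it.
THRESHOLDS = {
    "readability": 0.60,
    "brand_voice": 0.70,
    "originality": 0.90,     # e.g. 1 minus plagiarism similarity
    "facts_verified": 1.00,  # human sign-off recorded as 1.0 (yes) or 0.0 (no)
}


def quality_gate(scores: dict[str, float],
                 thresholds: dict[str, float] = THRESHOLDS) -> tuple[bool, list[str]]:
    """Return (passed, failures). A check with no score counts as a failure."""
    failures = []
    for check, minimum in thresholds.items():
        score = scores.get(check)
        if score is None:
            failures.append(f"{check}: missing")
        elif score < minimum:
            failures.append(f"{check}: {score:.2f} < {minimum:.2f}")
    return (not failures, failures)


passed, failures = quality_gate(
    {"readability": 0.72, "brand_voice": 0.65, "originality": 0.95})
print(passed)    # False
print(failures)  # ['brand_voice: 0.65 < 0.70', 'facts_verified: missing']
```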

4. Metadata and Publishing Pipeline

Title tags, meta descriptions, canonical URLs, clean slugs, heading structure, alt text, schema markup, XML sitemap updates. Each of these is a small thing. Collectively, they determine whether search engines can parse and present your content correctly.

Most CMS platforms offer some of this natively. But "some" isn't enough when you're publishing 20 to 50 posts per month. The manual overhead of checking each field across each post adds up to hours per week, and it's exactly the kind of repetitive task that humans are bad at doing consistently.
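Several of those fields can be checked automatically before publishing. This is a sketch under stated assumptions: the `PostMeta` fields are hypothetical, and the 60-character title and 50 to 160 character description limits are common rules of thumb, not search-engine requirements. Heading structure, schema markup, and sitemaps are left out to keep it short.

```python
import re
from dataclasses import dataclass, field

# Lowercase words joined by single hyphens, e.g. "post-generation-steps".
SLUG_PATTERN = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


@dataclass
class PostMeta:
    title: str
    meta_description: str
    slug: str
    canonical_url: str
    image_alts: list[str] = field(default_factory=list)


def check_metadata(meta: PostMeta) -> list[str]:
    """Return human-readable issues; the length limits are rules of thumb."""
    issues = []
    if not 0 < len(meta.title) <= 60:
        issues.append("title missing or longer than 60 characters")
    if not 50 <= len(meta.meta_description) <= 160:
        issues.append("meta description outside 50-160 characters")
    if not SLUG_PATTERN.fullmatch(meta.slug):
        issues.append("slug is not lowercase and hyphenated")
    if not meta.canonical_url.startswith("https://"):
        issues.append("canonical URL missing or not HTTPS")
    if any(not alt.strip() for alt in meta.image_alts):
        issues.append("one or more images lack alt text")
    return issues


post = PostMeta(
    title="The Six Post-Generation Steps Nobody Instruments",
    meta_description="Why AI content pilots stall after the draft, "
                     "and which handoffs to automate first.",
    slug="post-generation-steps",
    canonical_url="https://example.com/blog/post-generation-steps",
    image_alts=["Content pipeline diagram", ""],
)
print(check_metadata(post))  # ['one or more images lack alt text']
```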

5. Performance Attribution

Here's where things get genuinely hard in 2026. With AI-generated search answers (Google's AI Overviews, Microsoft Copilot in Bing, Perplexity) pulling content into answer boxes, traditional click-through metrics no longer capture the full picture. Averi puts the AI marketing market at $47.32 billion in 2025, and generative engine optimization (GEO) tracking is becoming a necessary layer on top of standard SEO analytics.

If you can't attribute organic traffic to specific posts, you can't calculate content ROI. And if you can't calculate content ROI, your pilot will join the 95% that fail to show P&L impact. Not because the content was bad, but because nobody measured the right thing.
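At its simplest, attribution means counting organic sessions per landing page and comparing the value they represent with what the post cost. The sketch below assumes session records with hypothetical `landing_page` and `channel` keys, plus an assumed dollar value per session; real attribution models are considerably more involved.

```python
from collections import Counter


def organic_sessions_by_post(sessions: list[dict]) -> Counter:
    """Count organic-search sessions per landing page.

    Assumes each session record has hypothetical "landing_page" and
    "channel" keys, e.g. from an analytics export.
    """
    return Counter(s["landing_page"] for s in sessions
                   if s["channel"] == "organic")


def content_roi(sessions: int, value_per_session: float, cost: float) -> float:
    """Simple ROI: (attributed value - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (sessions * value_per_session - cost) / cost


sessions = (
    [{"landing_page": "/blog/ai-pilots", "channel": "organic"}] * 900
    + [{"landing_page": "/blog/ai-pilots", "channel": "paid"}] * 300
)
counts = organic_sessions_by_post(sessions)
# Assumed: each organic session is worth $2.00; the post cost $600 all-in.
print(counts["/blog/ai-pilots"])                           # 900
print(content_roi(counts["/blog/ai-pilots"], 2.0, 600.0))  # 2.0
```

Note what this model cannot see: a post cited in an AI Overview without earning a click contributes nothing here, which is exactly the gap GEO tracking is meant to fill.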

6. Content Decay and Re-optimization

A post that ranks #4 today is unlikely to still rank #4 in six months without maintenance. Search intent shifts. Competitors publish better versions. SERP features change. Content decay is not a bug; it's how search works.

The best content operations schedule automated re-audits, detect declining performance, and trigger re-optimization based on new queries and competitor activity. Most teams do this manually, if they do it at all.
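Decay detection can start as a simple comparison of recent traffic against a trailing baseline. A minimal sketch, assuming weekly organic clicks per post are available (for example from a Search Console export); the four-week window and 30% drop threshold are hypothetical defaults, not benchmarks.

```python
def is_decaying(weekly_clicks: list[int], window: int = 4,
                drop_threshold: float = 0.30) -> bool:
    """Flag a post whose last `window` weeks of clicks fell more than
    `drop_threshold` below the `window` weeks before them."""
    if len(weekly_clicks) < 2 * window:
        return False  # not enough history to compare
    recent = sum(weekly_clicks[-window:])
    baseline = sum(weekly_clicks[-2 * window:-window])
    if baseline == 0:
        return False
    return (baseline - recent) / baseline > drop_threshold


# Hypothetical weekly organic clicks per post, oldest first.
posts = {
    "/blog/ai-pilots": [120, 118, 125, 130, 90, 80, 75, 70],
    "/blog/internal-linking": [100, 100, 100, 100, 95, 90, 98, 97],
}
reoptimize_queue = [url for url, clicks in posts.items() if is_decaying(clicks)]
print(reoptimize_queue)  # ['/blog/ai-pilots']
```

Scheduled weekly, the resulting queue becomes the re-optimization backlog instead of something an editor has to notice by chance.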

The Build vs. Buy Mistake

The Trullion analysis surfaced a pattern that should make every marketing manager pause: enterprises almost everywhere tried to build their own AI tools, yet purchased solutions delivered more reliable results.

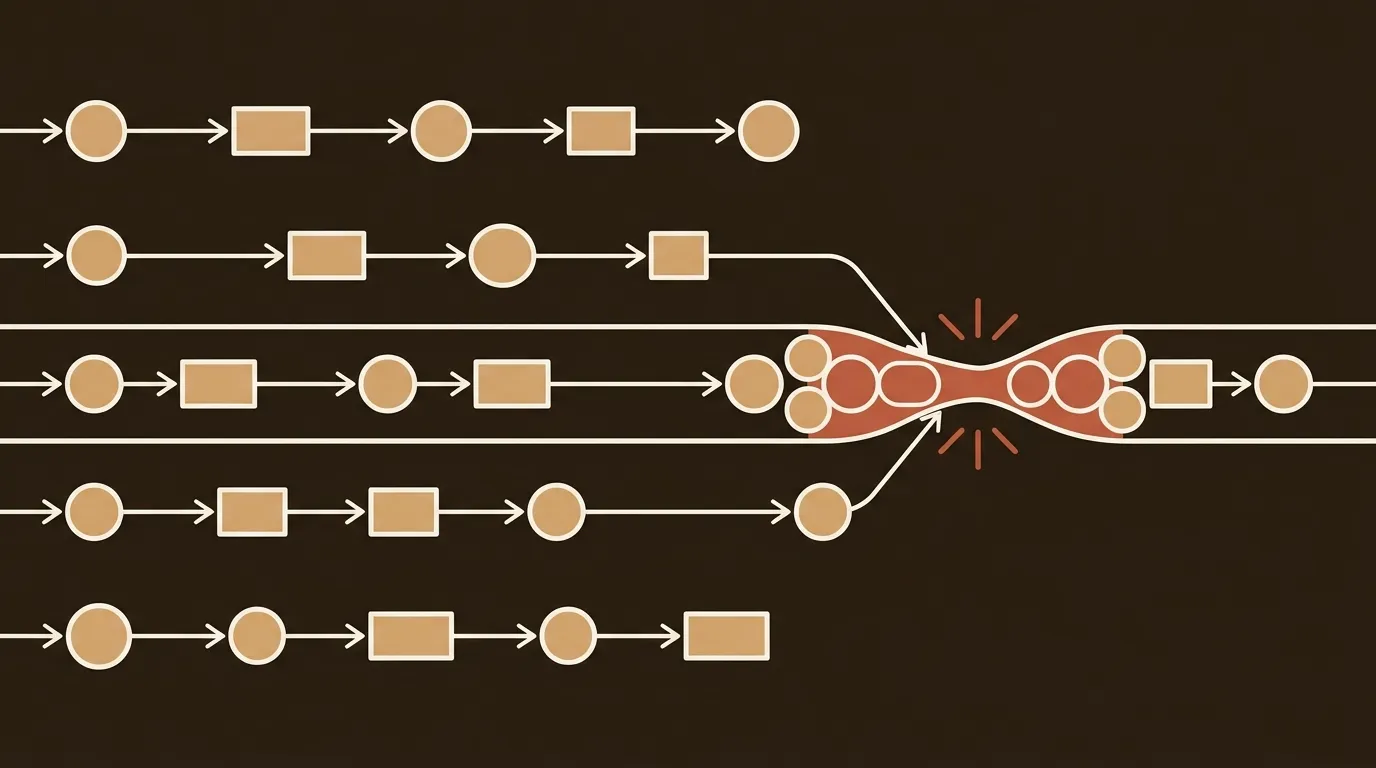

This isn't surprising if you think about it structurally. Building a content pipeline that handles research, generation, quality scoring, SEO optimization, and publishing requires integrating five to ten different systems. Each integration point is a maintenance burden. Each one can break independently.

Small B2B teams (one to three people) don't have the engineering bandwidth to build and maintain this kind of infrastructure. And yet, many of them are cobbling together ChatGPT plus Surfer SEO plus Ahrefs plus their CMS plus a spreadsheet for tracking, held together by manual copy-paste and good intentions.

That's not a pipeline. That's a series of disconnected tools with a human being serving as the glue. And that human being is also the one writing the quarterly board report and managing the paid ads budget.

What the 5% That Succeed Actually Do Differently

The MIT data shows that the small minority of successful AI deployments share specific traits: high domain specificity, deep workflow integration, and vendor-led implementations with clear KPIs.

Applied to content operations, this means the winners aren't the teams with the best AI writer. They're the teams that have instrumented every handoff point in their pipeline. They know exactly how long it takes to go from brief to published post. They know the cost per article including all post-generation overhead, not just the writing step. They measure organic traffic per post at 30, 60, and 180 days.
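Measuring at fixed checkpoints only works if each post is judged on complete windows. Here is a sketch, assuming daily organic sessions per post are available as a date-to-count mapping; the function name and the choice to report unfinished windows as `None` are illustrative.

```python
from datetime import date, timedelta
from typing import Optional


def checkpoint_traffic(published: date, daily_sessions: dict[date, int],
                       as_of: date,
                       checkpoints: tuple[int, ...] = (30, 60, 180)
                       ) -> dict[int, Optional[int]]:
    """Cumulative organic sessions in the first N days after publishing.

    A checkpoint whose window has not fully elapsed by `as_of` is None,
    so a young post is not judged on a partial 180-day number.
    """
    result: dict[int, Optional[int]] = {}
    for days in checkpoints:
        last_day = published + timedelta(days=days - 1)
        if last_day > as_of:
            result[days] = None
            continue
        result[days] = sum(n for d, n in daily_sessions.items()
                           if published <= d <= last_day)
    return result


published = date(2026, 1, 1)
# Illustrative data: 10 sessions a day for the first 100 days.
daily = {published + timedelta(days=i): 10 for i in range(100)}
print(checkpoint_traffic(published, daily, as_of=published + timedelta(days=99)))
# {30: 300, 60: 600, 180: None}
```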

The Marketing AI Institute's take emphasizes that success emerges when teams pick one pain point, execute well, and partner with vendors who use their own tools. That's pragmatic advice. Don't try to automate everything at once. Pick the most expensive handoff in your pipeline, automate it, prove the ROI, and expand from there.

For most content teams, the most expensive handoff is the gap between "draft done" and "post live and optimized." That's where the time goes. That's where the money goes. And that's where pilots go to die.

The Strategic Question for 2026

The question isn't which AI writing tool to adopt. GPT-4o, Claude, and Gemini all produce serviceable B2B blog drafts. The generation layer is approaching commodity status.

The real question is: which of the six post-generation steps are you instrumenting, and which are you leaving to manual effort and hope?

We don't think every team needs to automate all six steps on day one. But we do think every team needs to know what each step costs them. Track the hours. Calculate the per-post overhead. Then make an informed decision about which steps to automate first.
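The arithmetic fits in a spreadsheet, but here it is as a short sketch. All figures below (hours per step, a $60 hourly rate, $400 a month in tooling, 20 posts a month) are hypothetical placeholders for your own time log.

```python
def per_post_overhead(hours_per_step: dict[str, float], hourly_rate: float,
                      monthly_tool_cost: float, posts_per_month: int
                      ) -> tuple[dict[str, float], float]:
    """Return (labor cost per step, total post-generation overhead per post)."""
    if posts_per_month <= 0:
        raise ValueError("posts_per_month must be positive")
    labor = {step: hours * hourly_rate for step, hours in hours_per_step.items()}
    tooling_per_post = monthly_tool_cost / posts_per_month
    return labor, sum(labor.values()) + tooling_per_post


# Hypothetical time log: hours per post spent after the draft exists.
hours = {
    "keyword_validation": 0.5,
    "internal_linking": 0.75,
    "quality_review": 1.0,
    "metadata_and_publishing": 0.5,
    "performance_tracking": 0.25,
    "refresh": 0.5,
}
labor, total = per_post_overhead(hours, hourly_rate=60.0,
                                 monthly_tool_cost=400.0, posts_per_month=20)
print(total)                      # 230.0
print(max(labor, key=labor.get))  # quality_review
```

In this made-up example, quality review is the most expensive step per post, so it would be the first candidate to automate under the pick-one-pain-point approach.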

The 95% failure rate isn't a verdict on AI. It's a verdict on incomplete implementation. And incomplete implementation, for content teams, almost always means the same thing: they automated the writing and forgot about everything else.

References

- MIT report: 95% of generative AI pilots at companies are failing -- Fortune

- That Viral MIT Study Claiming 95% of AI Pilots Fail? Don't Believe the Hype -- Marketing AI Institute

- Why 95% of GenAI projects fail -- and why the 5% that survive matter -- Trullion

- 2026 State of Content Workflows -- Averi

- Ultimate Guide to Internal Linking Tools for Content Operations -- SeoProAI