Ninety-one percent of B2B marketing teams now use AI tools, up from 63% just a year ago. But here's the part that should make every marketing leader uncomfortable: only 41% can confidently prove ROI from those tools, down from 49% the previous year. More adoption. Less proof it's working. That's not a growth story. That's a measurement crisis.

We've watched this pattern develop across dozens of B2B content teams, and the underlying cause is almost always the same: teams bought AI writing tools, celebrated the speed improvements, then couldn't explain to their CFO why organic traffic didn't follow.

The Confidence Number Is Going the Wrong Direction

Let's sit with this for a second. AI adoption among marketing teams jumped nearly 30 percentage points in a single year. Budget allocation followed. Among $10B+ companies, a third now put 20% or more of marketing spend into AI, with 79% reporting at least 2x ROI. So some organizations are clearly making it work.

But the average team? The average team is drowning in output and starving for attribution.

Only 19% of content marketers using AI have implemented measurement frameworks that actually track AI-related performance indicators. The other 81% are flying blind, relying on proxies like "articles published per month" or "hours saved per writer." Those metrics feel productive. They look good in a Monday standup. And they tell you almost nothing about whether your content investment is generating pipeline.

Measuring the Wrong Part of the Machine

The root problem is specificity. Or rather, the lack of it.

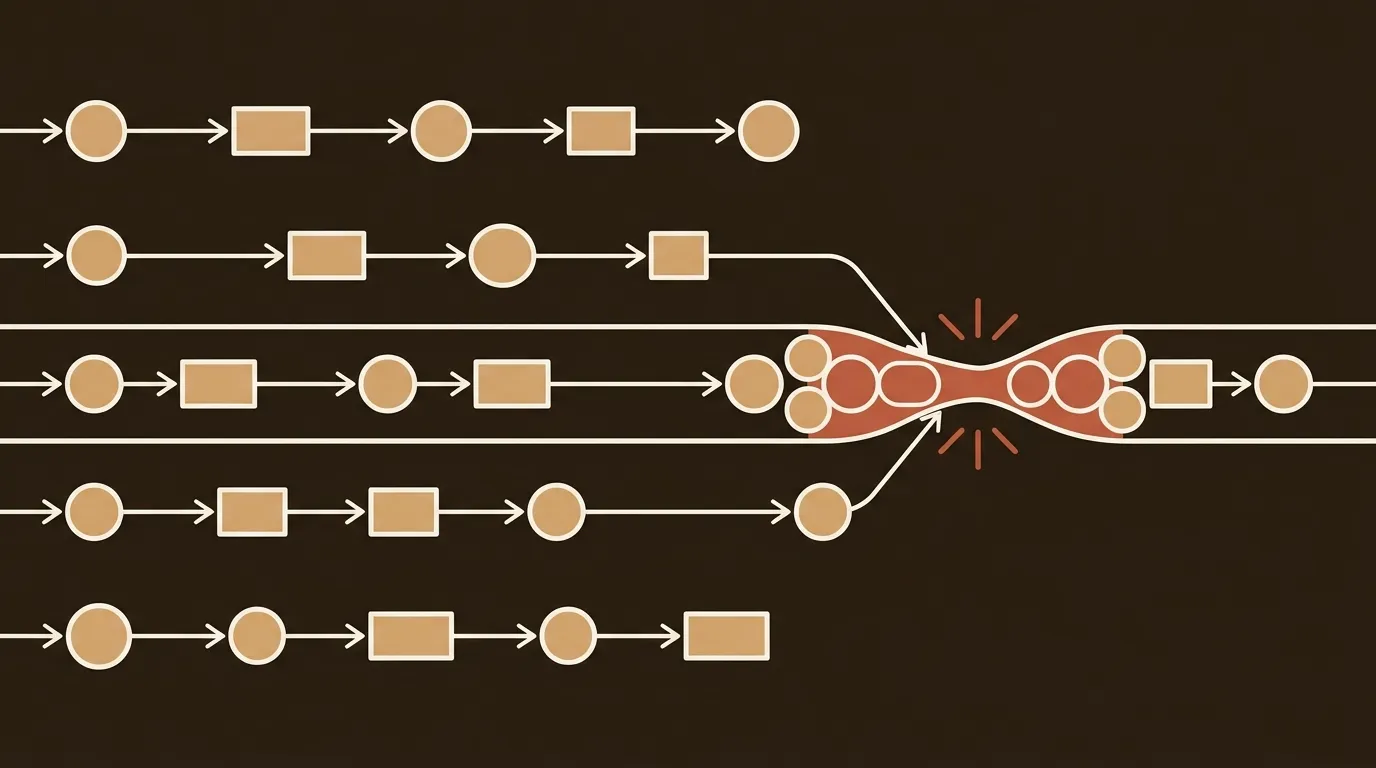

Most B2B teams measure AI's contribution at exactly one point: content generation. Did the AI draft arrive faster than a freelancer would have? Yes. Did it cost less per word? Almost certainly. Did it require fewer revision rounds? Sometimes. Those are real gains. But they're gains in the production phase only, and production is maybe 30% of what determines whether a blog post actually ranks, drives traffic, and converts.

To justify ROI for AI-driven workflow integration, teams need to measure across three dimensions: time saved, output quality, and revenue lift. Pre/post comparisons, cost-substitution models, and performance attribution frameworks all matter. Yet most organizations stop at the first dimension and call it done.

Here's what gets lost when you only track the writing step: research quality degrades silently, because nobody's measuring whether the AI pulled from authoritative sources or hallucinated a stat. SEO optimization becomes an afterthought bolted on post-draft. Quality gates don't exist, so mediocre content ships alongside good content and drags domain authority down. And distribution? Most teams still handle that manually, inconsistently, or not at all.

The compounding economics of the full publishing lifecycle are invisible if you're only watching the text generation part.

Why "Hours Saved" Doesn't Survive the CFO Conversation

We've been in enough budget meetings to know: "hours saved" is a weak argument. It's not that it's untrue. It's that it doesn't connect to a line item the finance team cares about. You saved your writer 12 hours per week. Great. Did headcount go down? Did output quality go up measurably? Did organic traffic increase? Did any of that translate to qualified leads?

The multi-touch nature of AI's influence makes this genuinely hard. AI tools often touch every stage of the content journey, and traditional last-touch attribution models completely miss this distributed impact. So AI ends up looking like it's not delivering value even when it's influencing outcomes at every step.

This isn't a technology failure. It's an instrumentation failure. The tools themselves work fine. The measurement infrastructure around them doesn't exist.

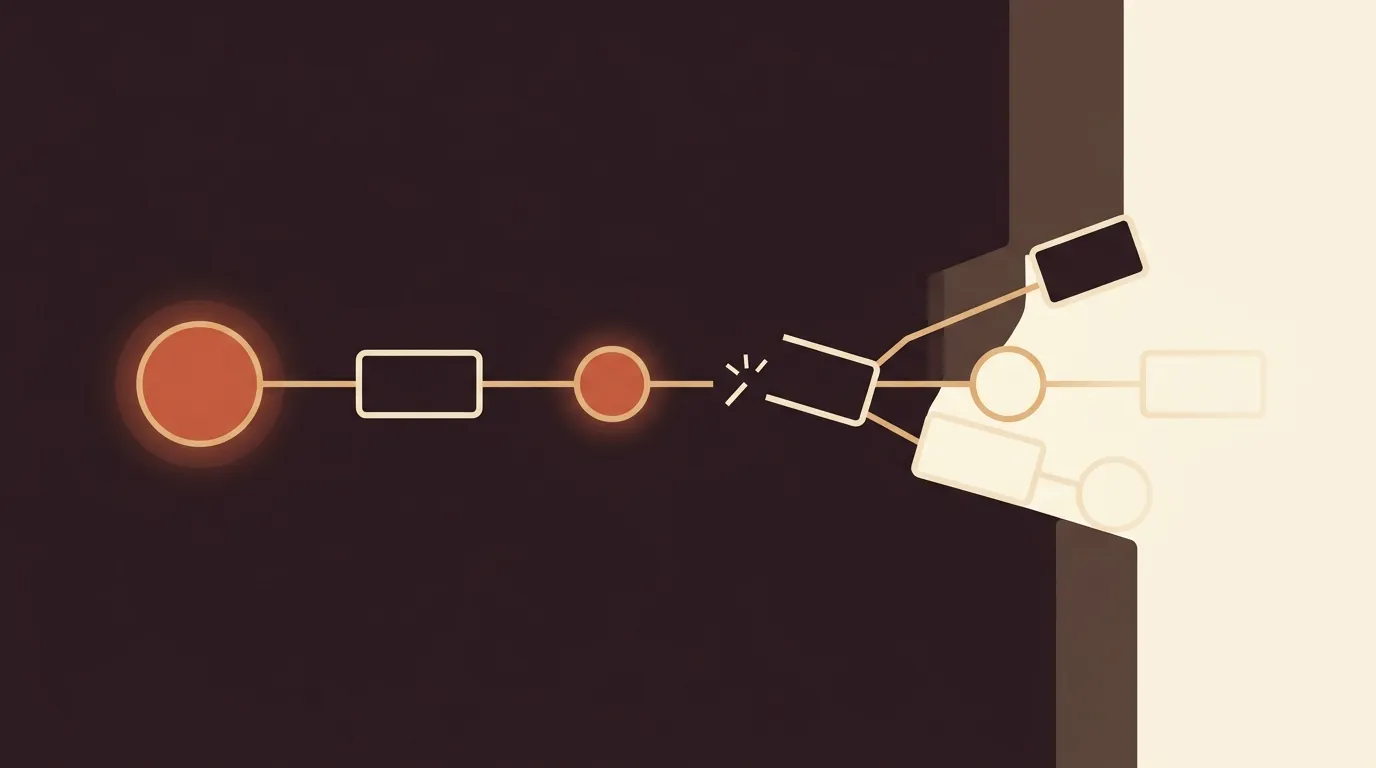

The Maturity Gap Nobody's Talking About

There's a stark divide forming between teams that use AI and teams that have integrated AI into a system. 71% of B2B firms use AI to produce content, but 56% see its primary value in basic execution, things like first drafts, rephrasing, and email subject lines.

Only about 23% have AI agents fully integrated into their marketing stack in production. The rest are running disconnected tools that don't share context, don't maintain brand voice across assets, and don't compound in value over time.

The gap between these maturity levels is enormous. We've seen benchmarks showing teams at advanced maturity producing 5-10x more content at 75-85% lower cost per article, with organic growth curves that less mature teams simply cannot replicate. And the cost to move from basic to advanced isn't primarily financial. It's operational. It requires rethinking what you measure, where you measure it, and who owns the pipeline end to end.

This is genuinely messy, by the way. There's no clean playbook. Every team's stack is different, every CMS has its own constraints, and the definition of "integrated" varies wildly depending on who you ask. But the direction is clear: isolated AI tool usage is plateauing in value.

A Quick Calculation

Say you're a 2-person marketing team publishing 8 posts per month with a freelancer costing $350 per article. That's $2,800/month, $33,600/year. You switch to an AI writing tool and cut the per-article cost to $80 (your time for editing plus the tool subscription). You're now spending $640/month on content production. Savings: $2,160/month.

Sounds like a win. But if those 8 AI-generated posts rank 40% worse on average because nobody optimized them for search intent, ran quality checks, or added proper metadata before publishing, your organic traffic per post drops. After six months, you've "saved" $12,960 but your organic traffic is flat or declining. The CFO sees a content budget line item that isn't delivering growth. That's how AI writing tools get cut from budgets despite working exactly as advertised.

Now imagine the alternative: the same 8 posts go through research validation, SEO optimization before publishing, automated quality scoring, and proper metadata tagging. Cost per post is higher, maybe $120, which puts monthly production at $960. But average ranking position improves, organic traffic compounds, and after six months you have a defensible growth curve to show leadership. The $320/month difference in cost is irrelevant next to the attribution story you can now tell.
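For anyone who wants to rerun this with their own numbers, here is a minimal sketch of the arithmetic above. The per-post costs and the 8-post cadence come from the example; the function and variable names are ours and purely illustrative.

```python
POSTS_PER_MONTH = 8  # publishing cadence from the example above


def monthly_cost(cost_per_post, posts=POSTS_PER_MONTH):
    """Monthly content production cost in dollars."""
    return cost_per_post * posts


# Per-post costs taken from the scenario in the text.
costs = {
    "freelancer": monthly_cost(350),
    "ai_only": monthly_cost(80),
    "full_pipeline": monthly_cost(120),
}

# Savings relative to the freelancer baseline, per month and over six months.
savings = {
    name: costs["freelancer"] - cost
    for name, cost in costs.items()
    if name != "freelancer"
}
six_month_savings = {name: value * 6 for name, value in savings.items()}

for name, cost in costs.items():
    print(f"{name}: ${cost}/month")
for name, value in six_month_savings.items():
    print(f"{name} saves ${value} over six months")
```

Note what the script cannot tell you: it covers only the cost side. Ranking and traffic outcomes have to come from your analytics, which is the whole point.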

What the Leaders Are Actually Measuring

The organizations reporting strong AI ROI aren't using better AI models. They're measuring different things.

Google AI Overviews now appear for 13.14% of all search queries, and Google Search Console treats those impressions identically to standard organic results. You can't tell, using traditional tools, whether your traffic came from a standard SERP listing or an AI Overview citation. That's a measurement blind spot that's only growing.

The teams closing this gap are building new metrics into their workflows: Citation Rate (how often their content gets cited in AI-generated answers), AI-Sourced Pipeline (leads that originate from AI Overview referrals), and Share of Voice in AI responses for their target keywords.
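As a rough illustration of how Citation Rate and Share of Voice might be computed, here is a small sketch. It assumes you already collect, by whatever means, the domains cited in AI-generated answers for a set of tracked queries. The sample data, domain names, and function names are all hypothetical, and teams define these metrics in different ways.

```python
from collections import Counter

# Hypothetical sample: for each tracked query, the domains cited
# in the AI-generated answer. Real data would come from your own monitoring.
sampled_answers = {
    "b2b content roi": ["example.com", "competitor-a.com"],
    "ai content measurement": ["competitor-a.com", "competitor-b.com"],
    "content attribution models": ["example.com"],
    "marketing ai benchmarks": [],
}


def citation_rate(answers, domain):
    """Fraction of sampled answers that cite `domain` at least once."""
    if not answers:
        return 0.0
    cited = sum(1 for domains in answers.values() if domain in domains)
    return cited / len(answers)


def share_of_voice(answers, domain):
    """Share of all citations in the sample that point to `domain`."""
    counts = Counter(d for domains in answers.values() for d in domains)
    total = sum(counts.values())
    return counts[domain] / total if total else 0.0


print(citation_rate(sampled_answers, "example.com"))
print(share_of_voice(sampled_answers, "example.com"))
```

In this made-up sample, example.com is cited in 2 of 4 answers and accounts for 2 of 5 total citations. AI-Sourced Pipeline is a different kind of metric: it depends on referral and CRM data rather than on answer sampling, so it is left out here.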

AI traffic converts at 14.2% compared to Google's 2.8%. That's a 5x conversion premium. So if you're not tracking where AI-referred traffic goes after landing on your site, you're missing your highest-converting channel entirely.
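To put that premium in concrete terms, here is a quick sketch using the two conversion rates quoted above. The 1,000-visit figure is an arbitrary assumption chosen only for illustration.

```python
AI_CONVERSION_RATE = 0.142      # from the figure quoted above
GOOGLE_CONVERSION_RATE = 0.028  # from the figure quoted above


def expected_conversions(visits, rate):
    """Expected number of conversions for a given visit count and rate."""
    return visits * rate


premium = AI_CONVERSION_RATE / GOOGLE_CONVERSION_RATE
visits = 1000  # hypothetical traffic volume per channel

print(f"Conversion premium: {premium:.2f}x")
print(f"AI-referred: {expected_conversions(visits, AI_CONVERSION_RATE):.0f} conversions")
print(f"Google organic: {expected_conversions(visits, GOOGLE_CONVERSION_RATE):.0f} conversions")
```

At equal volume, that works out to roughly 142 conversions versus 28. In practice AI-referred volume is usually much smaller, which is exactly why it needs to be tracked separately rather than lumped into organic.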

These aren't exotic metrics. They require some setup, sure. But the infrastructure to track them exists today. Most teams just haven't prioritized it because they're still celebrating the "we published 40% more posts this quarter" metric.

Closing the Gap Before Budgets Lock In

B2B content budgets are increasing heading into 2026. The window to demonstrate ROI before the next budget cycle is narrow.

The companies that will defend and grow their AI content investment share a few traits. They instrument the pipeline, not just the output. They attribute organic traffic growth to the system (research + optimization + quality + publishing cadence), not to any single AI tool. And they tie everything back to metrics that survive a finance review: qualified leads, pipeline velocity, and revenue per marketing dollar.

High-performing teams design repeatable pipelines that turn a single strategic idea into a full set of assets, with governance built in from the start, clear ownership, and continuous refinement based on performance data.

The competitive advantage has moved. It's no longer about having an AI writer. It's about having a system that proves the AI writer (and everything around it) is worth the investment.

We keep coming back to a simple observation: the teams struggling with AI ROI aren't struggling because AI doesn't work. They're struggling because they automated the easiest part of content marketing and left the hard parts (research, quality control, SEO, attribution) running on the same manual, untracked processes they've always had. Fix the measurement infrastructure first. The ROI numbers will follow, and they'll be real.

References

- Where AI Will Be Used by B2B Marketers in 2026: DGR Interview with Jasper's Loreal Lynch - Demand Gen Report

- How to prove ROI from AI workflow integration in B2B marketing - MarTech

- Content Marketing ROI 2026: Only 19% Track AI KPIs - Digital Applied

- The State of AI Content Marketing: 2026 Benchmarks Report - Averi

- Google AI Overviews Traffic Impact: Measuring ROI & Pipeline Attribution - Discovered Labs