AI-assisted content teams now publish a median of 17 articles per month. Non-AI teams manage about 12. That's a 42% velocity increase, and it's well-documented across recent benchmarks.

But here's the number that should worry you more: only 19% of those teams track AI-specific KPIs. The other 81% are publishing more content with no structured way to prove it's working. They're measuring outputs (traffic, maybe leads) without connecting those outputs to the AI-specific inputs and efficiency gains that determine actual ROI.

The operational gap isn't content volume. It's measurement infrastructure. And the gap is widening.

Five More Articles a Month Doesn't Mean Five More Deals

The production efficiency is real. A content engine with an integrated workflow can move from topic approval to published piece in 1.5 to 2.5 hours including human review, compared to 8 to 12 hours for manual processes or 2 to 4 weeks in a typical agency workflow. Over half of content professionals in the US and UK say they're already using AI to accelerate creation and organization.

So teams are faster. The question nobody's answering well: faster at producing what, exactly?

We've seen this pattern repeatedly. A small B2B team adopts AI writing tools, doubles their publishing cadence, watches aggregate traffic tick up, and calls it a win. Six months later, pipeline hasn't moved. The CEO asks why the blog budget went up 30% while demo requests stayed flat. Nobody has an answer because nobody built the measurement layer to trace the connection between volume and revenue.

This is not a hypothetical. Only 8% of B2B marketers believe they successfully gauge their content's ROI and influence on revenue, yet content marketing accounts for 25% to 40% of B2B marketing budgets. That disconnect is staggering. And adding AI tools without adding measurement discipline just makes the problem larger, faster.

Google's March 2026 Update Made This Urgent

Publishing cadence used to be a defensible moat. Cover enough keywords, publish frequently enough, and you'd accumulate domain authority over time. Google's March 2026 core update broke that strategy.

The numbers are stark. Pages with original data, proprietary insights, or genuine expert commentary gained an average of 22% in visibility. Content backed by proprietary data now ranks 2.3x higher post-update than content without original research. Meanwhile, the update significantly devalued scaled, low-effort AI content, the kind generated at volume without human editing or first-hand experience.

HubSpot reportedly lost 70 to 80% of its organic traffic after years of prioritizing broad keyword coverage over original insight. If that doesn't get your attention, we're not sure what will.

For small B2B teams, this means a volume-first strategy without quality measurement is actively dangerous. You're not just wasting money on content that doesn't convert. You're potentially diluting your domain's quality signals with posts that hurt your rankings.

The mandate from Google is explicit: content must introduce concepts, frameworks, or data that don't appear in top-ranking competitors. Think "We analyzed 50,000 B2B emails and found that subject line length affects open rates 40% less than sender identity" rather than "10 Tips for Better Email Marketing."

Three Metrics That Actually Matter

We think most B2B teams need exactly three core metrics to build what we'd call a minimal viable measurement stack. Not a dashboard with 47 charts. Three numbers, tracked consistently.

Cost Per Article (Including the Hidden Costs)

This sounds obvious. It is not.

Content velocity and cost per content unit are the foundational AI metrics, but most teams calculate them wrong. They count the AI tool subscription and maybe the writer's time. They forget the editing pass, the SEO review, the image sourcing, the CMS formatting, and the internal review cycle.

Here's a real calculation we've run. Say a marketing manager earning $85K/year spends 4 hours on a manually written blog post. Loaded cost per hour (including benefits and overhead) is roughly $55. That's $220 in labor per article, plus maybe $150 for a freelance writer's draft, plus $30 for stock images. Total: $400 per article.

With AI-assisted workflows, that same manager spends 1.5 hours on review and editing, so labor drops to $82.50. The AI tool costs maybe $0.60 to $2.00 per article depending on the plan, and images are AI-generated at little or no extra cost. Total: roughly $85 per article.

That's a 79% cost reduction. But you only know that if you're tracking it. And you only know if it's worth it if you connect cost per article to the next metric.
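If you want to sanity-check those numbers or reuse them for your own team, here's the same arithmetic as a small Python sketch. The rates and fees are the illustrative figures from the example above, not benchmarks, and the `ArticleCost` fields are our own hypothetical breakdown; add fields for SEO review, CMS formatting, or internal review time if you track those hidden costs separately.

```python
from dataclasses import dataclass


@dataclass
class ArticleCost:
    """Hypothetical per-article cost breakdown; extend with your own hidden costs."""
    review_hours: float          # internal staff time spent on the piece
    loaded_hourly_rate: float    # salary plus benefits and overhead, per hour
    freelance_fee: float = 0.0   # outside writer's draft, if any
    image_cost: float = 0.0      # stock or generated images
    ai_tool_cost: float = 0.0    # per-article share of the AI subscription

    def total(self) -> float:
        labor = self.review_hours * self.loaded_hourly_rate
        return labor + self.freelance_fee + self.image_cost + self.ai_tool_cost


def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# Illustrative figures from the example, not benchmarks.
manual = ArticleCost(review_hours=4, loaded_hourly_rate=55,
                     freelance_fee=150, image_cost=30)
assisted = ArticleCost(review_hours=1.5, loaded_hourly_rate=55,
                       ai_tool_cost=2.00)  # upper end of the $0.60-$2.00 range

print(f"Manual:      ${manual.total():.2f}")
print(f"AI-assisted: ${assisted.total():.2f}")
print(f"Reduction:   {percent_reduction(manual.total(), assisted.total()):.0f}%")
```

Run as-is, it prints $400.00, $84.50, and a 79% reduction, which matches the rounded figures above. Swapping in your own loaded rate and review hours is usually where the real surprises show up.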

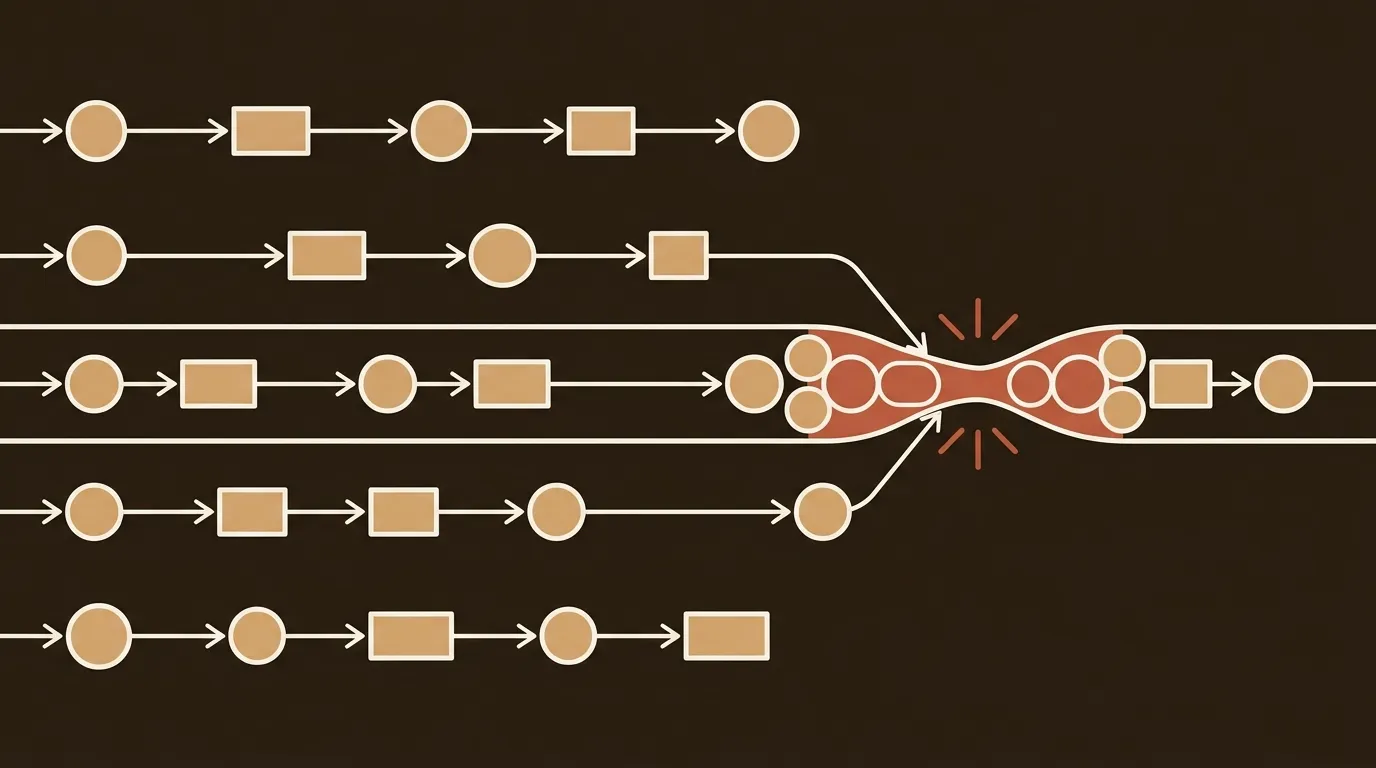

Traffic Per Post Cohort

Aggregate traffic metrics are nearly useless for evaluating AI content performance. A team publishing 17 articles per month will see overall traffic rise simply because more pages exist. The question is whether per-piece performance is holding steady, improving, or degrading.

Segment your content into cohorts. The most useful split we've found: by publishing method (AI-drafted then human-edited vs. fully human-written), by content type (thought leadership vs. keyword-targeted vs. product pages), and by publication month.

Then track 90-day ranking trends by content cluster and origin type. This surfaces systematic quality differences between AI and human content on equivalent topics. You might find your AI-assisted how-to guides perform identically to human-written ones while your AI-assisted opinion pieces underperform. That's actionable. "Overall traffic is up 15%" is not.

A simple spreadsheet works for this. Columns: article URL, publish date, production method, content type, 30-day sessions, 60-day sessions, 90-day sessions, target keyword, current ranking position. Update monthly. You'll spot patterns within two quarters.
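Once the sheet exists, the cohort comparison itself is a few lines. Here's a minimal Python sketch using only the standard library. The column names follow the sheet described above; the snake_case tag values and the sample rows are made up for illustration, so replace `SHEET` with a CSV export of your own tracking sheet.

```python
import csv
import io
from collections import defaultdict
from statistics import median

# Hypothetical export of the tracking sheet; rows are made up for illustration.
SHEET = """url,publish_date,production_method,content_type,sessions_30d,sessions_60d,sessions_90d,target_keyword,ranking_position
/blog/a,2026-01-05,ai_edited,keyword_targeted,120,260,410,kw a,8
/blog/b,2026-01-12,human,keyword_targeted,110,250,400,kw b,9
/blog/c,2026-01-19,ai_edited,thought_leadership,40,70,90,kw c,31
/blog/d,2026-01-26,human,thought_leadership,90,200,330,kw d,12
/blog/e,2026-02-02,ai_edited,keyword_targeted,100,230,380,kw e,10
"""


def cohort_medians(rows, keys=("production_method", "content_type"), metric="sessions_90d"):
    """Median of `metric` for each cohort defined by the `keys` columns."""
    groups = defaultdict(list)
    for row in rows:
        cohort = tuple(row[k] for k in keys)
        groups[cohort].append(float(row[metric]))
    return {cohort: median(values) for cohort, values in groups.items()}


rows = list(csv.DictReader(io.StringIO(SHEET)))
for cohort, value in sorted(cohort_medians(rows).items()):
    print(" / ".join(cohort), f"median 90-day sessions: {value:.0f}")
```

We use medians rather than means because one breakout post can drag a small cohort's average far away from what a typical piece does. Pass `keys=("content_type",)`, or add a publish-month column and group on it, to slice the same data other ways.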

Conversion Rate by Publishing Frequency

This is the metric that separates content-as-activity from content-as-pipeline.

Track conversion rates on blog-sourced leads (visitor-to-lead and MQL-to-SQL, for example) by publishing-frequency window. During months you published 8 articles, what was the MQL-to-SQL rate on blog-sourced leads? During months you published 20? If more than doubling output doesn't move conversions, or worse, if it dilutes them, you have a clear signal.
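A rough version of that comparison, sketched in Python and assuming you can export monthly counts of blog-sourced MQLs and SQLs from your CRM. The bucket edges, the `Month` fields, and the four months of figures are hypothetical. The sketch also ignores conversion lag: leads that convert this month may have first read content published months earlier, so treat the output as directional.

```python
from dataclasses import dataclass


@dataclass
class Month:
    label: str
    articles_published: int
    blog_mqls: int  # MQLs attributed to blog content that month
    blog_sqls: int  # of those, the ones sales accepted as SQLs


def frequency_bucket(articles: int) -> str:
    """Hypothetical bucket edges; choose ones that split your own history sensibly."""
    if articles < 10:
        return "under 10 articles"
    if articles < 16:
        return "10-15 articles"
    return "16+ articles"


def mql_to_sql_by_bucket(months: list) -> dict:
    """Pooled MQL-to-SQL rate per bucket: total SQLs divided by total MQLs."""
    totals: dict = {}
    for m in months:
        bucket = frequency_bucket(m.articles_published)
        mqls, sqls = totals.get(bucket, (0, 0))
        totals[bucket] = (mqls + m.blog_mqls, sqls + m.blog_sqls)
    return {b: (sqls / mqls if mqls else 0.0) for b, (mqls, sqls) in totals.items()}


months = [  # made-up figures for illustration
    Month("2026-01", 8, 40, 10),
    Month("2026-02", 8, 36, 9),
    Month("2026-03", 20, 70, 14),
    Month("2026-04", 20, 80, 16),
]
for bucket, rate in mql_to_sql_by_bucket(months).items():
    print(f"{bucket}: {rate:.0%} MQL-to-SQL")
```

Pooling (total SQLs over total MQLs) rather than averaging the monthly rates keeps a quiet month with three MQLs from swinging the result.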

We'll be honest: this is genuinely messy to measure for small teams. Attribution is imperfect. Sales cycles in B2B can stretch months. A prospect who reads three blog posts in January might not request a demo until April. You won't get clean data on this for at least two quarters, and even then it'll be directional rather than precise.

But directional is enough. Directional beats guessing.

Building This in Practice, Week by Week

Weeks 1-2: Baseline and Tagging

Tag every piece of content in your CMS with production method and content type. Go back and tag the last 6 months if you can. Set up a shared tracking sheet with the columns listed above. Pull current traffic and ranking data from Google Search Console and your analytics tool.

This is boring work. Do it anyway.
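The most common way this step goes wrong is tag drift: "AI", "ai-assisted", and "AI+edit" all end up meaning the same thing. Fixing the vocabulary once and checking rows against it prevents that. Here's a minimal Python sketch; the tag values and the `validate_tags` helper are our own hypothetical choices, and the columns mirror the tracking sheet described earlier.

```python
from enum import Enum


class ProductionMethod(str, Enum):
    AI_DRAFTED_HUMAN_EDITED = "ai_edited"
    HUMAN_WRITTEN = "human"


class ContentType(str, Enum):
    THOUGHT_LEADERSHIP = "thought_leadership"
    KEYWORD_TARGETED = "keyword_targeted"
    PRODUCT_PAGE = "product_page"


# Columns of the tracking sheet described in "Traffic Per Post Cohort".
SHEET_COLUMNS = [
    "url", "publish_date", "production_method", "content_type",
    "sessions_30d", "sessions_60d", "sessions_90d",
    "target_keyword", "ranking_position",
]

# Which columns must use a fixed vocabulary, and which one.
TAGGED_COLUMNS = {"production_method": ProductionMethod, "content_type": ContentType}


def validate_tags(row: dict) -> list:
    """Return a list of problems with one sheet row; an empty list means the row is clean."""
    problems = []
    missing = [c for c in SHEET_COLUMNS if c not in row]
    if missing:
        problems.append(f"missing columns: {', '.join(missing)}")
    for column, vocabulary in TAGGED_COLUMNS.items():
        try:
            vocabulary(row.get(column))  # raises ValueError for unknown tags
        except ValueError:
            allowed = ", ".join(v.value for v in vocabulary)
            problems.append(f"{column}={row.get(column)!r} not in: {allowed}")
    return problems


row = dict.fromkeys(SHEET_COLUMNS, "")
row.update(production_method="ai_edited", content_type="keyword_targeted")
print(validate_tags(row))                                 # clean row
print(validate_tags({**row, "production_method": "AI"}))  # drifted tag
```

Running the check over the back-tagged six months before you start analyzing catches most inconsistencies while they're still cheap to fix.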

Weeks 3-6: Pipeline Wiring

Set up custom GA4 events for your key conversion actions (demo requests, contact form submissions, newsletter signups, gated content downloads), and mark them as key events, which is what GA4 now calls conversions. GA4's event-based model shows which pages convert visitors to leads, but only if you configure it properly. Most teams have GA4 installed but haven't set up the conversion events that matter for B2B.
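Most conversion events are configured in the browser through Google Tag Manager or the gtag snippet. If some conversions only exist server-side (say, a demo request your backend or CRM processes), GA4's Measurement Protocol can record them. Here's a minimal Python sketch that builds such a request without sending it. `MEASUREMENT_ID`, `API_SECRET`, the parameter names, and every event name except `generate_lead` (one of GA4's recommended events) are placeholders we made up.

```python
import json
import re
import urllib.parse
import urllib.request

MP_ENDPOINT = "https://www.google-analytics.com/mp/collect"
MEASUREMENT_ID = "G-XXXXXXXXXX"  # placeholder for your GA4 measurement ID
API_SECRET = "your-api-secret"   # placeholder; created in the GA4 admin UI

# Hypothetical mapping from B2B conversion actions to GA4 event names.
CONVERSION_EVENTS = {
    "demo_request": "generate_lead",
    "contact_form": "contact_form_submit",
    "newsletter_signup": "newsletter_signup",
    "gated_download": "gated_content_download",
}

# GA4 event names start with a letter, use letters/digits/underscores, max 40 chars.
EVENT_NAME = re.compile(r"[A-Za-z][A-Za-z0-9_]{0,39}")


def build_conversion_request(action: str, client_id: str, page_path: str) -> urllib.request.Request:
    """Build (but do not send) a Measurement Protocol request for one conversion."""
    name = CONVERSION_EVENTS[action]
    if not EVENT_NAME.fullmatch(name):
        raise ValueError(f"invalid GA4 event name: {name}")
    body = {
        "client_id": client_id,
        "events": [{
            "name": name,
            # Hypothetical custom parameters describing where the lead came from.
            "params": {"page_path": page_path, "conversion_action": action},
        }],
    }
    query = urllib.parse.urlencode({"measurement_id": MEASUREMENT_ID, "api_secret": API_SECRET})
    return urllib.request.Request(
        f"{MP_ENDPOINT}?{query}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_conversion_request("demo_request", "123.456", "/blog/some-post")
print(req.full_url)
```

Pass the request to `urllib.request.urlopen` to send it. While testing, point it at the `/debug/mp/collect` validation endpoint instead, since the production endpoint accepts malformed events without returning an error. The `client_id` should be the visitor's GA client ID so GA4 can associate the event with the same visitor who read the post.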

Weeks 7-12: First Review Cycle

Review efficiency and quality metrics weekly in editorial standups. They're leading indicators. Review performance metrics monthly in marketing team reviews. They're lagging indicators that reflect publishing decisions you made 4 to 12 weeks earlier. Quarterly, compare all three views against targets and prior periods.

Month 4 and Beyond

By now you should have enough data to calculate a real ROI number. Track total cost, total attributed revenue, ROI ratio, and quarter-over-quarter efficiency change. Pick one headline metric for AI impact, something like "AI reduced cost per piece by 58% QoQ while maintaining per-post traffic within 5% of human-written baseline."

That sentence, backed by your data, is worth more than any pitch deck.
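Here's a Python sketch of that quarterly roll-up. We define ROI as (attributed revenue minus total cost) divided by total cost, and efficiency change as the quarter-over-quarter change in cost per piece; both definitions are assumptions, and the two quarters of figures are placeholders. Attributed revenue is only as good as your attribution model, so keep the model fixed from quarter to quarter.

```python
from dataclasses import dataclass


@dataclass
class Quarter:
    label: str
    total_cost: float          # all content spend: labor, tools, freelancers, images
    attributed_revenue: float  # revenue your attribution model credits to content
    pieces_published: int

    @property
    def roi(self) -> float:
        """(attributed revenue - total cost) / total cost."""
        return (self.attributed_revenue - self.total_cost) / self.total_cost

    @property
    def cost_per_piece(self) -> float:
        return self.total_cost / self.pieces_published


def qoq_change(previous: float, current: float) -> float:
    """Percentage change from the previous quarter to the current one."""
    return (current - previous) / previous * 100


# Placeholder figures for illustration only.
q1 = Quarter("Q1", total_cost=12_000, attributed_revenue=30_000, pieces_published=30)
q2 = Quarter("Q2", total_cost=9_000, attributed_revenue=36_000, pieces_published=45)

for q in (q1, q2):
    print(f"{q.label}: ROI {q.roi:.2f}, cost per piece ${q.cost_per_piece:.0f}")
print(f"Cost per piece QoQ: {qoq_change(q1.cost_per_piece, q2.cost_per_piece):+.0f}%")
```

With these placeholder figures it prints an ROI of 1.50 for Q1, 3.00 for Q2, and a -50% change in cost per piece. Keeping the same definitions every quarter matters more than which definitions you pick.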

What If You Don't Have Proprietary Data?

Not every B2B team can run original research. Some are too small. Some operate in niches where data collection is impractical.

The alternative is topical authority. Google's ranking algorithms increasingly favor websites that demonstrate clear authority over a niche. This means depth over breadth. Fewer topics covered with more thoroughness. Internal linking structures that show topical coverage. Consistent publishing within a defined subject area over time.

For a 2-person marketing team, this might mean publishing 10 articles per month on 3 tightly related topics instead of 20 articles scattered across 15 topics. The per-piece investment goes up. The aggregate authority signal goes up more.

And you'll only know which approach works better if you're measuring. Which brings us back to the stack.

Consistency Beats Sophistication

A quarterly ROI report that uses the same structure and metric definitions for four consecutive quarters produces comparative data far more actionable than a single sophisticated report. We've seen teams spend weeks building beautiful dashboards in Looker or Tableau only to abandon them because the underlying data definitions kept changing.

Start simple. A Google Sheet with three tabs (cost tracking, traffic cohorts, conversion events) updated on a fixed schedule beats any BI tool used inconsistently.

The 81% of AI-assisted teams not tracking AI-specific KPIs aren't failing because they lack tools. They're failing because they never decided which three numbers actually matter and committed to watching them every month. The measurement stack doesn't need to be complex. It needs to exist, and it needs to persist long enough to reveal patterns.

Publishing 17 articles a month is a capability. Proving those 17 articles generate pipeline is a competitive advantage. The teams that figure this out in 2026 will pull away from the ones still celebrating word count.