Google's December 2025 core update finished rolling out on December 29, and the wreckage is still being tallied. Nearly 15% of pages that held Top 10 positions before the update vanished from the Top 100 entirely. Generic content optimized for keywords rather than people took the hardest hit, with sites showing weak E-E-A-T signals losing 45-80% of their visibility.

But here's what caught our attention: the sites that gained weren't the ones with the most backlinks or the oldest domains. They were the ones with identifiable, credible authors.

Keyword Density Lost. Author Credibility Won.

Google now assigns what amounts to an authenticity score, evaluating whether content reflects genuine expertise or was manufactured for ranking purposes. Language patterns like "in my testing" and "our research found," specificity markers such as original data, and verifiable author bios all feed into this assessment.

The system has moved beyond matching keywords to verifying entities. Google's Knowledge Graph now checks whether an author is a verified entity with credentials that hold up outside the blog post itself. A medical article written by "The Content Team" gets treated differently than one linked to a physician with a verifiable NPI number and published papers.

This isn't limited to health or finance anymore. In every niche, specialists gained ground while generalists lost it.

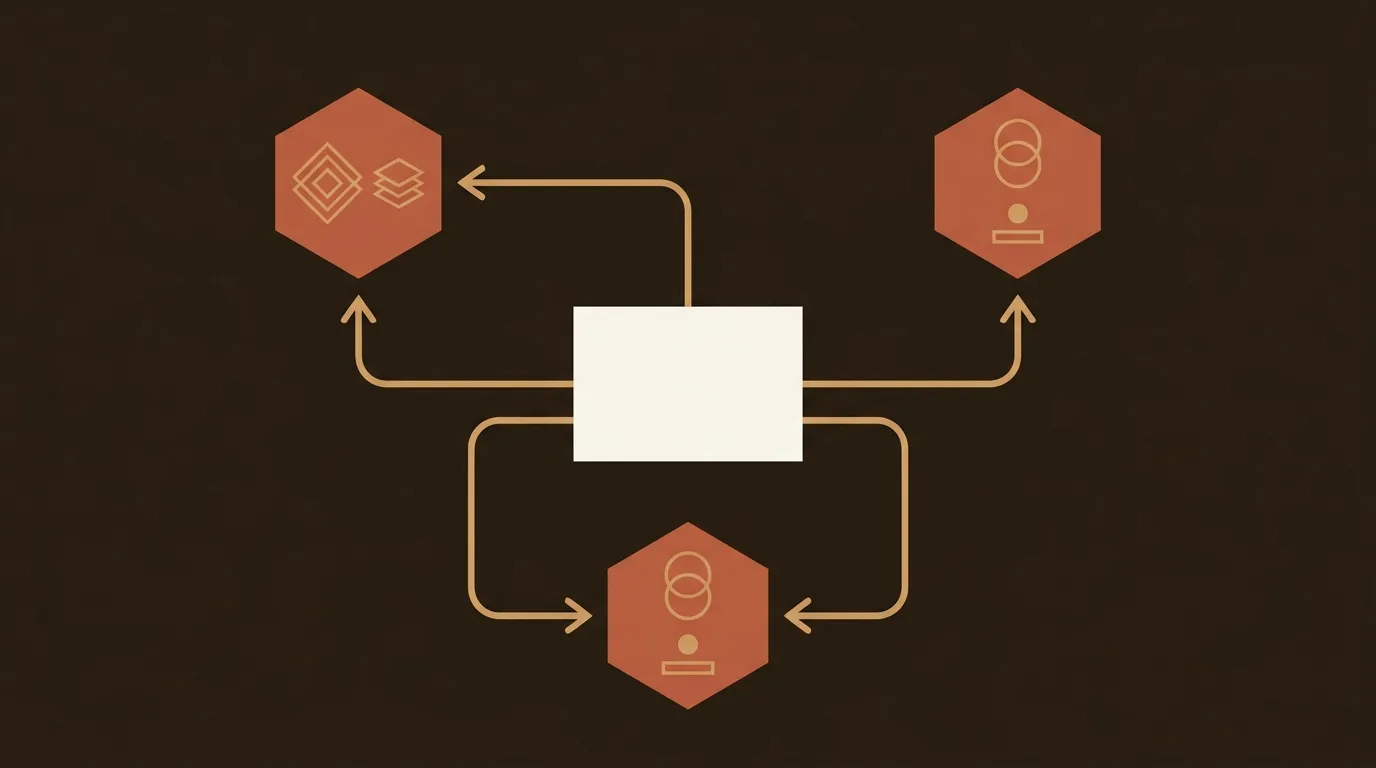

AI Search Engines Care About the Same Signals (Differently)

The timing matters. Over 400 million people use ChatGPT weekly. Google's AI Overviews appear on more than half of all search results pages. And 43.2% of pages ranking #1 in Google are cited by ChatGPT, which is 3.5x the rate of pages outside the top 20.

So traditional rankings and AI citations are becoming linked, but they don't reward the same things. The correlation between Domain Authority and AI citations has dropped to r=0.18, and 47% of AI Overview citations now come from pages ranking below position #5. AI systems reward the authority of the content itself, not the age of the domain.

That distinction is worth sitting with. A three-person team publishing well-attributed, topically consistent content can earn AI citations that a large site with thin, unsigned articles cannot.
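For a sense of scale, the figures above can be worked through in a few lines. This is a back-of-the-envelope sketch, not part of the underlying studies: the variable names are ours, and reading r squared as "share of variance explained" assumes a simple linear relationship between Domain Authority and AI citations.

```python
# Figures quoted above; variable names are illustrative.
top1_citation_rate = 43.2   # % of #1-ranking pages cited by ChatGPT
rate_multiple = 3.5         # stated multiple vs. pages outside the top 20
domain_authority_r = 0.18   # stated Domain Authority correlation

# Citation rate implied for pages outside the top 20.
outside_top20_rate = top1_citation_rate / rate_multiple

# Under a simple linear model (an assumption), r squared is the share of
# variance in citations that Domain Authority accounts for, as a percentage.
variance_explained = domain_authority_r ** 2 * 100

print(f"Implied citation rate outside the top 20: {outside_top20_rate:.1f}%")
print(f"Variance explained by Domain Authority: {variance_explained:.1f}%")
```

Under those assumptions, roughly one in eight pages outside the top 20 gets cited, and Domain Authority on its own accounts for only about 3% of the variation in citations.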

What an "Author Authority Stack" Actually Looks Like

We've started thinking about this as a layered system rather than a checklist.

Layer 1: Verified identity. Every article is linked to a real person whose profiles can be cross-referenced on LinkedIn, industry directories, or other authoritative platforms. For CMOs, that means shifting budget from high-volume production to high-authority entity verification.

Layer 2: Topical consistency. Pick lanes and stay in them. Publish 30 posts about content marketing ops, not 5 posts each across 6 unrelated topics. Algorithms (and AI models) notice patterns.

Layer 3: Experience proof. Original screenshots, proprietary data, first-hand case studies with specific numbers. Pages combining text, images, video, and structured data see up to 317% more citations.

Layer 4: Consistency at scale. None of this works if you publish twice and disappear. The authority stack compounds over time, and it breaks when publishing cadence drops.

That fourth layer is, honestly, where most small teams fail. Building the stack is one problem; maintaining it across 10, 20, or 50 posts per month is a different problem entirely. It's why we built Wonderblogs with author attribution and brand context baked into every step of the pipeline. Configuring your team's credentials and topical focus once, then having that context applied consistently across every generated post, is the only way we've found to make this sustainable without a full editorial team.
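One common way to make Layers 1 and 2 machine-readable is schema.org markup. The sketch below is a minimal illustration of the "configure once, apply everywhere" idea, not a description of how Wonderblogs or Google handles authorship. The author, URLs, headlines, and the `article_jsonld` helper are all hypothetical; only the schema.org types and properties (`Article`, `Person`, `sameAs`, `knowsAbout`, `jobTitle`) are real.

```python
import json

# Hypothetical author profile, defined once and reused for every post.
AUTHOR = {
    "@type": "Person",
    "name": "Jane Example",
    "jobTitle": "Head of Content Operations",
    # Layer 1: external profiles that let the identity be cross-referenced.
    "sameAs": [
        "https://www.linkedin.com/in/jane-example",
        "https://example.com/team/jane-example",
    ],
    # Layer 2: the topical lane this author stays in.
    "knowsAbout": ["content marketing operations"],
}


def article_jsonld(headline: str, date_published: str, author: dict = AUTHOR) -> dict:
    """Build schema.org Article markup that always carries a named author."""
    if not author.get("name") or not author.get("sameAs"):
        raise ValueError("author needs a name and at least one external profile")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": author,
    }


# Hypothetical posts; the same author context is applied to each one.
posts = [
    ("How We Run a Weekly Editorial Standup", "2026-01-05"),
    ("Auditing a Content Calendar in One Afternoon", "2026-01-12"),
]
markup = [article_jsonld(headline, date) for headline, date in posts]

print(json.dumps(markup[0], indent=2))
```

Each dictionary would typically be serialized into a `<script type="application/ld+json">` tag in the page head. The guard clause is the point: a post without an identifiable author fails loudly instead of shipping unsigned.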

The Uncomfortable Math

Most blogs are still optimizing for the 2023 version of SEO. Keyword research, backlink outreach, technical audits. None of that is wrong, exactly. But it's incomplete.

The December 2025 update made authorship a ranking input. AI search made it a citation input. And the gap between teams that understand this and teams that don't is going to widen fast, because building an author authority stack takes months of consistent publishing. Starting six months late means being six months behind a competitor who has already started.

The question isn't whether transparent authorship matters. The data settled that. The question is whether you can execute on it before your competitors figure out the same thing.