Zapier pulls in 16.2 million organic visitors per month and ranks for over 1.3 million keywords. They don't have a proportionally massive content team. They have a system. And that system, at its foundation, is programmatic SEO done with discipline.

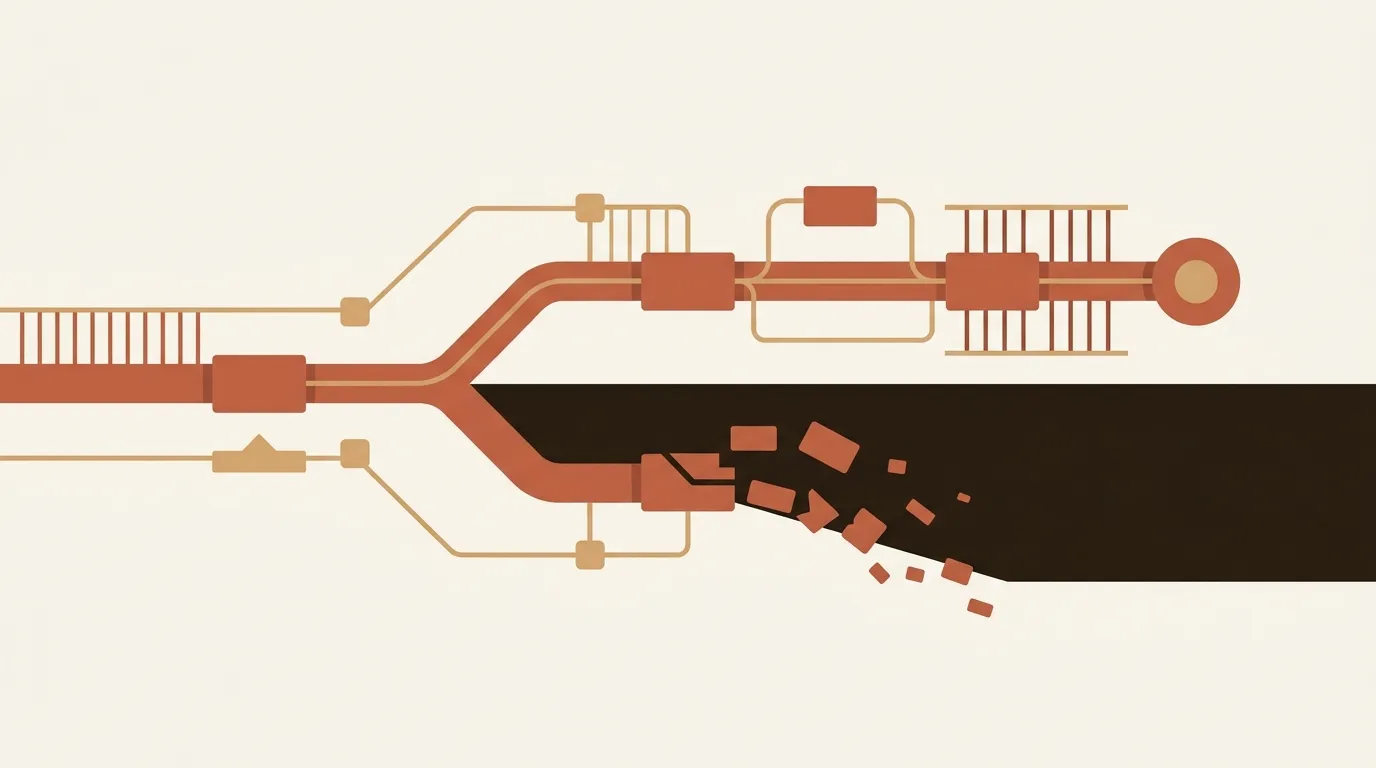

The tooling to replicate that kind of infrastructure has never been cheaper. Airtable's free tier, Webflow CMS, a sync layer like Whalesync, maybe a data enrichment API. You can stand up a 1,000-page content operation for under $100/month in software costs. But cheap tooling has created a dangerous illusion: that scale itself is the strategy.

It isn't. 60% of programmatic deployments fail because teams mistake template multiplication for differentiation. And "fail" doesn't mean "underperform." It means manual penalties, deindexed pages, or traffic cliffs that erase months of work inside 90 days.

We've watched this pattern play out across dozens of B2B content operations. The teams that succeed treat programmatic SEO as a quality-at-scale infrastructure decision. The teams that fail treat it as a publishing shortcut.

The $100/Month Trap

The economics are seductive. A single marketer can create and manage thousands of pages using spreadsheet-based workflows, syncing content from Airtable or Google Sheets directly to a CMS without touching code. That's real. That works. And it completely sidesteps the actual hard problem.

The hard problem is differentiation at the page level.

Google's helpful content systems have gotten very specific about what counts as "thin." Programmatic sites commonly show 70-90% content duplication across generated pages. Google's response isn't subtle: when it can't determine which of your nearly-identical pages to rank, it often chooses none. Your 5,000-page site becomes 5,000 pages of crawl budget waste.

G2 is the cautionary tale everyone should study. They lost 80% of their SEO traffic starting in 2023, a decline largely attributed to programmatic content quality issues. Meanwhile, Capterra gained market share with cleaner, less templated approaches. Same market. Same intent patterns. Radically different outcomes based on content differentiation.

What "Differentiation" Actually Means in Numbers

We've seen a lot of vague advice about making programmatic pages "unique." That's not useful. Here are the specific thresholds that separate programs generating 300-700% organic traffic growth from those earning penalties.

Minimum 500 unique words per page. Not 500 words total with a shared 400-word boilerplate intro. Five hundred words of content that exists only on that specific page. This is the floor, not the ceiling. Pages targeting competitive B2B terms often need 800-1,200 unique words to compete.

30-40% differentiation between any two pages in the same template set. If you generate pages for "CRM software for healthcare" and "CRM software for logistics," a reader (and a crawler) should find roughly a third of each page materially different. Not just the H1 tag and a swapped industry noun. Different data points, different use cases, different objections addressed.

Unique data assets, not just template variations. 93% of penalized programmatic sites lacked genuine differentiation in their underlying data. If your only variable is a city name or industry keyword injected into a template, you don't have programmatic SEO. You have a mail merge with a domain name.
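These thresholds can be checked automatically before anything ships. The sketch below is one possible way to do it, not a definitive implementation. It assumes pages are available as plain text, counts a sentence as "unique" when it appears on no other page in the set, and uses Jaccard distance over three-word shingles as a stand-in for "differentiation." The function names, the shingle size, and the sentence-level definition of uniqueness are all illustrative assumptions.

```python
import re
from itertools import combinations


def _sentences(text: str) -> list[str]:
    """Split text into lowercased sentences on '.', '!' and '?'."""
    return [s.strip().lower() for s in re.split(r"[.!?]+", text) if s.strip()]


def unique_word_count(page: str, others: list[str]) -> int:
    """Count words in sentences that appear on no other page in the set."""
    shared = {s for other in others for s in _sentences(other)}
    return sum(len(s.split()) for s in _sentences(page) if s not in shared)


def _shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word windows in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def differentiation(a: str, b: str, k: int = 3) -> float:
    """Jaccard distance of shingles: 0.0 = identical, 1.0 = nothing shared."""
    sa, sb = _shingles(a, k), _shingles(b, k)
    if not (sa | sb):
        return 0.0
    return 1.0 - len(sa & sb) / len(sa | sb)


def quality_gate(pages: dict[str, str],
                 min_unique_words: int = 500,
                 min_diff: float = 0.30) -> list[str]:
    """Return human-readable problems; an empty list means the set passes."""
    problems = []
    for url, text in pages.items():
        others = [t for u, t in pages.items() if u != url]
        count = unique_word_count(text, others)
        if count < min_unique_words:
            problems.append(f"{url}: only {count} unique words")
    for (u1, t1), (u2, t2) in combinations(pages.items(), 2):
        score = differentiation(t1, t2)
        if score < min_diff:
            problems.append(f"{u1} vs {u2}: differentiation {score:.0%}")
    return problems
```

Pairwise comparison grows quadratically with page count, so on large template sets it is probably more practical to compare a random sample of pairs per template than every pair.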

One case study we found instructive: a B2B SaaS company reduced their programmatic page count from 847 to 623 after implementing stricter quality gates. Those 623 pages generated $47K in monthly revenue with a 78% indexation rate, and engagement metrics actually exceeded their hand-written content. Fewer pages. More money. That's the math that matters.

The Monitoring Cadence Nobody Talks About

Building the pages is maybe 30% of the work. The other 70% is what happens after you publish.

Weekly Signal Monitoring

Every programmatic deployment needs a weekly check on three signals: indexation rate (what percentage of your pages are actually in Google's index), crawl frequency (how often Googlebot returns to your programmatic pages), and engagement metrics (bounce rate, time on page, scroll depth) segmented by template type.

If your indexation rate drops below 60%, something is wrong. If crawl frequency declines week over week for the same page set, Google is losing interest. Both are early warnings that arrive weeks before a traffic cliff.
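A weekly check like this is simple to script once the numbers are collected. The following is a minimal sketch under stated assumptions: the counts are exported by hand (for example from Search Console and server logs) into a hypothetical `WeeklySnapshot` record per template type, and engagement metrics are left out for brevity. The 60% floor comes from the text above; everything else is illustrative.

```python
from dataclasses import dataclass


@dataclass
class WeeklySnapshot:
    """One week of signals for one template type (hypothetical record shape)."""
    template: str
    total_pages: int
    indexed_pages: int
    googlebot_hits: int


def weekly_alerts(previous: WeeklySnapshot,
                  current: WeeklySnapshot,
                  min_indexation: float = 0.60) -> list[str]:
    """Compare this week with last week and return any early warnings."""
    alerts = []
    rate = current.indexed_pages / current.total_pages if current.total_pages else 0.0
    if rate < min_indexation:
        alerts.append(
            f"{current.template}: indexation {rate:.0%} is below {min_indexation:.0%}"
        )
    if current.googlebot_hits < previous.googlebot_hits:
        alerts.append(
            f"{current.template}: crawl hits fell from "
            f"{previous.googlebot_hits} to {current.googlebot_hits}"
        )
    return alerts
```

Running this per template type rather than across the whole site keeps one failing template from hiding behind the averages of the healthy ones.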

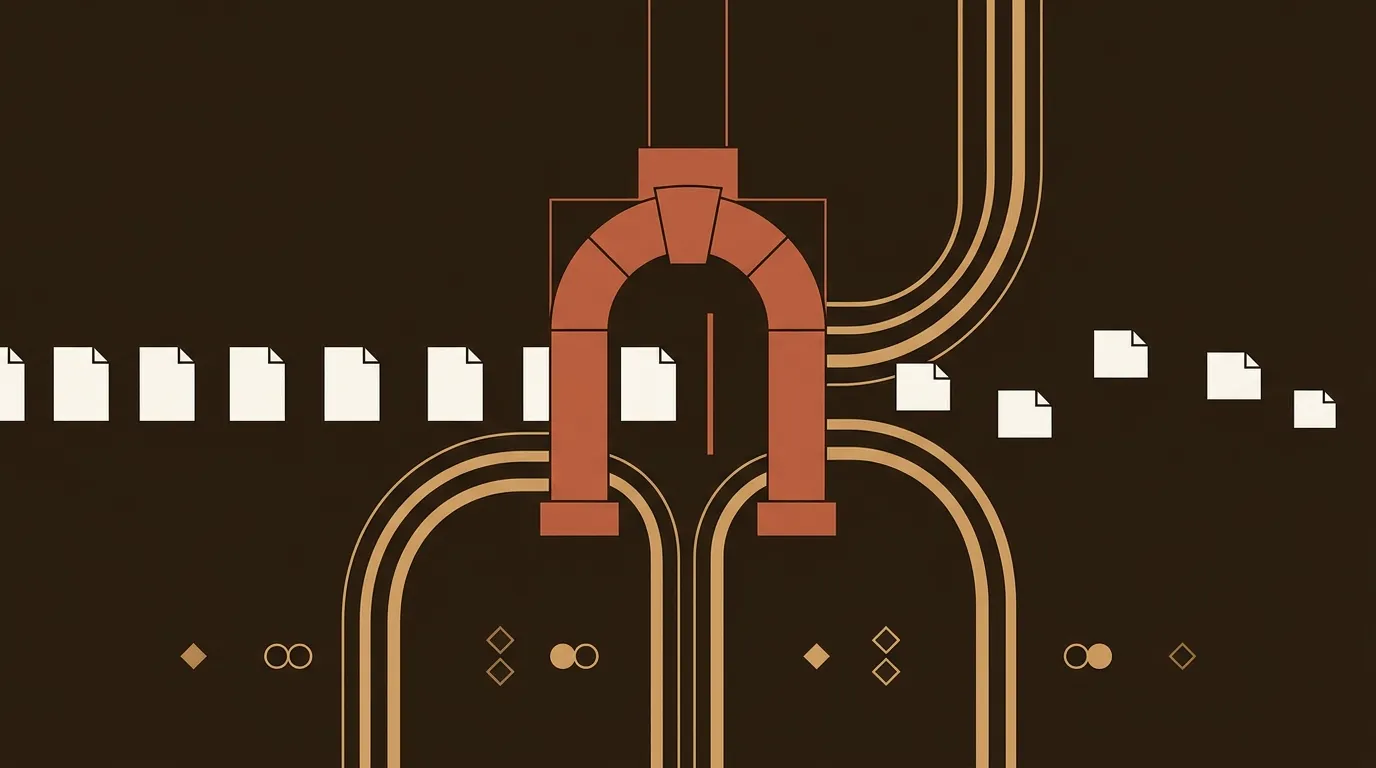

Monthly Pruning

This is where most teams fall apart. Content decays: prices change, businesses close, regulations update, links break, data goes stale. A page that was accurate when published becomes misleading six months later. For programmatic content, this decay happens at scale because all pages degrade at roughly the same rate.

Monthly pruning means reviewing your lowest-performing 10% of pages and making a binary decision: update with fresh data, or noindex. There is no third option. Leaving stale pages indexed actively hurts your performing pages through quality dilution.

Build refresh into the pipeline from day one. If your data comes from scrapeable sources, schedule regular re-scrapes. Have your automation compare new data to old data and flag pages that need updates. This is not optional maintenance. It is the core operating cost of programmatic SEO.
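The pruning decision and the old-versus-new comparison can be sketched in a few lines. This is an illustration under assumptions, not a prescribed workflow: performance is approximated by monthly clicks, each page's source data is a plain dictionary keyed by URL, and a page is marked "update" only when the re-scrape produced different data. The function names are hypothetical.

```python
def lowest_performers(clicks: dict[str, int], percent: int = 10) -> list[str]:
    """Return the bottom `percent` of URLs by clicks (at least one page)."""
    n = max(1, len(clicks) * percent // 100)
    # Sort by clicks, then URL, so ties are broken deterministically.
    return sorted(clicks, key=lambda url: (clicks[url], url))[:n]


def changed_records(old: dict[str, dict], new: dict[str, dict]) -> set[str]:
    """URLs whose freshly scraped record differs from the stored one."""
    return {url for url, record in new.items() if old.get(url) != record}


def pruning_plan(clicks: dict[str, int],
                 old: dict[str, dict],
                 new: dict[str, dict]) -> dict[str, str]:
    """Binary decision for each low performer: 'update' or 'noindex'."""
    fresh = changed_records(old, new)
    return {
        url: "update" if url in fresh else "noindex"
        for url in lowest_performers(clicks, percent=10)
    }
```

The "update only if the data changed" rule is a simplification. A team might reasonably choose to rewrite a weak page even when its source data is unchanged, as long as the outcome is still one of the two options.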

B2B's Specific Advantage (and Specific Risk)

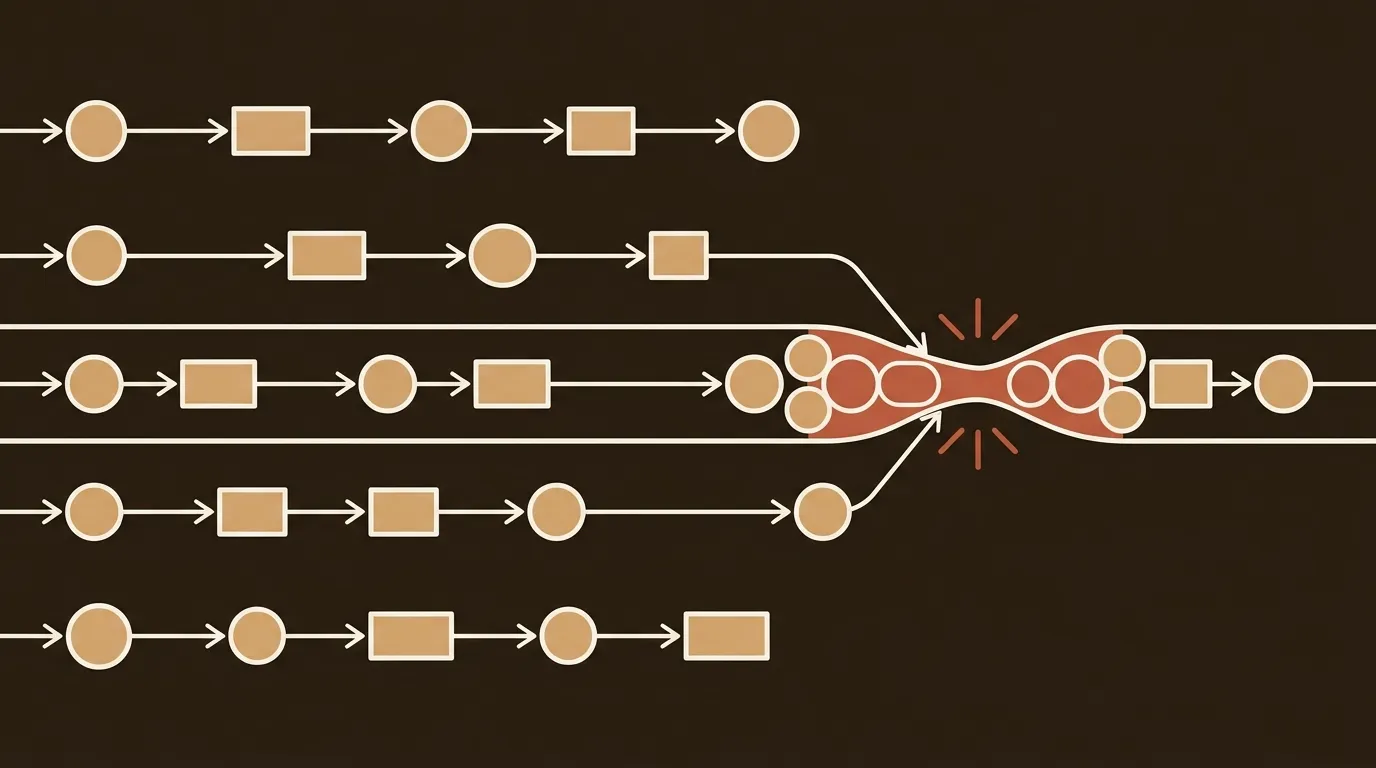

B2B has a structural advantage in programmatic SEO that B2C often doesn't: highly specific, commercially valuable long-tail queries with clear intent signals.

A B2B company with regional sales teams can generate pages targeting queries like "enterprise IT services in Dallas" or "manufacturing ERP consulting in Detroit." These are real queries with real buyer intent that competitors routinely ignore because the individual search volume looks small. But 500 pages each attracting 20 visits per month add up to 10,000 monthly visits, all with commercial intent.

The risk is equally specific. B2B buyers are more sophisticated readers. A healthcare compliance officer landing on your programmatic page about "HIPAA-compliant project management software" will spot thin content faster than a consumer browsing for running shoes. If your page just swaps "HIPAA" into a generic template, you've lost that visitor's trust permanently. And in B2B, one lost enterprise lead can represent tens of thousands in pipeline.

Bottom-of-funnel programmatic content represents a genuine opportunity here. ROI calculator pages tailored to specific business sizes. Pricing comparison pages for various use cases. Product demo request pages segmented by industry. These work because the data inputs genuinely change the output. A healthcare ROI calculator should produce materially different numbers than a logistics one.

The Five Patterns That Get You Penalized

We'll be blunt about what kills programmatic programs, because the mistakes are consistent and predictable.

Thin content with no proprietary data. If you can't point to a data source that your competitors don't have (or can't easily replicate), your programmatic pages are commodity content with a fancy build system.

Keyword cannibalization at scale. Generating 50 pages that all target minor variations of the same keyword doesn't give you 50 chances to rank. It gives Google 50 signals that you don't know what you're doing. Map your keyword targets before you generate. One page per intent cluster. No exceptions.

Unedited AI output. AI can generate the variable content for programmatic pages, but raw output without factual grounding or editorial review produces the kind of generic text Google's systems are specifically trained to detect. The output needs human review, or at minimum, an automated quality evaluation layer that catches factual errors and boilerplate language.

Poor technical infrastructure. Internal linking, XML sitemaps, canonical tags, and page load speed all multiply in importance when you're running thousands of pages. A 0.3-second load time penalty on one page is nothing. On 5,000 pages, it's a crawl budget disaster.

Optimizing only for Google. This one is newer. AI answer engines like ChatGPT and Perplexity are pulling from indexed content. If your programmatic pages are structured purely for traditional SERP ranking without answering questions in a way these systems can extract, you're optimizing for yesterday's traffic sources.
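The "one page per intent cluster" rule from the cannibalization pattern above is the easiest of the five to check before generating anything. The sketch below assumes a crude proxy for intent: two keywords belong to the same cluster if they contain the same words once order and a small, hypothetical stopword list are ignored. Real intent clustering usually needs SERP overlap or manual review, so treat this as a first-pass filter only.

```python
from collections import defaultdict

# Hypothetical stopword list; tune it to your own keyword set.
STOPWORDS = {"a", "an", "the", "for", "in", "of", "best", "top"}


def intent_key(keyword: str) -> tuple[str, ...]:
    """Normalize a keyword to its sorted set of non-stopword tokens."""
    tokens = {t for t in keyword.lower().split() if t not in STOPWORDS}
    return tuple(sorted(tokens))


def cannibalizing_clusters(targets: dict[str, str]) -> dict[tuple[str, ...], list[str]]:
    """Map each intent key to its URLs, keeping only keys with 2+ pages."""
    clusters = defaultdict(list)
    for url, keyword in targets.items():
        clusters[intent_key(keyword)].append(url)
    return {key: sorted(urls) for key, urls in clusters.items() if len(urls) > 1}
```

Any cluster this returns is a candidate for merging into a single page before the template runs.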

Progressive Rollout: The Discipline Tax

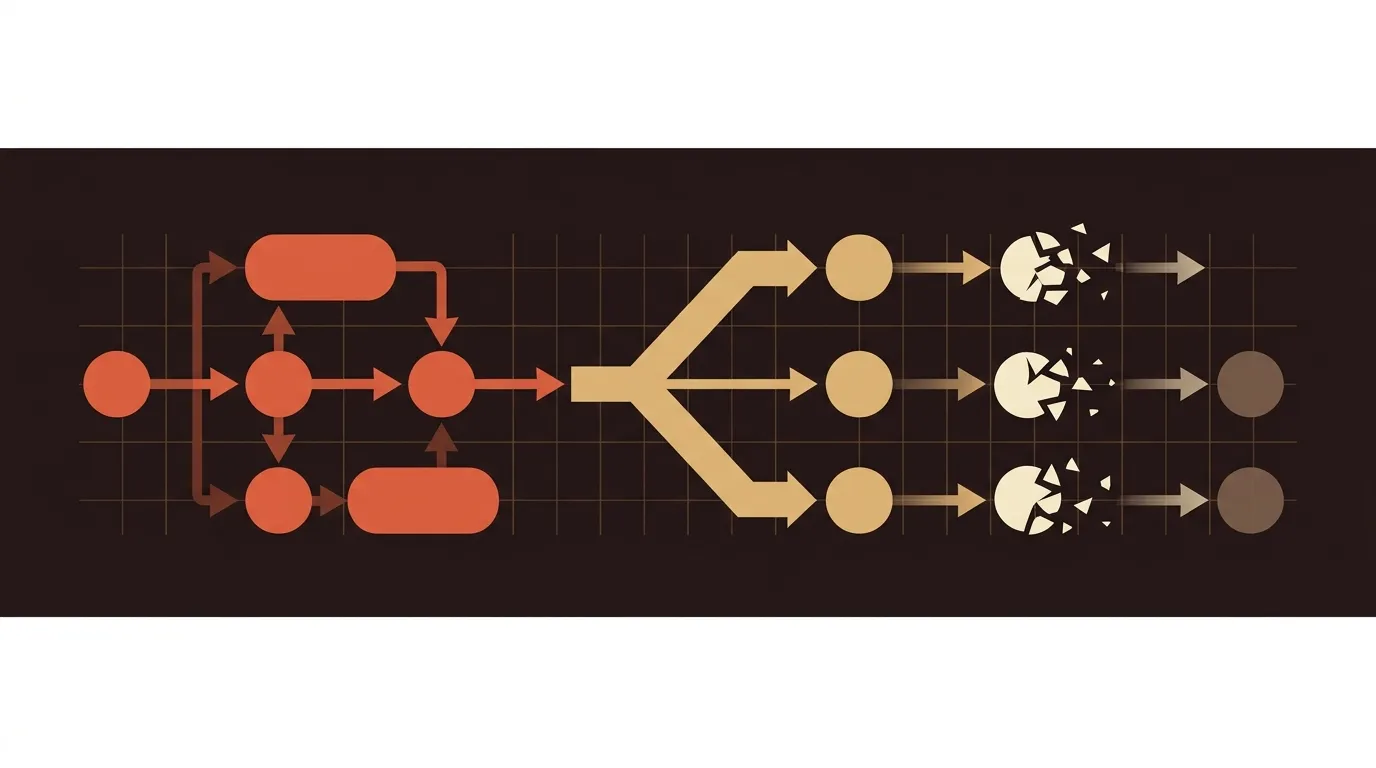

One pattern we've seen separate experienced operators from beginners is rollout cadence. Teams that launch 5,000 pages on day one almost always underperform teams that launch 50, measure, adjust, then scale to 500, then 5,000.

Progressive rollout lets you catch quality problems before they affect your entire domain. If your first 50 pages show a 90% indexation rate and strong engagement signals, you have evidence that your template and data approach works. If they show a 40% indexation rate, you've identified a problem that's 100x cheaper to fix at 50 pages than at 5,000.
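One way to enforce that discipline is to make the next batch size a function of measured indexation, so nobody can scale on optimism. This is a minimal sketch with assumed values: the 50, 500, 5,000 stages mirror the example above, and the default gate of 60% borrows the weekly warning level from the monitoring section. A stricter gate may well be appropriate for a first batch.

```python
STAGES = [50, 500, 5000]  # rollout sizes taken from the example above


def next_batch_size(live_pages: int,
                    indexed_pages: int,
                    min_indexation: float = 0.60) -> int:
    """Return the page count to grow to, or the current count if the gate fails."""
    if live_pages == 0:
        return STAGES[0]  # nothing published yet: start with the smallest batch
    if indexed_pages / live_pages < min_indexation:
        return live_pages  # hold at the current size and fix the template first
    for size in STAGES:
        if size > live_pages:
            return size
    return live_pages  # already at the final stage
```

With these defaults, 50 live pages at a 90% indexation rate unlock the 500-page stage, while 50 pages at 40% stay at 50 until the underlying problem is fixed.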

A separate analysis of successful programmatic programs showed 220.65% organic traffic growth in Q1 2025 versus the prior quarter, with monthly visitors rising from 5,520 to 17,700. But that growth came after months of iterative template refinement on small page sets. The hockey stick came after the discipline, not before it.
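The growth percentage follows directly from the two visitor counts, which is worth verifying whenever a headline number like this is quoted. A quick check, using a hypothetical helper name:

```python
def percent_growth(before: float, after: float) -> float:
    """Quarter-over-quarter growth as a percentage of the starting value."""
    return (after - before) / before * 100


growth = percent_growth(5_520, 17_700)
print(f"{growth:.2f}%")  # prints 220.65%
```

Note that 220.65% growth means traffic roughly tripled (about 3.2x the starting level), not that it merely doubled.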

What's Actually Hard About This

We should be honest about the genuinely messy parts. The tooling is easy. Standing up the infrastructure takes a weekend. But building differentiated data sources takes months. Creating quality evaluation systems that work at scale is an unsolved problem for most small teams. And the ongoing maintenance, the weekly monitoring and monthly pruning, is exactly the kind of unglamorous operational work that small B2B teams are already stretched too thin to handle.

Programmatic SEO is not a set-it-and-forget-it strategy. It is a content operations discipline that trades upfront writing time for ongoing quality management time. For teams that make that trade consciously and build the monitoring infrastructure to support it, the returns are real: 300-700% organic traffic growth is achievable. For teams that treat it as a shortcut to avoid the hard work of content creation, the 90-day penalty clock starts ticking the moment they hit publish.

The question worth asking isn't "can we build 1,000 pages?" It's "can we maintain 1,000 pages at a quality bar that gets better over time?" If the answer is yes, the infrastructure has never been more accessible. If the answer is uncertain, start with 50 and find out.

References

- Programmatic SEO for B2B SaaS Startups: The Complete 2026 Playbook - Averi AI

- Programmatic SEO: The Scalable Strategy B2B Brands Are Using to Own Search - Centric DXB

- Quality at Scale: How AI Solves Programmatic SEO's Biggest Challenge - Gracker AI

- Programmatic SEO Without Traffic Loss: Complete 2025 Guide - Passionfruit

- Common Programmatic SEO Mistakes That Kill Pipeline (And How To Fix Them) - Discovered Labs