Most programmatic SEO programs don't die from a lack of pages. They die from a lack of decisions.

We've watched B2B teams spin up 2,000 template-based pages in a weekend, see a brief traffic bump, and then watch the whole thing decay over 90 days because nobody built a system to decide what happens next. The pages that rank slip. The pages that don't rank sit there, burning crawl budget. And the team moves on to the next campaign, having learned nothing reusable.

The math on programmatic SEO still works. But the variable that matters in 2026 isn't page volume. It's the operational layer that monitors, refreshes, and retires pages based on real performance signals. Call it an agentic workflow, a living content system, or just "not being lazy after launch." Whatever the label, it's the difference between a program that compounds and one that collapses.

Template-Based Programmatic SEO Hit a Wall

The original playbook was elegant in its simplicity. Take a dataset (cities, product categories, job titles), pair it with a template, generate hundreds or thousands of long-tail pages. Near-zero marginal cost per page.

That playbook assumed Google wouldn't notice, or wouldn't care, that all your pages shared the same knowledge base with swapped variables. Google noticed. Helpful content updates, thin content penalties, and near-duplicate detection have made variable-substitution pages a liability rather than an asset.

The foundation of quality programmatic SEO now rests on genuinely unique data that varies meaningfully between pages, template architecture that solves real intent for each query, and technical infrastructure built for scale. Two out of three isn't enough. Miss any one of those and you're publishing dead weight.

So the question for small B2B teams isn't "how do we generate more pages?" It's "how do we build a system that knows which pages to generate, which to update, and which to kill?"

What Makes an Agentic Workflow Different

An AI writing tool waits for a prompt. You tell it what to write, it writes. Useful, but passive.

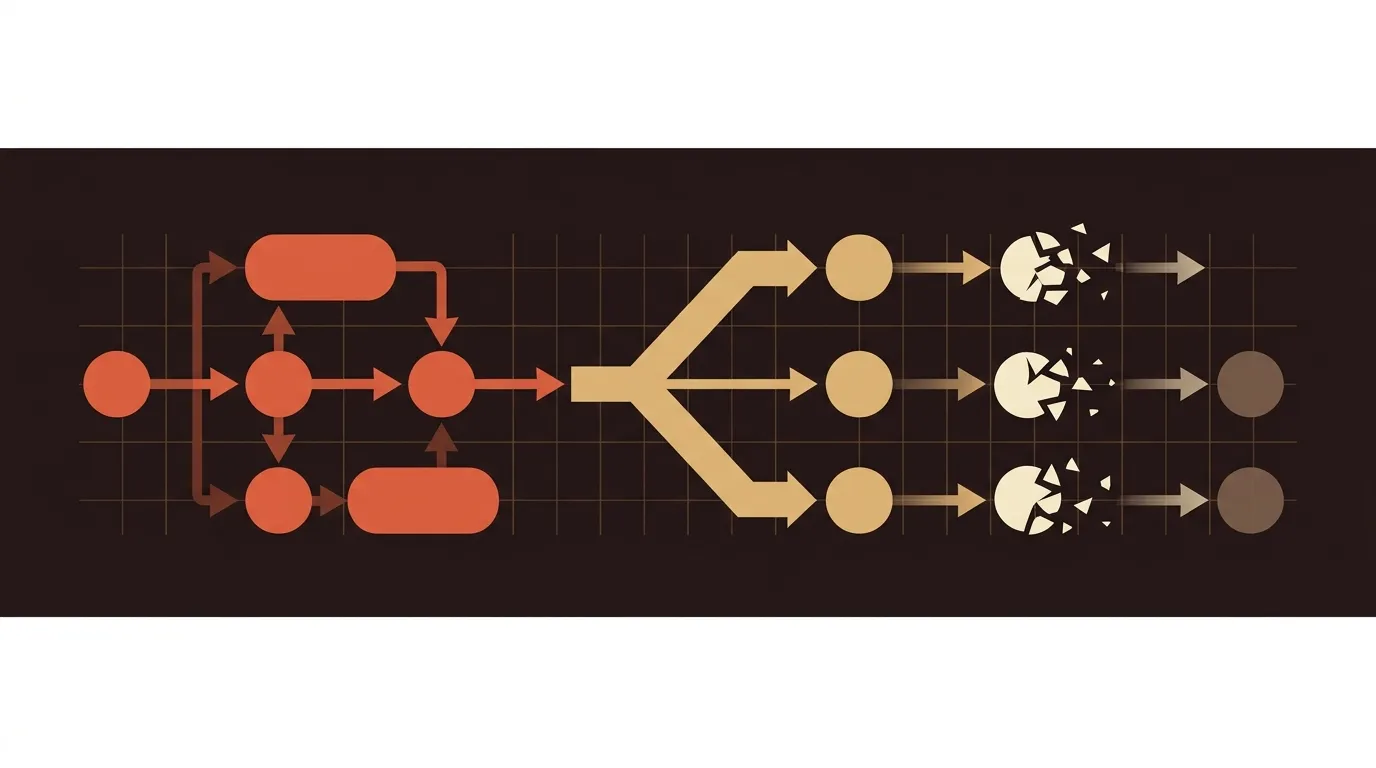

An agentic SEO system does something structurally different. It autonomously plans, executes, and iterates on content strategy: researching keywords, drafting content, optimizing for search, publishing to your CMS, and recovering rankings when they drop. No prompt required for each step. The system watches signals and acts.

This distinction matters more than it sounds like it should. A single all-purpose AI doing research AND writing AND optimization produces mediocre work at every stage. Specialized agents, each focused on one job and passing structured outputs to the next, produce consistently better results. Organizations leading in agentic AI report five times the revenue gains of those that lag behind.

For a 2-person marketing team at a B2B SaaS company, the practical implication is this: you don't need to hire five specialists. You need a workflow where each step has clear inputs, clear outputs, and a feedback mechanism. Whether that's five AI agents or a well-structured pipeline with human checkpoints, the architecture matters more than the headcount.
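To make "clear inputs, clear outputs, and a feedback mechanism" concrete, here is a minimal sketch of a staged pipeline with a human checkpoint. Everything in it is hypothetical: the `Brief` and `Draft` types, the stage functions, and the status strings are illustrative placeholders, not the API of any particular tool.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Brief:
    keyword: str
    sources: list[str]


@dataclass
class Draft:
    keyword: str
    body: str
    issues: list[str] = field(default_factory=list)


def research(keyword: str) -> Brief:
    # Placeholder stage: a real one would gather page-specific sources.
    return Brief(keyword=keyword, sources=[f"notes on {keyword}"])


def write(brief: Brief) -> Draft:
    # Placeholder stage: consumes only the structured Brief.
    return Draft(keyword=brief.keyword,
                 body=f"Draft covering {', '.join(brief.sources)}")


def check(draft: Draft, min_words: int = 5) -> Draft:
    # Automated quality gate; min_words is an arbitrary illustrative bar.
    if len(draft.body.split()) < min_words:
        draft.issues.append("too short")
    return draft


def run_pipeline(keyword: str,
                 approve: Callable[[Draft], bool]) -> tuple[Draft, str]:
    draft = check(write(research(keyword)))
    if draft.issues:
        # Feedback mechanism: failed drafts go back instead of forward.
        return draft, "sent_back"
    status = "published" if approve(draft) else "held_for_review"
    return draft, status
```

The `approve` callback is where a person (or a sampling rule) plugs in, so the human checkpoint is part of the architecture rather than an afterthought.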

The Signal Stack That Decides Everything

A living content system needs exactly three categories of signals to function. Without them, you're flying blind. With them, every decision (build, refresh, kill) has a data-backed justification.

Ranking Velocity

This is the time from publication to first-page positioning for a target keyword. Sites that publish and update frequently often see faster ranking improvements because search engines crawl them more often. If your new pages take three weeks to appear in search results and that timeline isn't compressing over time, your site authority or content quality (or both) needs work.

We track this as a leading indicator. When ranking velocity improves, it means the system is working: the content is relevant, the technical SEO is clean, and Google trusts the domain enough to index quickly. When it degrades, something broke upstream.
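As a rough sketch, ranking velocity can be computed from a per-page series of daily average positions. The function names, the input shape, and the position cutoff of 10 for "first page" are assumptions for illustration; adapt them to whatever your rank data actually looks like.

```python
from datetime import date
from statistics import median
from typing import Optional


def ranking_velocity_days(published: date,
                          daily_positions: dict[date, float],
                          first_page_max: float = 10.0) -> Optional[int]:
    """Days from publication to the first day the page sits on page one.

    Returns None if the page has not reached page one yet.
    """
    for day in sorted(daily_positions):
        if day >= published and daily_positions[day] <= first_page_max:
            return (day - published).days
    return None


def median_velocity(velocities: list[Optional[int]]) -> Optional[float]:
    """Median over pages that reached page one; None if none did."""
    reached = [v for v in velocities if v is not None]
    return median(reached) if reached else None


positions = {
    date(2026, 1, 10): 34.0,
    date(2026, 1, 20): 14.2,
    date(2026, 1, 26): 8.7,
    date(2026, 2, 1): 6.1,
}
print(ranking_velocity_days(date(2026, 1, 5), positions))  # 21
```

Tracking the median across each month's cohort of new pages shows whether the timeline is compressing or stretching.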

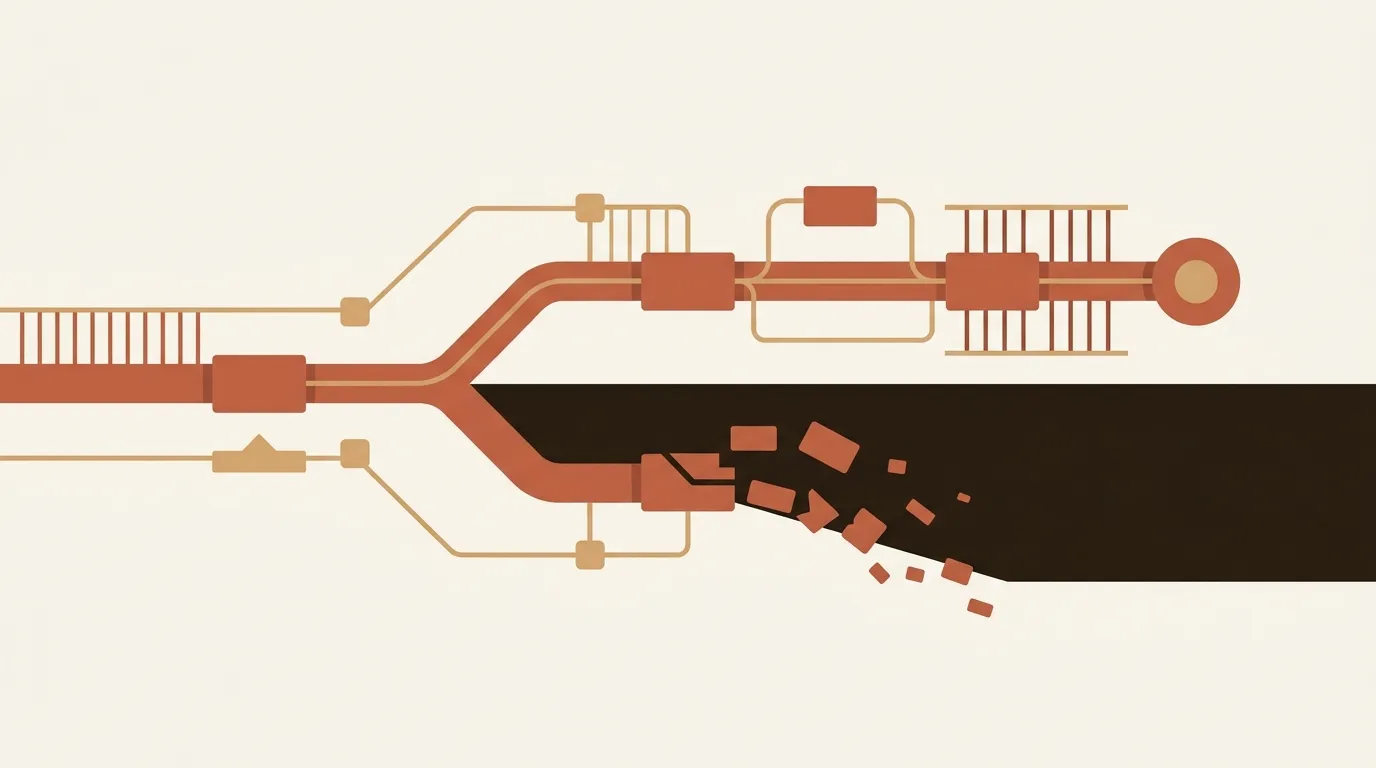

Content Decay Rate

Every page has a half-life. Content decay happens when a page gradually loses rankings, traffic, and engagement because information becomes outdated or competitors publish something better. The tricky part is that decay is silent. Traffic drops 5% a month, and you don't notice until you've lost 40%.

The best automated systems don't just report decay; they act on it. If a page's rankings start to slip, the system automatically initiates a refresh workflow, analyzing new SERP signals and updating content. This self-healing approach separates serious programs from one-and-done campaigns.
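Two small sketches make the decay point concrete. The first shows why a 5% monthly drop is easy to miss: assuming the drop compounds, it takes about ten months to reach a 40% loss. The second is one simple way to flag decay by comparing the latest three months of clicks with the three before. The window size and the 10% trigger are assumptions, not recommended values.

```python
import math


def months_until_loss(monthly_drop: float, total_loss: float) -> int:
    """Whole months of compounding decline until cumulative loss is reached."""
    return math.ceil(math.log(1 - total_loss) / math.log(1 - monthly_drop))


def is_decaying(monthly_clicks: list[int],
                window: int = 3,
                drop_threshold: float = 0.10) -> bool:
    """True if the latest window is down more than drop_threshold
    relative to the window immediately before it."""
    if len(monthly_clicks) < 2 * window:
        return False  # not enough history to compare
    prior = sum(monthly_clicks[-2 * window:-window])
    recent = sum(monthly_clicks[-window:])
    return prior > 0 and (prior - recent) / prior > drop_threshold


print(months_until_loss(0.05, 0.40))  # 10
print(is_decaying([1000, 950, 902, 857, 814, 773]))  # True
```

A flag like this would be the trigger for a refresh workflow, rather than a line in a report that nobody reads.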

Freshness Thresholds

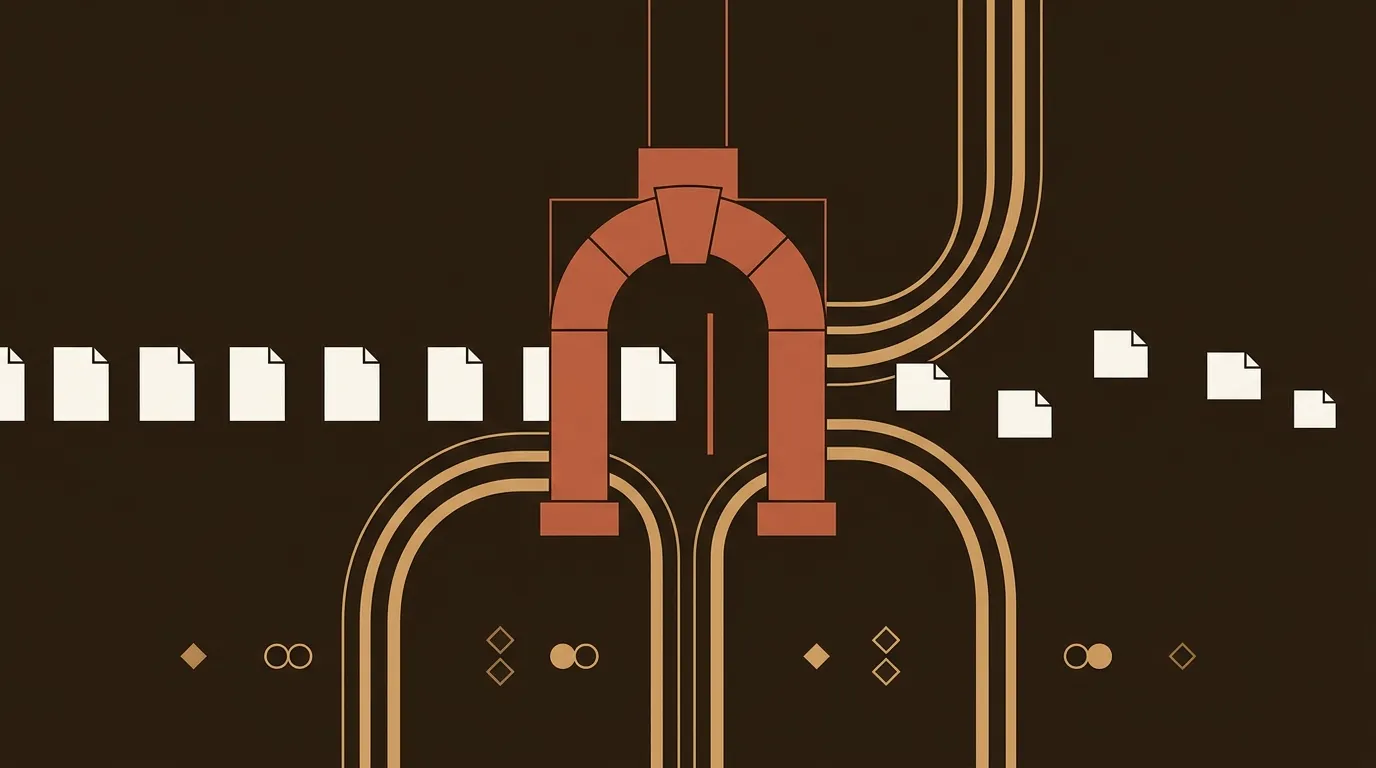

Here's a number that should change how you plan refresh cadence: pages updated within 60 days are 1.9x more likely to be cited by AI systems, and AI systems append recency markers to 28.1% of sub-queries, systematically favoring fresh content. This isn't just about Google anymore. AI-powered search tools (Perplexity, ChatGPT browsing, Google AI Overviews) actively penalize stale pages in their citation behavior.

For B2B teams, this means a 60-day refresh window is the new baseline for any page you actually care about. Not every page, obviously. But your top 20% by traffic? Sixty days. No exceptions.
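The rule above is easy to turn into a recurring check. This is a minimal sketch assuming you can export each page's URL, monthly clicks, and last-updated date; the `Page` type and `overdue_top_pages` function are hypothetical names.

```python
import math
from dataclasses import dataclass
from datetime import date


@dataclass
class Page:
    url: str
    monthly_clicks: int
    last_updated: date


def overdue_top_pages(pages: list[Page],
                      today: date,
                      top_percent: int = 20,
                      max_age_days: int = 60) -> list[Page]:
    """Pages in the top slice by traffic whose last update is too old."""
    ranked = sorted(pages, key=lambda p: p.monthly_clicks, reverse=True)
    top_n = math.ceil(len(ranked) * top_percent / 100)
    return [p for p in ranked[:top_n]
            if (today - p.last_updated).days > max_age_days]
```

Run weekly, the returned list is the refresh queue for the pages you actually care about.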

Per-Page Research: The Moat That Actually Holds

The root cause of template programmatic SEO failure is embarrassingly simple: all pages share the same knowledge base. Identical inputs produce identical outputs with different nouns. Google sees through it. Readers see through it.

Harbor's approach points to a better architecture. Their system launches an autonomous research agent for each individual page. This agent scrapes live competitor pages, pulls real-time data, reads relevant forum discussions, and synthesizes a unique research brief. Only after this per-page research phase does the writing agent receive its instructions.

The result is content grounded in genuinely different inputs for every URL. Not a shared template with swapped variables. Actually different information, different angles, different data points.

This is expensive computationally. It adds time and cost per page. But it's the reason some programmatic programs produce pages that rank and hold, while others produce pages that index and decay. The marginal cost per page goes up. The marginal value per page goes up faster.

For small teams, you don't need to build this from scratch. But you do need to ask: does my workflow inject unique, page-specific research into each piece of content? If the answer is "no, we use the same source data for everything," your program has an expiration date.
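One quick way to test whether your pages are "the same output with different nouns" is to measure overlap between pairs of generated pages. The sketch below uses Jaccard similarity over three-word shingles. This is a generic text-similarity technique chosen for illustration; it is not a description of how Google or Harbor evaluates pages.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Set of overlapping word n-grams in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Shared shingles divided by total distinct shingles (0.0 to 1.0)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0  # two empty texts are trivially identical
    return len(sa & sb) / len(sa | sb)


page_a = "best crm software for dentists in austin with pricing and reviews"
page_b = "best crm software for dentists in denver with pricing and reviews"
print(jaccard(page_a, page_b))  # 0.5
```

Swapping a single word in an eleven-word string already leaves half the shingles shared. On full-length template pages, where the swapped variables are a tiny fraction of the text, the score sits much closer to 1.0, which is a sign the pages share one knowledge base.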

Publishing Cadence: The Discipline Problem

There's a temptation to publish everything at once. You've generated 500 pages; why not push them all live today?

Because dumping thousands of pages overnight looks suspicious and can trigger manual reviews. The recommended range is 50 to 500 new pages per day, depending on your site's existing size and authority. A brand-new domain publishing 500 pages in a day will raise flags. A domain with 10,000 indexed pages adding 200 per day probably won't.
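A drip schedule is simple to generate up front. This sketch splits a list of URLs into daily batches under a cap; `drip_schedule` and its parameters are hypothetical, and the right cap depends on your site's size and authority.

```python
from datetime import date, timedelta


def drip_schedule(urls: list[str],
                  start: date,
                  per_day: int) -> dict[date, list[str]]:
    """Assign URLs to consecutive calendar days, at most per_day each."""
    if per_day < 1:
        raise ValueError("per_day must be at least 1")
    schedule: dict[date, list[str]] = {}
    for i in range(0, len(urls), per_day):
        publish_day = start + timedelta(days=i // per_day)
        schedule[publish_day] = urls[i:i + per_day]
    return schedule


urls = [f"/pages/{n}" for n in range(500)]
schedule = drip_schedule(urls, date(2026, 1, 5), per_day=50)
print(len(schedule))  # 10
```

At 50 pages per day, 500 pages go live over ten days instead of one.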

But the harder discipline isn't the launch. It's what comes after. Publishing twenty articles one month and four the next sends mixed signals to search engines. You need a cadence you can maintain indefinitely, even if it's lower than your ideal.

We've seen this pattern repeatedly: teams launch big, get excited by initial results, then burn out on maintenance. Six months later, half the pages have decayed and nobody's refreshing them. The program didn't fail because the content was bad. It failed because the operating rhythm wasn't sustainable.

The Compounding Math (and Where It Breaks)

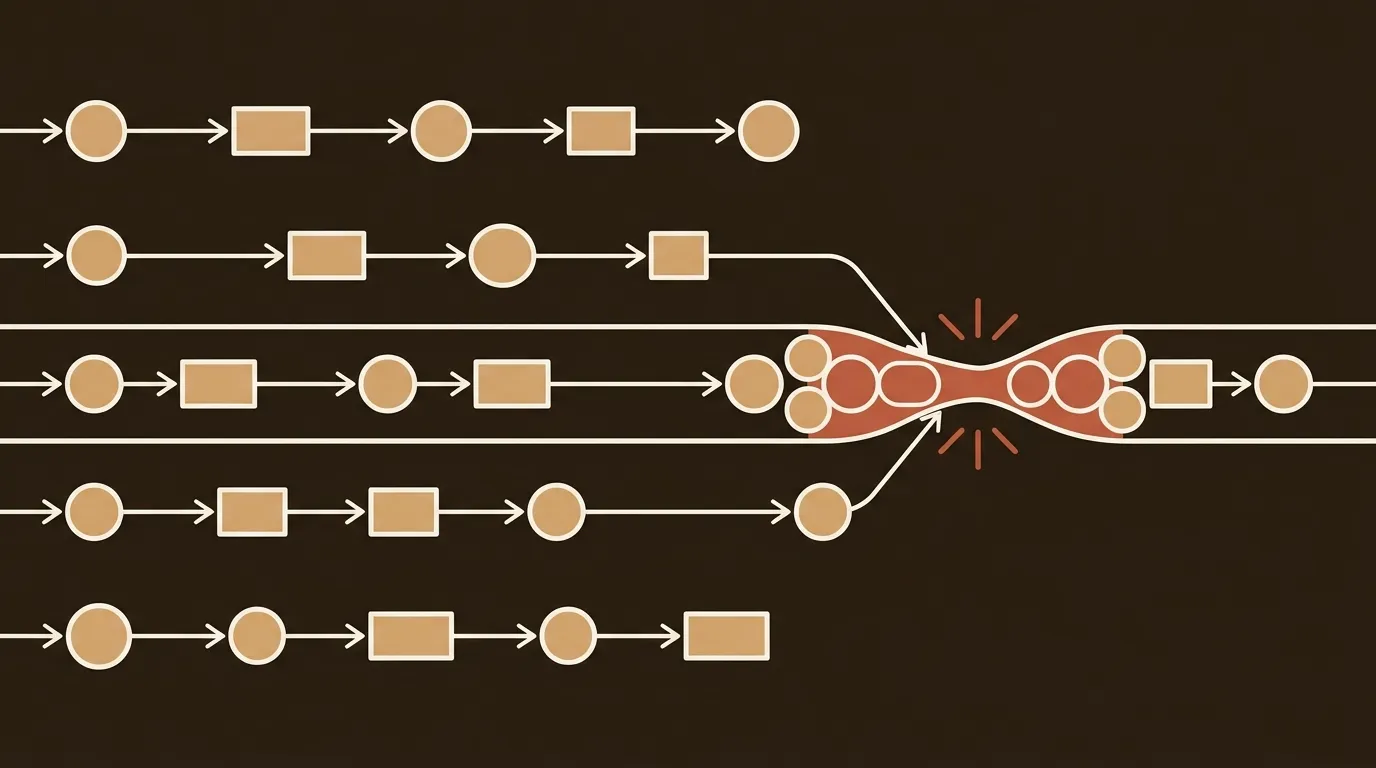

Successful programmatic implementations typically see a 300-700% increase in organic traffic within the first year. That number is real but misleading without context. It compounds only under specific conditions.

Indexation ratio above 90%. If more than 10% of your pages are excluded from Google's index, you have a quality or technical problem. Every non-indexed page is wasted production effort. Monitor this weekly.
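The weekly check is a one-line ratio. In this sketch, `indexed` and `submitted` are counts you would pull from your own index coverage reporting; the function names are illustrative.

```python
def indexation_ratio(indexed: int, submitted: int) -> float:
    """Share of submitted pages that are in the index."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    return indexed / submitted


def indexation_healthy(indexed: int, submitted: int,
                       floor_percent: int = 90) -> bool:
    """True when no more than (100 - floor_percent)% of pages are excluded."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    # Integer comparison avoids floating-point edge cases at the boundary.
    return indexed * 100 >= floor_percent * submitted


print(indexation_healthy(430, 500))  # False: 86% indexed
print(indexation_healthy(470, 500))  # True: 94% indexed
```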

Refresh cadence of 60 days for top performers. High-performing SaaS teams review and refresh important content every 3 to 6 months. We think 3 months is too slow for competitive terms. Sixty days for your top 20% of pages by traffic. Quarterly for the middle 50%. The bottom 30%? Evaluate whether they should exist at all.

A kill threshold. This is the part nobody talks about. Some pages will never rank. After 120 days with no meaningful impressions, a page is unlikely to suddenly break through. Remove it, redirect the URL, and reallocate the effort. Keeping dead pages live dilutes your domain's perceived quality.
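The refresh tiers and the kill threshold can be combined into a single triage pass. This is a sketch under stated assumptions: the `PageStats` fields and action labels are hypothetical, and `min_impressions=10` is an arbitrary stand-in for "no meaningful impressions" that you would tune for your own site.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    clicks_90d: int
    impressions_120d: int
    age_days: int


def triage(pages: list[PageStats],
           kill_after_days: int = 120,
           min_impressions: int = 10) -> dict[str, str]:
    """Map each URL to an action: top 20% by clicks, middle 50%, bottom 30%,
    with old pages that never earned impressions retired outright."""
    ranked = sorted(pages, key=lambda p: p.clicks_90d, reverse=True)
    n = len(ranked)
    top_cut = n * 20 // 100   # end of the top 20%
    mid_cut = n * 70 // 100   # end of the middle 50%
    actions: dict[str, str] = {}
    for rank, page in enumerate(ranked):
        too_old = page.age_days >= kill_after_days
        if too_old and page.impressions_120d < min_impressions:
            actions[page.url] = "retire_and_redirect"
        elif rank < top_cut:
            actions[page.url] = "refresh_every_60_days"
        elif rank < mid_cut:
            actions[page.url] = "refresh_quarterly"
        else:
            actions[page.url] = "evaluate_for_removal"
    return actions
```

Pages younger than the kill window are never retired here, even with zero impressions, so new pages get their full 120 days before a decision. The output is a recommendation list; the final kill decision can still go to a person.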

Here's a rough model for a B2B team running 500 programmatic pages:

- Month 1: Publish 500 pages across 4 weeks (125/week)

- Months 2-3: Monitor ranking velocity. Identify the top 100 by impressions.

- Month 4: Refresh the top 100. Evaluate bottom 150 for removal.

- Months 5-6: Remove or consolidate underperformers. Publish 50 net-new pages targeting gaps found in ranking data.

- Ongoing: 60-day refresh cycle for top performers. Quarterly audit for everything else.

This is not a "set it and forget it" program. It's an operating system with recurring cycles. The teams that treat it this way compound. The teams that don't, stall out around month four.

The Human Layer Isn't Optional

Full automation sounds appealing. It is not sufficient. A 2023 Journal of Information Systems study found that human-in-the-loop automation produced higher satisfaction and sustained ranking improvement over 12 months compared to fully automated systems.

The practical split for a small team looks like this: AI handles research, drafting, technical optimization, and decay monitoring. Humans handle strategy (which topics, which audiences), quality review (sampling 10-15% of output), and the kill decision (which pages to retire). This is genuinely messy. The boundary between "what the system decides" and "what a person decides" shifts as you build trust in the system's judgment. There's no clean framework for it. You calibrate over time.
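The quality-review sample is the easiest part of the human layer to systematize. A minimal sketch, assuming a flat list of newly generated URLs; `review_sample` is a hypothetical helper, and the optional seed only makes a given week's sample reproducible.

```python
import random
from typing import Optional


def review_sample(urls: list[str],
                  percent: int = 10,
                  seed: Optional[int] = None) -> list[str]:
    """Randomly pick roughly `percent` of URLs (rounded up) for human review."""
    if not 0 < percent <= 100:
        raise ValueError("percent must be between 1 and 100")
    k = -(-len(urls) * percent // 100)  # ceiling division on integers
    return random.Random(seed).sample(urls, k)


batch = [f"/pages/{n}" for n in range(200)]
to_review = review_sample(batch, percent=10, seed=42)
print(len(to_review))  # 20
```

Random sampling matters here: reviewing only the pages someone happens to open tends to miss the systematic failures a template or agent introduces.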

And that's fine. The future of agentic SEO is collaborative intelligence, not full autonomy. Businesses that build strong control layers and feedback loops will outperform those relying purely on automation. The winners are the ones who treat AI as a system requiring structure and oversight, not a shortcut.

What This Means for Your Next Quarter

The programmatic SEO systems that scale in 2026 are not simpler than what came before. They're more structured. More monitored. More willing to kill underperformers.

If you're running a B2B content program with limited headcount, the highest-value investment you can make right now is not "generate more pages." It's "build the signal layer that tells you what your existing pages are doing and what to do about it." Ranking velocity, decay rate, indexation ratio. Three numbers. Check them weekly. Act on them monthly.

The moat isn't thousands of pages. It's the operational discipline to manage them like a portfolio, reallocating effort based on returns. Some teams will figure this out. Most will keep publishing into the void and wondering why the traffic chart flattened.

References

- Frase.io, "AI Agents for SEO: Complete Guide to Agentic Content Automation (2026)" -- https://www.frase.io/blog/ai-agents-for-seo

- DigiPanda, "How Agentic AI Works in SEO 2026" -- https://digipanda.co.in/blogs/agentic-ai-seo-2026

- Stormy AI Blog, "How to Build a Programmatic SEO Engine with Claude Code in 2026" -- https://stormy.ai/blog/programmatic-seo-claude-code-2026

- Content Whale, "How Programmatic SEO Drives 10x Content Velocity" -- https://content-whale.com/us/blog/programmatic-seo-10x-content-velocity-quality/

- Harbor SEO, "Programmatic SEO with AI - Scale to 10,000+ Unique Pages" -- https://www.harborseo.ai/programmatic-seo