Google's March 2026 core update finished rolling out on April 8, and the early data tells a clear story: sites that treated each blog post as an isolated unit got punished, while sites with deliberate inter-article architecture held steady or gained. For B2B teams running automated content programs, this is the most operationally significant shift since the helpful content update. Not because automation stopped working, but because the wrong kind of automation stopped working.

We've spent the last three weeks analyzing traffic patterns across dozens of B2B content programs. The pattern is consistent enough to be worth writing about.

What the March 2026 Core Update Actually Changed

The update rolled out between March 27 and April 8, and the damage was lopsided. Mass-produced AI content, the kind generated at volume without editorial oversight or structural intent, saw traffic drops of up to 71%. Meanwhile, sites publishing original data and unique perspectives picked up a 22% visibility increase.

Google appears to have deployed what some analysts believe is a Gemini 4.0 Semantic Filter, built to distinguish between AI-as-tool (humans adding expertise on top of AI drafts) and AI-as-replacement (content that reads fine but adds nothing new). The distinction matters. If your automated pipeline generates 50 posts a month and each one is a competent rewrite of existing search results, you're on the wrong side of this line.

But here's what most commentary on the update misses: the penalty isn't just about individual article quality. It's about how articles relate to each other. Google's systems are getting better at evaluating topical authority across a cluster of pages, not just within a single URL. And that changes the math on content automation entirely.

AI Overviews Made the Problem Worse

AI Overviews now appear in 82% of B2B technology searches, up from roughly 36% in 2025. That's a staggering expansion.

The CTR impact is brutal. Seer Interactive analyzed 3,119 informational queries across 42 organizations and reported a 61% drop in organic click-through rate for queries where an AI Overview appeared, with CTR falling from 1.76% to 0.61%. (Taken on their own, those two endpoints work out to a relative drop closer to 65%, so treat the headline percentage as approximate.) But brands cited within those AI Overviews earned 35% more organic clicks than brands that weren't cited on the same queries.

So the game changed. Ranking #1 for a query without being cited in the AI Overview summary is increasingly hollow. And getting cited in AI Overviews depends on something most content automation ignores entirely: whether your site demonstrates authoritative coverage of a topic across multiple interconnected pages, not just one well-optimized post.

This is where the content graph vs. content pile distinction becomes a real operational problem.

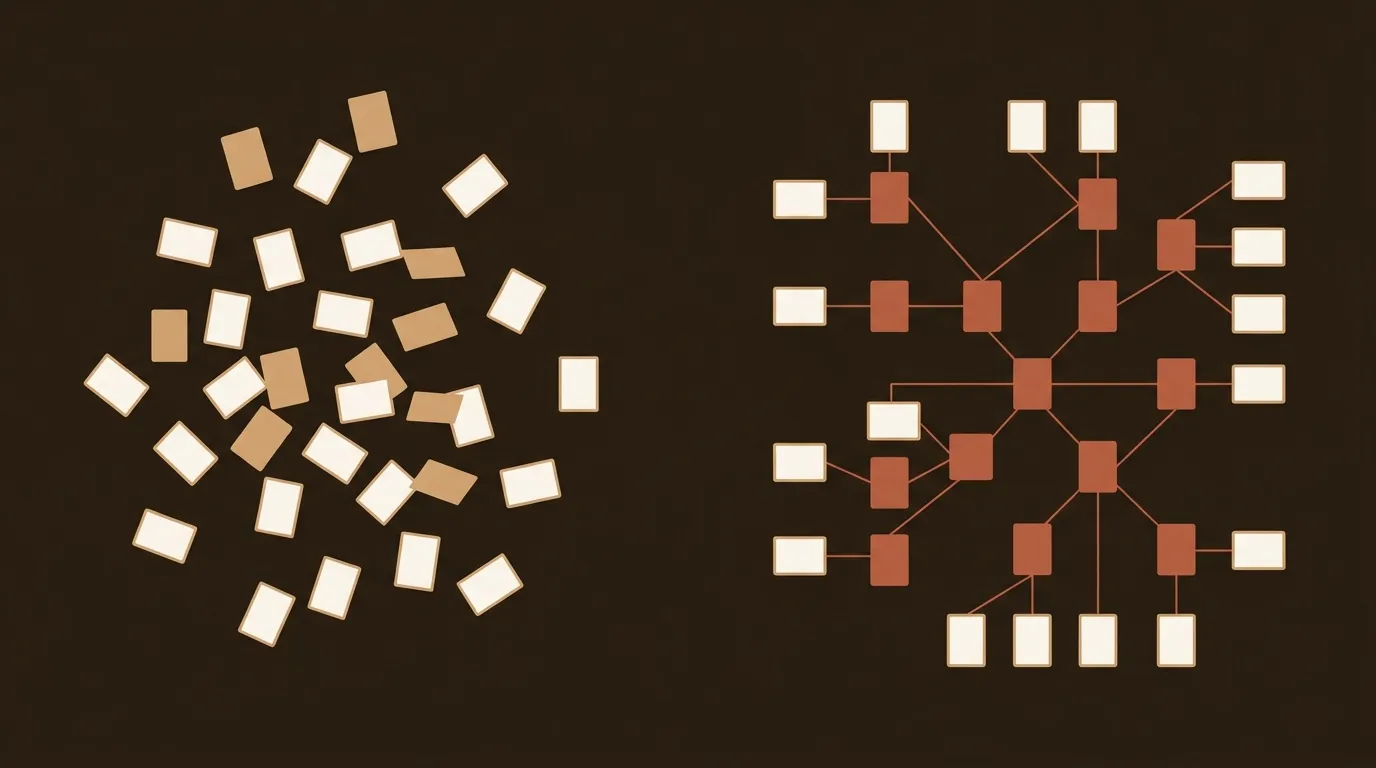

Most Automated Content Programs Build Piles, Not Graphs

Let's be specific about what we mean.

A content pile is what you get when your automation system treats each post as an independent unit. It researches the keyword, writes the article, optimizes meta tags, publishes it, and moves on. Each post might be individually excellent. But the collection of posts has no architectural intent. Internal links get added inconsistently, only when a plugin reminds someone or a writer happens to remember a related post. Topical clusters exist on a spreadsheet somewhere but aren't enforced in the actual content.

A content graph is different. Every post is written with awareness of what already exists on the site and what's coming next. Internal links follow a deliberate equity distribution plan. Pillar pages define main entities and user intents, while cluster pages explore subtopics in depth. The connective tissue between them, contextual links with descriptive anchor text, helps search engines assess topic expertise across the whole cluster.
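One way to picture the difference is to treat a cluster as a small directed graph and audit it for structural gaps. The sketch below is only an illustration under assumptions: the `Page` class, the `"pillar"`/`"cluster"` role labels, the slugs, and the `audit_cluster` function are all hypothetical names, not part of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    slug: str
    role: str  # "pillar" or "cluster" (hypothetical labels)
    links_to: set = field(default_factory=set)  # slugs this page links out to


def audit_cluster(pages, pillar_slug):
    """Report structural gaps in one pillar-and-cluster group."""
    pillar = pages[pillar_slug]
    clusters = [p for p in pages.values() if p.role == "cluster"]

    # Count inbound links coming from other pages in the same group.
    inbound = {slug: 0 for slug in pages}
    for page in pages.values():
        for target in page.links_to:
            if target in inbound and target != page.slug:
                inbound[target] += 1

    return {
        # Pages nothing else in the group links to.
        "orphans": sorted(s for s, n in inbound.items() if n == 0),
        # Cluster pages that never link back up to the pillar.
        "missing_link_to_pillar": sorted(
            p.slug for p in clusters if pillar_slug not in p.links_to
        ),
        # Cluster pages the pillar does not link down to.
        "missing_link_from_pillar": sorted(
            p.slug for p in clusters if p.slug not in pillar.links_to
        ),
    }


# Illustrative data: one pillar, one connected cluster page, one stray post.
pages = {
    "b2b-content-strategy": Page(
        "b2b-content-strategy", "pillar", {"content-measurement"}
    ),
    "content-measurement": Page(
        "content-measurement", "cluster", {"b2b-content-strategy"}
    ),
    "content-distribution": Page("content-distribution", "cluster"),
}
print(audit_cluster(pages, "b2b-content-strategy"))
```

In a pile, most posts would show up in all three lists. In a graph, the lists should be empty or close to it.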

Most automation frameworks, including most of the popular AI writing tools, operate in pile mode. They're good at generating individual posts. They're terrible at building architecture.

The 40-Post Cluster Test

We've seen early data from teams running programmatic content workflows that makes this concrete. A 40-post cluster with deliberate cross-linking structure outperforms 40 standalone posts on the same topics by a measurable traffic multiple. The exact number varies by niche and domain authority, but the pattern is consistent: compounding value lives in the connections between articles, not in any single article.

Why? Three reasons.

Internal link equity distribution actually works. Pages that receive link equity from related, high-performing pages climb faster. If your linked pages aren't improving within 4-6 weeks, something is broken, whether that's links not surviving in live HTML or insufficient content quality on the target page.

Entity signals compound across related content. Search engines and LLMs both evaluate your authority on a topic by looking at how thoroughly and consistently you cover related concepts. One post about "B2B content strategy" tells Google you wrote about B2B content strategy. Twenty interconnected posts covering strategy, measurement, distribution, workflows, and tooling tell Google you might actually know what you're talking about.

AI Overviews prefer topical authorities. The sites getting cited in AI Overview summaries tend to be the ones with the deepest, most interconnected coverage of a topic. A standalone post, no matter how good, has a harder time earning those citations.
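The first of these reasons can be illustrated with a toy model. The sketch below uses a simplified PageRank-style iteration as a stand-in for "link equity". That is an assumption made for illustration, not a description of how Google scores pages today. The function name `link_equity`, the damping value, and the example slugs are all hypothetical.

```python
def link_equity(links, damping=0.85, iterations=50):
    """Simplified PageRank over an internal link graph.

    links maps each page slug to the list of slugs it links to.
    Every link target must also appear as a key in links.
    """
    pages = list(links)
    n = len(pages)
    score = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Pages with no outbound links spread their score evenly over all pages.
        dangling = sum(score[p] for p in pages if not links[p])
        new_score = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for p in pages:
            targets = links[p]
            for t in targets:
                # Each page splits its current score equally across its links.
                new_score[t] += damping * score[p] / len(targets)
        score = new_score
    return score


# Illustrative graph: a pillar linked from the home page, three cluster
# pages that each link back to the pillar and to one sibling.
graph = {
    "home": ["pillar"],
    "pillar": ["a", "b", "c"],
    "a": ["pillar", "b"],
    "b": ["pillar", "c"],
    "c": ["pillar", "a"],
}
for slug, value in sorted(link_equity(graph).items(), key=lambda kv: -kv[1]):
    print(f"{slug}: {value:.3f}")
```

In this toy graph the pillar ends up with the largest share, and each cluster page holds far more than it would as an unlinked standalone post. The real ranking signal is certainly more complicated, but the direction of the effect is the point.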

The Semantic Mesh Concept

There's an emerging approach that replaces manual, keyword-based internal linking with vector-based connections. The idea is to use embeddings to map the conceptual relationships between pages, so your internal link structure mirrors actual semantic proximity rather than whatever anchor text a writer guessed would be relevant.

This matters because the consumer of your content has changed. It's no longer just a human reader or a keyword-matching crawler. It's an LLM powering an AI Overview or a chatbot. These systems rely on semantic proximity, how closely related two concepts are in meaning, not just in text. If your internal linking structure doesn't mirror the semantic relationships between your entities, you're effectively hiding your expertise from the systems trying to rank you.

Is this approach fully mature? No. The tooling is early. Some implementations are clunky. And there's a real risk of over-engineering internal links to the point where they become noise rather than signal. But the direction is clearly right: architecture should follow semantics, not keywords.
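To make the idea concrete, here is a minimal sketch of embedding-based link suggestions. The three-dimensional vectors are hand-written placeholders. In practice they would come from whatever embedding model you use, which this sketch does not assume. The `suggest_links` function, its `top_k` and `min_similarity` parameters, and the slugs are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def suggest_links(embeddings, top_k=2, min_similarity=0.6):
    """For each page, suggest the most semantically similar other pages."""
    suggestions = {}
    for slug, vec in embeddings.items():
        scored = [
            (cosine(vec, other_vec), other)
            for other, other_vec in embeddings.items()
            if other != slug
        ]
        # Drop weak matches so unrelated pages are not linked just to fill a quota.
        scored = [pair for pair in scored if pair[0] >= min_similarity]
        # Highest similarity first, slug as a tie-breaker for stable output.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        suggestions[slug] = [other for _, other in scored[:top_k]]
    return suggestions


# Placeholder vectors standing in for real page embeddings.
embeddings = {
    "content-strategy": [0.9, 0.1, 0.0],
    "content-measurement": [0.8, 0.3, 0.1],
    "internal-linking": [0.7, 0.2, 0.4],
    "office-party-recap": [0.0, 0.1, 0.9],
}
for page, targets in suggest_links(embeddings).items():
    print(page, "->", targets)
```

The `min_similarity` floor is one guard against the over-engineering risk mentioned above: a page with no close neighbors gets no suggested links rather than forced ones.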

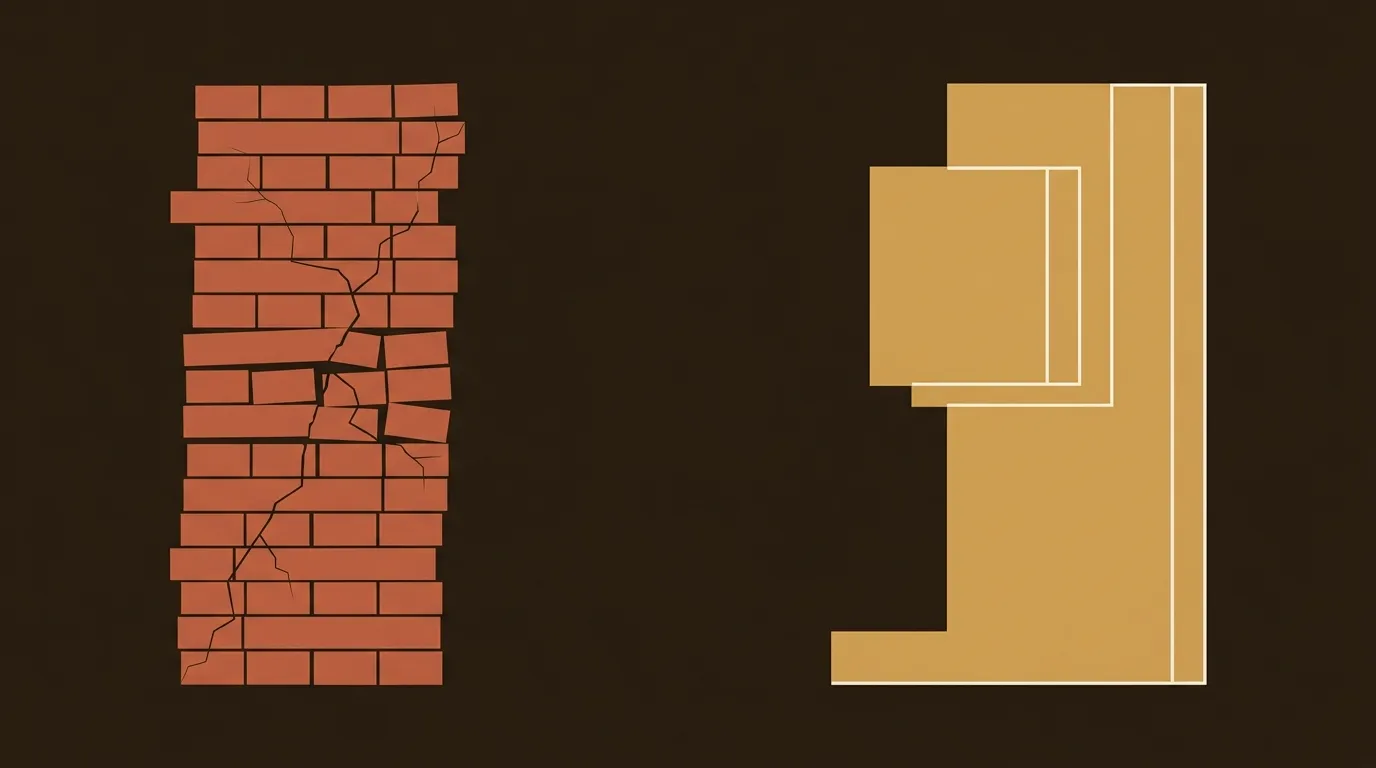

Why This Is Harder Than It Sounds

We'd be dishonest if we didn't acknowledge the operational difficulty here. Building a content graph is genuinely messy for small teams.

You need to define cluster structures before you start publishing, which requires upfront planning most time-starved teams skip. You need every piece of automated content to be aware of what else exists on the site and what's coming next, which means your content generation system needs a persistent context layer. And you need to maintain link integrity over time as content gets updated, redirected, or removed.
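A persistent context layer does not have to be elaborate. As a rough sketch, assuming the site inventory is available as a simple list, the generator could be handed a brief like the one below before it writes each new post. `PostRecord`, `build_context_brief`, the status values, and the wording of the brief are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PostRecord:
    slug: str
    title: str
    status: str  # "published" or "planned" (hypothetical values)


def build_context_brief(inventory, new_title):
    """Summarize what exists and what is coming, for the post about to be written."""
    published = [p for p in inventory if p.status == "published"]
    planned = [p for p in inventory if p.status == "planned"]

    lines = [f"New post: {new_title}"]
    lines.append("Link to these published posts where relevant:")
    lines += [f"- {p.title} (/{p.slug})" for p in published]
    lines.append("Do not duplicate these planned posts:")
    lines += [f"- {p.title}" for p in planned]
    return "\n".join(lines)


inventory = [
    PostRecord("b2b-content-strategy", "B2B Content Strategy", "published"),
    PostRecord("content-measurement", "Measuring Content Programs", "published"),
    PostRecord("content-distribution", "Content Distribution Channels", "planned"),
]
print(build_context_brief(inventory, "Content Workflows for Small Teams"))
```

The hard part in practice is keeping the inventory accurate as posts are updated, redirected, or removed. The brief is only as good as the list behind it.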

Most 1-3 person marketing teams barely have time to review AI-generated drafts before publishing. Asking them to also maintain a topical architecture map and enforce linking rules across every post? That's a workflow problem that hasn't been fully solved by any tool we've evaluated.

Some teams are handling this by front-loading the architecture work: defining pillar pages, mapping 8-20 subtopics with distinct intent, publishing the pillar plus the first 5-8 cluster pages, and interlinking everything immediately with descriptive anchors. Then they add to the cluster over time. It's not perfect, but it's a pattern that works.
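The front-loading pattern above can be turned into a quick pre-launch check. This is a minimal sketch that encodes the ranges mentioned in the text (8-20 subtopics, 5-8 cluster pages at launch). The function name, the data shapes, and the slugs are assumptions for illustration.

```python
def check_cluster_plan(subtopics, launch_batch):
    """Return a list of problems with a cluster plan (empty list means ready).

    subtopics maps each planned cluster slug to the search intent it targets.
    launch_batch lists the cluster slugs to publish alongside the pillar.
    """
    problems = []
    if not 8 <= len(subtopics) <= 20:
        problems.append(f"expected 8-20 subtopics, found {len(subtopics)}")

    intents = list(subtopics.values())
    if len(set(intents)) != len(intents):
        problems.append("two or more subtopics target the same intent")

    if not 5 <= len(launch_batch) <= 8:
        problems.append(f"expected 5-8 launch pages, found {len(launch_batch)}")

    unknown = [slug for slug in launch_batch if slug not in subtopics]
    if unknown:
        problems.append(f"launch pages missing from the plan: {unknown}")
    return problems


# Illustrative plan: eight subtopics with distinct intents, five at launch.
plan = {f"subtopic-{i}": f"intent-{i}" for i in range(1, 9)}
launch = ["subtopic-1", "subtopic-2", "subtopic-3", "subtopic-4", "subtopic-5"]
print(check_cluster_plan(plan, launch))
```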

Measuring at the Cluster Level

One more operational shift worth calling out: article-level metrics are becoming misleading.

If you're measuring success by individual post traffic, you're missing the compounding effects of cluster architecture. A post that gets 200 visits per month but sends meaningful link equity to three other posts in the cluster, boosting each of them by 15-20%, can be worth more than a post getting 300 visits with no outbound internal links, as long as the three linked posts carry enough traffic for that uplift to exceed the 100-visit gap.
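A rough arithmetic sketch makes the comparison easier to reason about. The baseline of 400 monthly visits for each linked post is an assumption, since the example does not state one, and the calculation credits the whole uplift to the linking post, which is a simplification. Function names are hypothetical.

```python
def cluster_contribution(own_visits, linked_baselines, uplift):
    """Own traffic plus the extra visits credited to the posts it links to."""
    return own_visits + sum(baseline * uplift for baseline in linked_baselines)


def break_even_baseline(visit_gap, linked_count, uplift):
    """Baseline traffic each linked post needs for the uplift to cover the gap."""
    return visit_gap / (linked_count * uplift)


# Assumed baseline: each of the three linked posts gets 400 visits per month.
linked = [400, 400, 400]
for uplift in (0.15, 0.20):
    total = cluster_contribution(200, linked, uplift)
    needed = break_even_baseline(300 - 200, len(linked), uplift)
    print(f"uplift {uplift:.0%}: contribution {total:.0f} visits, "
          f"break-even baseline {needed:.0f} visits per linked post")
```

Under these assumptions the connected post beats the 300-visit standalone post. If the linked posts were much smaller, it would not, which is one more reason to look at the cluster as a whole rather than at any single number.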

The goal isn't to collect metrics, but to connect signals to decisions: which content generates inbound leads, which influences the sales cycle, and which provides reassurance after purchase. That analysis only makes sense at the cluster level, where you can see how groups of related content work together to move a buyer through a research journey.

What This Means for 2026 Content Programs

The teams we see performing well after the March 2026 update share a few traits. They planned their topical architecture before they started generating content. They built (or configured) systems that enforce internal linking rules automatically. And they measure success at the cluster level, not the article level.

The teams struggling? They automated the easy parts, generation and publishing, without automating the hard part: architectural coherence.

The practical question for any B2B team running an automated content program right now isn't whether to keep automating. It's whether your system knows about the other 39 posts in the cluster when it writes post number 40. If it doesn't, you're building a pile. And piles don't compound.
