A SaaS company we've been tracking saw 527% year-over-year growth in sessions from ChatGPT and Claude last quarter. Their traditional organic traffic? Flat. Their paid search costs? Up 18%. The math tells a clear story, and it's the same story IDC is projecting at scale: brands will allocate five times more budget to LLM optimization than traditional SEO by 2029.

But here's what nobody's actually modeling out: what does it cost to earn a single citation in an AI-generated response? And is that number even trackable for a team of two?

We think it is. We've spent the last few months building a unit economics model around this question, and the numbers are strange enough to share.

The Metric Swap Nobody Budgeted For

Cost-per-click made sense in a world where Google sent you traffic and you paid (directly or indirectly) to capture it. That model is breaking. Gartner estimates traditional search clicks will contract 25% by 2026 as conversational interfaces absorb attention. Meanwhile, 68% of marketers are already shifting budget toward AI-search visibility.

Cost-per-citation is the replacement metric. It answers a simple question: how much did you spend on content production and optimization to appear as a cited source in an LLM response?

The reason this metric matters more than vanity "brand mention" tracking is conversion quality. Semrush's recent AI search study found that AI search visitors are 4.4x more valuable than traditional organic visitors. One dataset showed LLM referral traffic converting at 1.66% for signups versus 0.15% from standard organic. That's not a rounding error. That's an entirely different funnel shape.

Building the Cost-Per-Citation Formula

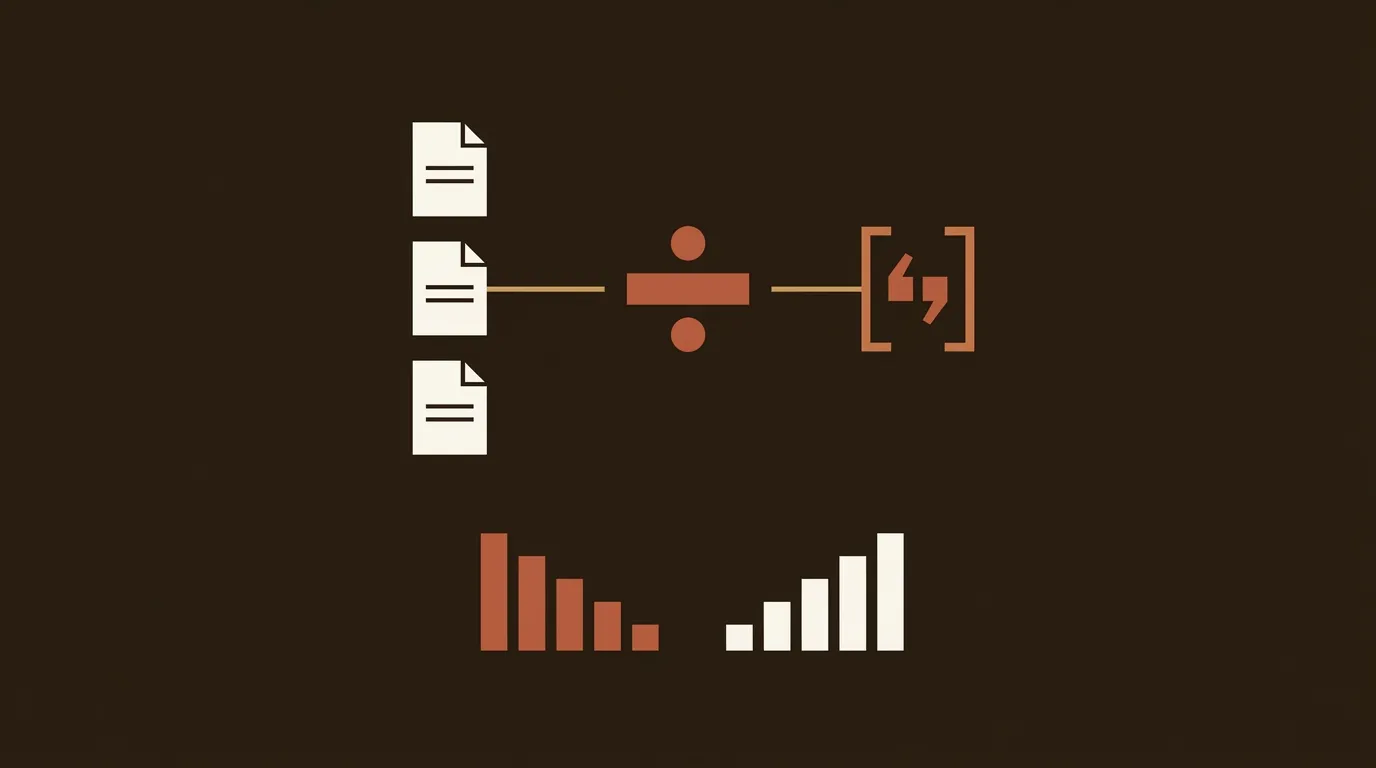

Here's where it gets practical. The formula itself is straightforward:

Cost-Per-Citation = Total Content Production Cost ÷ Number of Confirmed Citations Across AI Platforms

The hard part is the denominator. You need a measurement system before you can optimize anything.

For teams spending less than $2,000/month on content, a manual approach works and costs almost nothing. Run 25 to 50 prompts relevant to your product category across ChatGPT, Perplexity, Gemini, and Claude once per week. Log whether each response cites your domain. Calculate citation frequency as a percentage of total prompts. Previsible's 2025 study confirmed that this manual method produces the same strategic insights as enterprise platforms at roughly 1/20th the cost.
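A minimal sketch of that weekly log in Python, assuming each prompt run is recorded as one row. The `PromptResult` type, the function names, the sample prompt, and the $1,500 cost figure are illustrative assumptions, not part of any tool mentioned here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PromptResult:
    """One row of the weekly log: a single prompt run on a single platform."""
    platform: str
    prompt: str
    cited: bool  # True if the response cited your domain


def citation_rate(results: List[PromptResult]) -> float:
    """Share of logged prompt runs that cited the domain (0.0 to 1.0)."""
    if not results:
        return 0.0
    return sum(r.cited for r in results) / len(results)


def cost_per_citation(total_content_cost: float,
                      results: List[PromptResult]) -> Optional[float]:
    """Total content production cost divided by confirmed citations."""
    citations = sum(r.cited for r in results)
    if citations == 0:
        return None  # the metric is undefined until the first citation appears
    return total_content_cost / citations


# Illustrative log entries, not real measurements.
log = [
    PromptResult("ChatGPT", "best invoicing tool for freelancers", True),
    PromptResult("Perplexity", "best invoicing tool for freelancers", False),
    PromptResult("Gemini", "best invoicing tool for freelancers", False),
    PromptResult("Claude", "best invoicing tool for freelancers", True),
]

print(f"citation rate: {citation_rate(log):.0%}")
print(f"cost per citation: {cost_per_citation(1500.0, log)}")
```

Returning `None` when nothing has been cited yet avoids a division by zero and matches the early-stage reality, where the metric simply has no value.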

Once your measurement time starts exceeding your optimization time, tools like Otterly ($99/month) or Profound ($299/month) automate the tracking. But don't jump there first. We've seen teams buy monitoring software before they had anything to monitor.

What "Good" Looks Like by Stage

The benchmarks are still forming, but Previsible's data gives us something to work with. In your first six months of generative engine optimization, any citation at all is a positive signal. Between months six and twelve, being cited in 10 to 20% of your tracked queries is strong. Beyond twelve months, 20 to 30% puts you in a competitive position for your category.

So if you're producing 10 articles per month at $300 each ($3,000 total), and after six months you're cited in 15% of your 50 tracked queries (that's 7.5 citations per measurement cycle), your cost-per-citation lands around $400. Expensive? Compared to what? A single branded paid search click in competitive B2B SaaS categories runs $15 to $40, so on a click-equivalent basis a citation pays for itself once it drives roughly 10 to 27 high-intent visits, and sooner if those visitors convert at the higher rates AI referral traffic tends to show.
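Here is the same arithmetic as a short Python sketch, so you can swap in your own numbers. The variable names are illustrative, and the break-even helper is an added convenience that assumes a visit earned through a citation is worth about one paid click.

```python
import math

# Inputs from the worked example in the text.
articles_per_month = 10
cost_per_article = 300          # dollars
tracked_queries = 50
citation_rate_pct = 15          # cited in 15% of tracked queries

monthly_cost = articles_per_month * cost_per_article      # 3000
citations = tracked_queries * citation_rate_pct / 100     # 7.5
cost_per_citation = monthly_cost / citations              # 400.0


def break_even_visits(cost_per_citation: float, click_price: float) -> int:
    """Visits one citation must drive to match the cost of buying those clicks."""
    return math.ceil(cost_per_citation / click_price)


print(f"cost per citation: ${cost_per_citation:,.0f}")              # $400
print("break-even at $40/click:", break_even_visits(cost_per_citation, 40))  # 10
print("break-even at $15/click:", break_even_visits(cost_per_citation, 15))  # 27
```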

The Platform Problem: One Content Piece, Four Different Algorithms

This is the genuinely messy part. LLM platforms do not share a citation playbook, and the divergence is wider than most people assume.

ZipTie.dev's analysis of cross-platform citation behavior found that only 11% of domains are cited by both ChatGPT and Perplexity for the same query. Let that sink in. 71% of all cited sources appeared on just one platform.

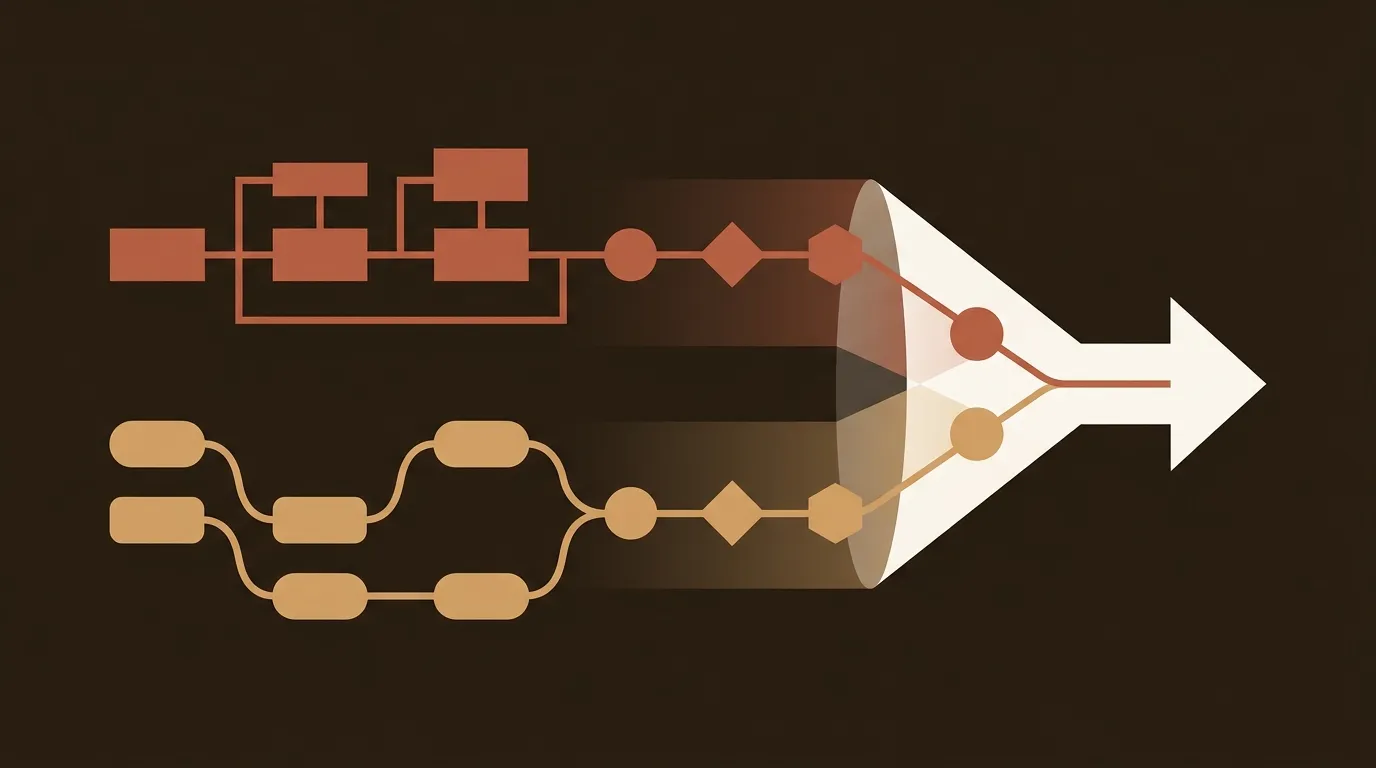

The platform preferences break down like this:

- ChatGPT leans heavily on Wikipedia (47.9% of top citations) and established reference pages

- Perplexity pulls disproportionately from Reddit (46.7%) and recent forum discussions

- Google AI Overviews favors YouTube (23.3%) and structured content

- Claude cites blogs most frequently (43.8%)

And here's a number that should change how you think about this: 28.3% of ChatGPT's most-cited pages have zero organic visibility in traditional search. Your SERP ranking does not predict your AI citation rate. Full stop.

For a small team, the implication is that you need to pick your platform battles. Trying to optimize for all four simultaneously with constrained resources is a losing strategy. If your buyers skew toward using Perplexity for research (common in technical B2B), focus there. If they're asking ChatGPT, focus there.

What Actually Moves Citation Rate (Without a Separate Production Track)

The operational question most teams ask is whether LLM-optimized content requires a completely different workflow. Our answer: no, but it requires different emphasis within your existing workflow.

Three specific content decisions have outsized impact on citation probability.

Proprietary Data Is a 3.7x Multiplier

Content that includes original data is 3.7 times more likely to be cited by LLMs. This is the single highest-ROI change you can make. And "original data" doesn't require a research department. It means running a 50-person survey of your customers. It means publishing your own benchmarks. It means sharing internal metrics that others can reference.

We've seen a 12-person SaaS company increase their citation rate from 4% to 19% in three months by adding one proprietary data point to each blog post. The data didn't need to be groundbreaking. It needed to be citable, meaning specific, attributed, and not available elsewhere.

Structured Answer Blocks Beat Long-Form Narrative

LLMs extract information in chunks. Content with clear hierarchical formatting, including headings, bullets, and tables, is 28 to 40% more likely to be cited. The specific pattern that works best: a direct answer in 40 to 60 words, followed by supporting evidence, followed by source attribution.

This isn't a radical departure from good SEO writing. It's just more disciplined. Every section should have a self-contained answer block that an LLM can extract without needing the surrounding context.
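One way to enforce the 40-to-60-word answer block during editing is a small length check on each section's opening paragraph. This is a rough sketch: the function names are hypothetical, and counting whitespace-separated words is a simplifying assumption.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def answer_block_status(paragraph: str,
                        min_words: int = 40,
                        max_words: int = 60) -> str:
    """Return 'too short', 'ok', or 'too long' for a section's opening paragraph."""
    n = word_count(paragraph)
    if n < min_words:
        return "too short"
    if n > max_words:
        return "too long"
    return "ok"


# Illustrative draft opening for a section.
opening = (
    "Cost-per-citation is total content production cost divided by the number "
    "of confirmed citations across AI platforms."
)
print(word_count(opening), answer_block_status(opening))
```

A check like this only covers length. Whether the block actually answers the question on its own still needs an editor's read.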

Claim-Level Attribution Signals Trust

LLMs are trained to prefer content that shows its work. When you make a claim, link to the source. When you cite a number, name where it came from. This is basic editorial hygiene, but most B2B blogs skip it. The models notice.

Kevin Indig's analysis of over 7,000 citations across AI platforms found that content depth, readability, and clear attribution had the biggest impact on citation probability. Not domain authority. Not backlink count. Attribution.

Freshness Is Platform-Dependent (and This Changes Your Update Calendar)

One workflow decision that most teams get wrong: applying a single content refresh cadence across all platforms.

Perplexity applies aggressive freshness penalties, with citation rates dropping to 37% after content passes the 180-day mark. That means Perplexity-targeted content needs quarterly updates. ChatGPT and Google AI Overviews are more forgiving of older foundational content; annual refreshes on high-priority pages work, supplemented by monthly updates on trending topics.

For a small team, this means tagging your content library by target platform and setting different update schedules. It's more work than a single "refresh everything every 6 months" policy. But it concentrates effort where it actually affects citation rate.
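A sketch of what platform-tagged scheduling could look like. The 90-day and 365-day intervals are assumptions that translate "quarterly" and "annual" from the paragraph above, and the page records and URLs are made up for illustration.

```python
from datetime import date, timedelta

# Assumed refresh intervals per target platform, in days.
REFRESH_DAYS = {
    "perplexity": 90,            # quarterly
    "chatgpt": 365,              # annual for foundational pages
    "google_ai_overviews": 365,  # annual for foundational pages
}


def next_refresh(last_updated: date, platform: str) -> date:
    """Date by which a page should be refreshed for its target platform."""
    return last_updated + timedelta(days=REFRESH_DAYS[platform])


def due_for_refresh(pages: list, today: date) -> list:
    """URLs of pages whose refresh date has arrived or passed."""
    return [
        p["url"]
        for p in pages
        if next_refresh(p["last_updated"], p["platform"]) <= today
    ]


# Hypothetical content library.
pages = [
    {"url": "/blog/pricing-benchmarks", "platform": "perplexity",
     "last_updated": date(2025, 1, 1)},
    {"url": "/blog/what-is-geo", "platform": "chatgpt",
     "last_updated": date(2025, 1, 1)},
]

print(due_for_refresh(pages, today=date(2025, 6, 1)))
```

Run against a spreadsheet export once a week, a list like this becomes the update queue.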

Setting Up Attribution in GA4

You can't calculate cost-per-citation without tracking AI referral traffic separately. The setup takes about 15 minutes.

In GA4, create a custom channel group with a new channel called "AI Search" that captures referrals from chat.openai.com, chatgpt.com, perplexity.ai, gemini.google.com, claude.ai, and copilot.microsoft.com. Within two to three months you'll have a baseline; within six months you'll have trend data that informs content investment decisions.
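If you define the channel with a regex condition on the session source, it helps to test the pattern offline first. The sketch below builds a pattern from the six hostnames listed above and checks sample sources against it. The `channel_for` helper and the "Other" fallback label are assumptions for illustration, and you should confirm how your GA4 property matches regex conditions before pasting the pattern in.

```python
import re

# Hostnames from the text that should land in the "AI Search" channel.
AI_SEARCH_HOSTS = [
    "chat.openai.com",
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "claude.ai",
    "copilot.microsoft.com",
]

# Match the bare host or any subdomain of it (e.g. www.perplexity.ai),
# but not lookalikes such as notchatgpt.com.
AI_SEARCH_PATTERN = re.compile(
    r"(^|\.)(" + "|".join(re.escape(h) for h in AI_SEARCH_HOSTS) + r")$"
)


def channel_for(source: str) -> str:
    """Classify a referral source hostname as 'AI Search' or 'Other'."""
    if AI_SEARCH_PATTERN.search(source.strip().lower()):
        return "AI Search"
    return "Other"


print(AI_SEARCH_PATTERN.pattern)
for s in ["chatgpt.com", "www.perplexity.ai", "google.com", "notchatgpt.com"]:
    print(s, "->", channel_for(s))
```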

To put a dollar value on that traffic, multiply AI referral visits by the average CPC of the keywords driving those visits. If you're getting 200 AI referral visits per month on queries where the average CPC is $3, that's $600 per month in equivalent paid search value. Compare that to your content production cost and you have an ROI estimate grounded in market prices, not a guess.
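The same calculation as a sketch. The function names are illustrative, and the $3,000 monthly content cost is borrowed from the earlier worked example purely to show the comparison.

```python
def equivalent_paid_value(ai_visits: int, avg_cpc: float) -> float:
    """What the same visits would have cost as paid search clicks."""
    return ai_visits * avg_cpc


def value_to_cost_ratio(value: float, content_cost: float) -> float:
    """Equivalent paid value per dollar of content spend."""
    return value / content_cost


value = equivalent_paid_value(ai_visits=200, avg_cpc=3.0)
print(f"equivalent paid value: ${value:,.0f}")   # $600

# Assumed monthly content cost, taken from the earlier example.
ratio = value_to_cost_ratio(value, content_cost=3000.0)
print(f"value per content dollar: {ratio:.2f}")
```

Tracking the ratio month over month shows whether citation-driven traffic is growing faster than spend.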

The Single Number for 2026

Small B2B teams need clarity, not complexity. Track three numbers: citation rate by platform, content production cost per piece, and conversion rate from AI referral traffic. The first two give you cost-per-citation, the third tells you what each citation is worth, and together they give you a budget allocation framework.

The teams building this measurement infrastructure now are going to have six months of compounding data by the time their competitors start asking what "GEO" means. That data compounds in a specific way, too. The architecture of LLMs retains users rather than routing them, which means the traffic that does escape these walled gardens is deliberate and high-intent. Every citation you earn is harder-won than a traditional SERP click. And worth more.

We don't know exactly what the cost-per-citation benchmarks will settle at across industries. Nobody does yet. But the teams that start measuring now will be the ones who set those benchmarks.

References

- IDC Blog, "Marketing's New Imperative: The Shift from SEO to LLM Optimization" -- https://blogs.idc.com/2025/09/12/marketings-new-imperative-the-shift-from-seo-to-llm-optimization/

- Superframeworks, "Best LLM SEO Tools 2025" -- https://superframeworks.com/blog/best-llm-seo-tools

- MentorCruise, "Transform Your SEO Strategy for the AI Era" -- https://mentorcruise.com/blog/transform-your-seo-strategy-for-the-ai-era-complete-llm-optimization-guide/

- Previsible, "2025 State Of AI Discovery Report" -- https://previsible.io/seo-strategy/ai-seo-study-2025/

- ZipTie.dev, "How Different AI Platforms Cite the Same Source Differently" -- https://ziptie.dev/blog/how-different-ai-platforms-cite-the-same-source-differently/