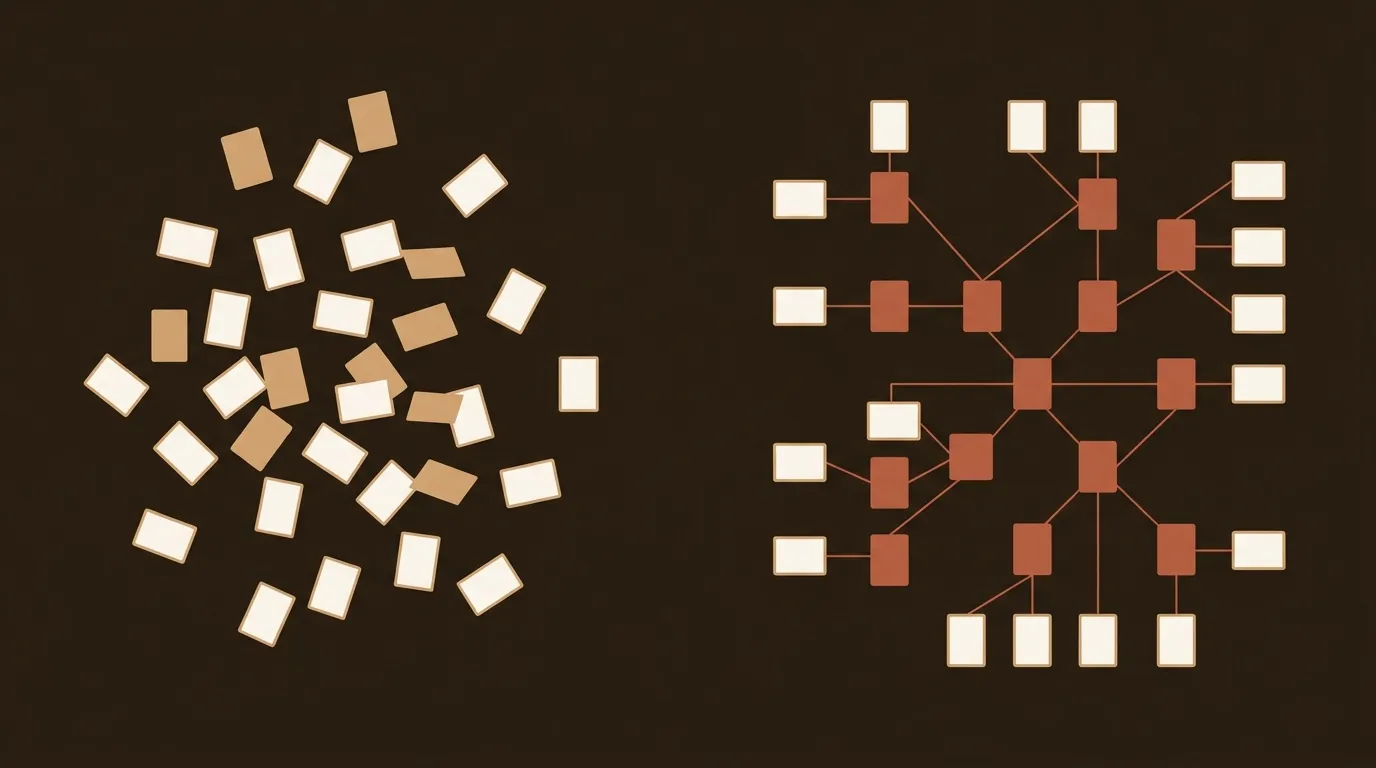

Most B2B marketing teams still plan their content around a Google Sheet with dates, topics, and a color-coded status column. Every Monday, someone asks, "What's going live this week?" And every Friday, the answer is some version of "We pushed two posts, we're behind on three, and nobody checked if last month's articles are still ranking."

That workflow made sense five years ago. It doesn't anymore.

The Calendar Was Built for a Different Search Engine

The editorial calendar as a strategy tool assumes a specific model of how content gets discovered: you publish, Google crawls, you rank (or you don't), and over time your domain authority lifts everything. Publish consistently, build topical clusters, wait for compounding traffic.

That model is fracturing. Google's AI Overviews now appear in roughly 47% of all search queries, pulling synthesized answers directly into the SERP. ChatGPT search, Perplexity, and similar tools are creating a parallel discovery layer where content gets cited, not clicked. And the signals that determine whether your blog post appears in an AI Overview have almost nothing to do with your publishing cadence.

A 2024 Ahrefs study found that refreshed content outperformed net-new posts in 65% of cases when measured by organic traffic gains within 90 days. Not because the new content was bad, but because the refreshed content already had backlinks, citation history, and topical authority baked in.

So the question isn't "How many posts do we publish this month?" It's "Which existing posts are decaying, which are gaining citation velocity, and where is there an open authority window we can fill?"

Cadence Intuition vs. Performance Signals

We've talked to dozens of marketing managers running 1-3 person teams. Almost all of them set publishing cadence the same way: gut feel plus whatever the CEO said in a planning meeting. "Two posts a week sounds right." "Our competitors publish daily." "HubSpot says companies that blog 16+ times per month get 3.5x more traffic."

That HubSpot stat is real, by the way. But it's from a 2023 benchmark report that measured volume against traffic without controlling for content quality, refresh cycles, or AI-driven discovery. Publishing 16 mediocre posts per month in 2026 is a waste of budget. Publishing 4 posts with high citation potential while refreshing 8 decaying ones is a different kind of math entirely.

The problem with cadence-based planning is that it treats every publishing slot as equal. Tuesday's post gets the same weight whether it's targeting a keyword with rising search demand or one that's been absorbed into an AI Overview snippet. A dynamic content queue, built on live performance signals, would weigh these very differently.

What does "live performance signals" actually mean in practice? Three things worth tracking.

Ranking velocity. Not just where a post ranks today, but how quickly it's moving. A post that jumped from position 18 to position 9 in two weeks is telling you it has momentum: a content refresh, more internal links, or a supporting article could push it into the top 5. A post that's been sitting at position 7 for six months with no movement might be capped by a stronger competitor's topical depth.
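One way to put a number on this is positions gained per week between two snapshots. The sketch below is only an illustration: the `RankSnapshot` type and the `ranking_velocity` function are hypothetical names, and it assumes you already export average positions from whatever rank tracker you use.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RankSnapshot:
    days_ago: int     # how many days before today the position was recorded
    position: float   # average SERP position (1 = top of the page)


def ranking_velocity(snapshots: List[RankSnapshot]) -> float:
    """Positions gained per week between the oldest and newest snapshot.

    Positive means the post is climbing, negative means it is slipping.
    """
    if len(snapshots) < 2:
        return 0.0
    ordered = sorted(snapshots, key=lambda s: s.days_ago, reverse=True)
    oldest, newest = ordered[0], ordered[-1]
    span_days = oldest.days_ago - newest.days_ago
    if span_days == 0:
        return 0.0
    return (oldest.position - newest.position) / span_days * 7


# The example from the text: position 18 to position 9 in two weeks.
velocity = ranking_velocity([RankSnapshot(14, 18), RankSnapshot(0, 9)])
print(velocity)  # 4.5 positions gained per week
```

A post parked at position 7 for six months would score 0.0 here, which is the "no movement" case described above.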

Refresh ROI. This is the traffic or conversion lift per hour invested in updating an existing post vs. writing a new one from scratch. We've seen cases where a 45-minute refresh (updated stats, new H2 section, improved meta description) generated more incremental traffic than a brand-new 2,000-word article that took 6 hours to produce. Not always. But often enough that ignoring refresh ROI is leaving real results on the table.
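As a rough sketch, refresh ROI is just incremental lift divided by hours invested. The function name `lift_per_hour` and the visit counts below are made up for illustration; only the 45-minute and 6-hour durations come from the example above.

```python
def lift_per_hour(incremental_visits: float, hours_invested: float) -> float:
    """Incremental visits (or conversions) earned per hour of work."""
    if hours_invested <= 0:
        raise ValueError("hours_invested must be positive")
    return incremental_visits / hours_invested


# Hypothetical numbers: a 45-minute refresh vs. a 6-hour new article.
refresh = lift_per_hour(incremental_visits=300, hours_invested=0.75)
new_post = lift_per_hour(incremental_visits=600, hours_invested=6)

print(refresh, new_post)  # 400.0 100.0
```

In this made-up case the new article still brings in more total visits, but the refresh returns four times as much per hour. That per-hour view is what matters when content hours are the scarce resource.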

AI citation exposure. This one is newer and harder to measure. Tools like Semrush and Ahrefs are beginning to track AI Overview inclusion, and Semrush's 2025 data shows that content cited in AI Overviews tends to have specific structural patterns: clear definitions, data-backed claims, and authoritative sourcing. Your editorial calendar says nothing about whether your upcoming posts are optimized for citation. A dynamic queue would prioritize topics where citation windows are open.

Why Small Teams Are the Most Affected

Enterprise content operations can afford to publish at high cadence and sort through the data afterward. They have dedicated SEO analysts, content strategists, and editorial leads. If 30% of their output underperforms, they absorb the loss.

A 2-person marketing team publishing 8 posts a month doesn't have that luxury. Every post represents a significant chunk of their monthly output. And here's the part that rarely gets said out loud: most small teams are spending 70-80% of their content time on production (writing, editing, formatting, publishing) and only the remaining 20-30% on strategy (what to write, why, and what to do with it after).

That ratio is backwards.

The teams getting the best results per post are spending more time on the "what and why" than the "how." They're checking Search Console weekly for ranking velocity changes. They're running content audits quarterly (or monthly). They're treating their existing content library as a portfolio that needs active management, not a graveyard of past efforts.

But this is genuinely messy. There's no perfect framework for deciding whether to refresh a post from 2023 or write a new one targeting a trending subtopic. The signals sometimes conflict. A post might be decaying in organic traffic but gaining citations in AI Overviews. What do you do? We don't have a clean answer. Nobody does yet.

The Editorial Calendar Isn't Dead. It's Just Demoted.

Let's be precise about the claim here. We're not saying stop planning content. Planning is good. Having a list of upcoming topics, assigned owners, and rough timelines still matters for coordination, especially if you're working with freelancers or cross-functional teams.

What we are saying is that the calendar should be an output of your strategy, not the strategy itself.

The strategy layer should be a dynamic queue that answers three questions every week:

- Which published posts are losing rankings and need intervention?

- Which topics have an open authority window right now (rising search demand, weak competition, AI Overview gaps)?

- What's the highest-ROI action for our next available content slot: new post, refresh, or supporting asset?

The calendar becomes the scheduling layer beneath that, handling logistics. "This refresh goes live Wednesday, this new post Friday, this supporting infographic gets added to the existing cluster next Monday."

That's a meaningful difference from "We publish Tuesdays and Thursdays, here are the topics for Q3."

What a Dynamic Content Queue Looks Like in Practice

Say you're a B2B SaaS company with 120 published blog posts. Here's a simplified version of how a signal-driven queue might work.

Every Monday, you pull three data sets: Search Console ranking changes (last 14 days), Ahrefs or Semrush traffic trends for your top 30 posts, and a spot check of AI Overview inclusion for your primary keywords.

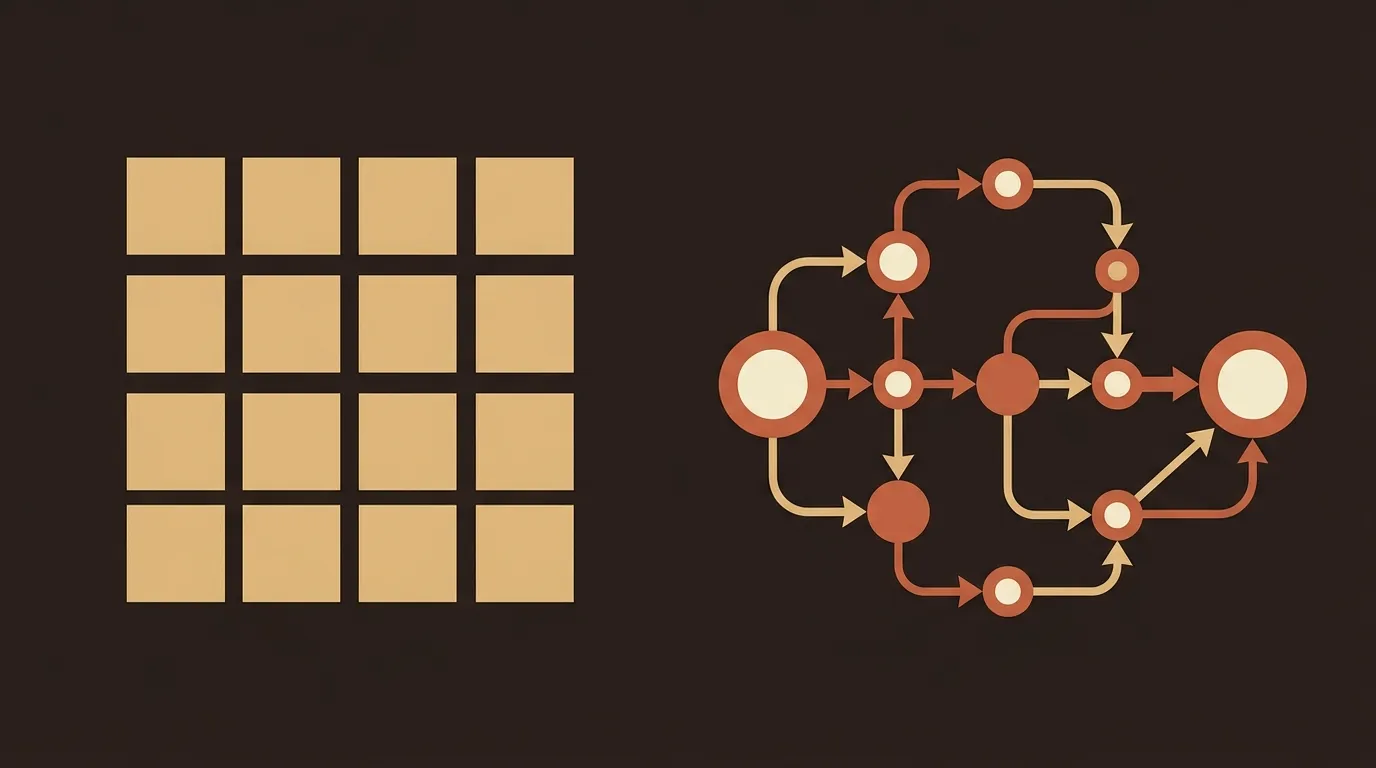

You sort your content into four buckets:

- Growing: posts gaining rankings or traffic. Leave them alone or add internal links to accelerate.

- Stable: posts holding position. Low priority.

- Decaying: posts losing rankings or traffic. Candidates for refresh.

- Missing: topics where you have no coverage but the signals (search volume, competition, AI citation gaps) suggest you should.
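The four buckets can be sketched as a small classifier. Everything here is an assumption made for illustration: the `TopicSignal` fields, the thresholds (2 positions, 10% traffic), and the topic names. It also assumes that any topic without a post has already been judged worth covering, so it goes straight to Missing.

```python
from dataclasses import dataclass
from enum import Enum


class Bucket(Enum):
    GROWING = "growing"
    STABLE = "stable"
    DECAYING = "decaying"
    MISSING = "missing"


@dataclass
class TopicSignal:
    topic: str
    has_post: bool                   # False means no coverage yet
    position_gain: float = 0.0       # positions gained in 14 days (negative = lost)
    traffic_change_pct: float = 0.0  # traffic change over the same window


def classify(s: TopicSignal, rank_threshold: float = 2.0,
             traffic_threshold: float = 10.0) -> Bucket:
    if not s.has_post:
        return Bucket.MISSING
    # Decay is checked first, so a post with mixed signals lands in DECAYING
    # and gets a human look rather than being left alone.
    if s.position_gain <= -rank_threshold or s.traffic_change_pct <= -traffic_threshold:
        return Bucket.DECAYING
    if s.position_gain >= rank_threshold or s.traffic_change_pct >= traffic_threshold:
        return Bucket.GROWING
    return Bucket.STABLE


signals = [
    TopicSignal("onboarding checklist", True, position_gain=4, traffic_change_pct=15),
    TopicSignal("pricing models", True, position_gain=0.5, traffic_change_pct=-2),
    TopicSignal("churn benchmarks", True, position_gain=-3, traffic_change_pct=-20),
    TopicSignal("ai overview audit", False),
]
buckets = {s.topic: classify(s) for s in signals}
for topic, bucket in buckets.items():
    print(f"{topic}: {bucket.value}")
```

Checking decay before growth is a judgment call. Flipping the order would favor momentum over risk, and neither choice resolves the genuinely conflicting cases.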

Your weekly content allocation then becomes a ratio. Maybe 40% refresh, 40% new-on-missing-topics, 20% supporting assets for growing posts. That ratio shifts based on what the data shows.
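Turning a ratio into whole content slots takes a little rounding care. The sketch below uses the largest-remainder method, which is our choice for illustration rather than anything prescribed above. The function name `allocate_slots` and the slot counts are hypothetical.

```python
import math
from typing import Dict


def allocate_slots(total_slots: int, ratios: Dict[str, float]) -> Dict[str, int]:
    """Split whole content slots across work types by ratio.

    Uses the largest-remainder method so the counts always sum to total_slots.
    """
    if total_slots < 0:
        raise ValueError("total_slots must be non-negative")
    if abs(sum(ratios.values()) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    raw = {kind: total_slots * share for kind, share in ratios.items()}
    # The small epsilon guards against float error such as 2.9999999999.
    slots = {kind: math.floor(value + 1e-9) for kind, value in raw.items()}
    leftover = total_slots - sum(slots.values())
    # Hand any remaining slots to the work types with the largest fractions.
    by_remainder = sorted(raw, key=lambda kind: raw[kind] - slots[kind], reverse=True)
    for kind in by_remainder[:leftover]:
        slots[kind] += 1
    return slots


ratios = {"refresh": 0.4, "new": 0.4, "supporting": 0.2}
print(allocate_slots(10, ratios))  # {'refresh': 4, 'new': 4, 'supporting': 2}
print(allocate_slots(8, ratios))   # {'refresh': 3, 'new': 3, 'supporting': 2}
```

When the data shifts, only the `ratios` dictionary changes. The slot math stays the same.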

This is not a new idea. SEO-mature companies have been doing versions of this for years. A 2025 report from Conductor showed that organizations with formal refresh cycles saw 35% higher organic traffic year-over-year than those focused primarily on net-new production. The difference is that in 2026, the signals you need to track include AI citation patterns, not just traditional SERP positions.

The Hard Part Nobody Wants to Admit

Building a dynamic queue sounds great in a blog post. Actually doing it requires discipline and tooling that most small teams don't have.

You need to check ranking data weekly. You need a system for scoring refresh candidates. You need to track which posts have been refreshed, when, and what the result was. And you need someone who can look at the data and make judgment calls, because the signals are often ambiguous.
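A scoring system does not need to be elaborate to be useful. The sketch below ranks refresh candidates by visits lost per estimated hour of work and skips anything refreshed recently. The `RefreshCandidate` fields, the 90-day cooldown, the URLs, and the numbers are all assumptions for illustration, not a recommended formula.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class RefreshCandidate:
    url: str
    visits_lost_30d: int                   # traffic lost vs. the prior 30 days
    est_hours: float                       # estimated effort to refresh
    last_refreshed: Optional[date] = None  # None if never refreshed


def refresh_score(c: RefreshCandidate, today: date, cooldown_days: int = 90) -> float:
    """Visits at stake per hour of work; 0 while the post is in cooldown."""
    if c.last_refreshed is not None and (today - c.last_refreshed).days < cooldown_days:
        return 0.0  # refreshed recently, so give the last update time to show results
    if c.est_hours <= 0:
        raise ValueError("est_hours must be positive")
    return c.visits_lost_30d / c.est_hours


today = date(2026, 1, 15)  # fixed date so the example is reproducible
candidates = [
    RefreshCandidate("/blog/a", visits_lost_30d=400, est_hours=2),
    RefreshCandidate("/blog/b", visits_lost_30d=300, est_hours=0.75),
    RefreshCandidate("/blog/c", visits_lost_30d=900, est_hours=1,
                     last_refreshed=date(2025, 12, 1)),
]
ranked = sorted(candidates, key=lambda c: refresh_score(c, today), reverse=True)
print([c.url for c in ranked])  # ['/blog/b', '/blog/a', '/blog/c']
```

The score only orders the list. Someone still has to look at the top few entries and make the call, because the signals behind `visits_lost_30d` can be ambiguous.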

Most teams will try this for three weeks and then revert to the calendar because it's simpler. We've seen it happen. The calendar wins not because it's better, but because it's easier to follow. That's an honest assessment of the adoption challenge.

The teams that make it stick tend to automate the data-gathering part. Whether that's a custom dashboard, an API pulling from Search Console and Ahrefs, or one of the growing number of content intelligence platforms, the pattern is the same: reduce the manual overhead of signal collection so humans can focus on the judgment calls.

Where This Goes Next

AI-driven search is still in its awkward adolescence. Google keeps tweaking AI Overviews. ChatGPT's search integration is growing fast but remains inconsistent. New citation patterns are emerging that nobody fully understands yet.

The one thing we're confident about: a static editorial calendar won't be the right interface for content strategy in 18 months. It'll still exist as a coordination tool, the same way a spreadsheet still exists even after you adopt a CRM. But the strategic decisions (what to write, what to refresh, and what to let go) will be driven by live signals, not quarterly planning sessions.

Small B2B teams that figure this out early will punch above their weight. The rest will keep publishing on Tuesdays and wondering why the traffic charts are flat.

References

- Semrush, "Google AI Overviews Study" (2025): https://www.semrush.com/blog/google-ai-overviews-study/

- Ahrefs, "Content Refresh Study" (2024): https://ahrefs.com/blog/content-refresh/

- HubSpot, "How Often Should You Blog?" (2023): https://blog.hubspot.com/marketing/blogging-frequency

- Conductor, "Content Refresh Strategy" (2025): https://www.conductor.com/academy/content-refresh-strategy/

- Search Engine Land, "ChatGPT Search Usage Growth" (2025): https://searchengineland.com/chatgpt-search-usage-growth-2025-454218